AI GTM Orchestration: Why Sales and Marketing Are Becoming One Revenue Learning System

Everything we know about software, workflows, and functional roles is collapsing into a more natural state of flow.

For the last decade, most business software was built around rigid workflows.

A salesperson lived in Salesforce, Outreach, Gong, LinkedIn, and Slack.

A marketer lived in HubSpot, Canva, Webflow, analytics tools, and ad platforms.

A customer success manager lived in call recordings, product analytics, support tickets, spreadsheets, and CRM notes.

Each tool had a fixed shape.

But the actual problems inside a company do not have fixed shapes.

A company does not wake up and say, “I need to send 200 more emails.”

It says, “I need more pipeline.”

“I need to retain this customer.”

“I need to take market share.”

The work is not the tool. The work is the problem.

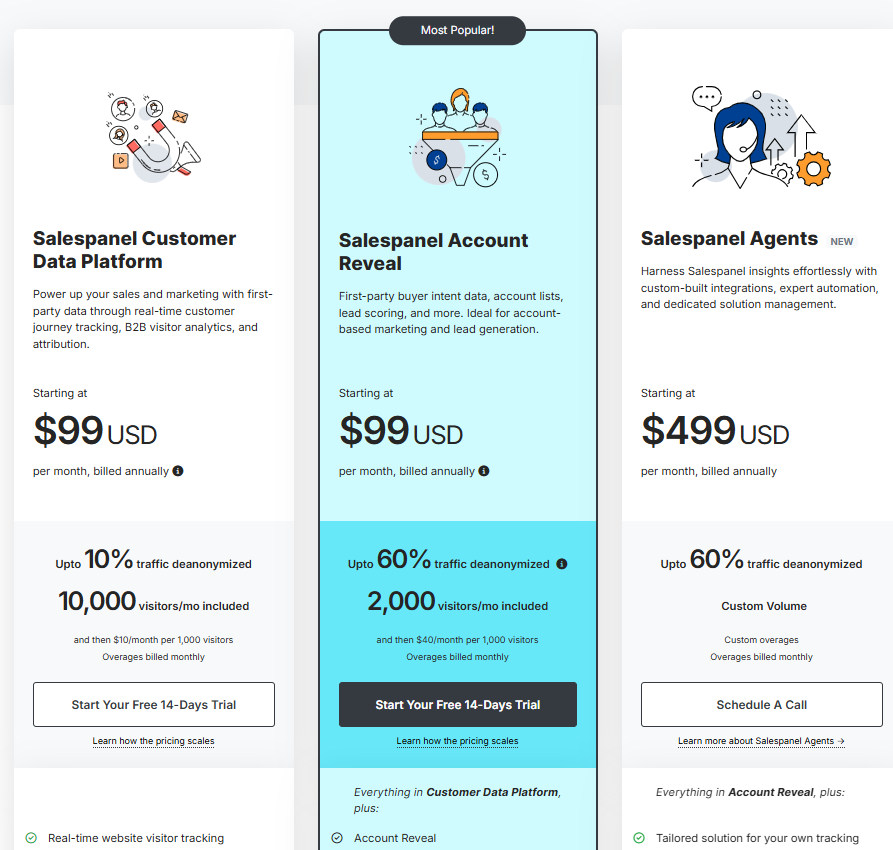

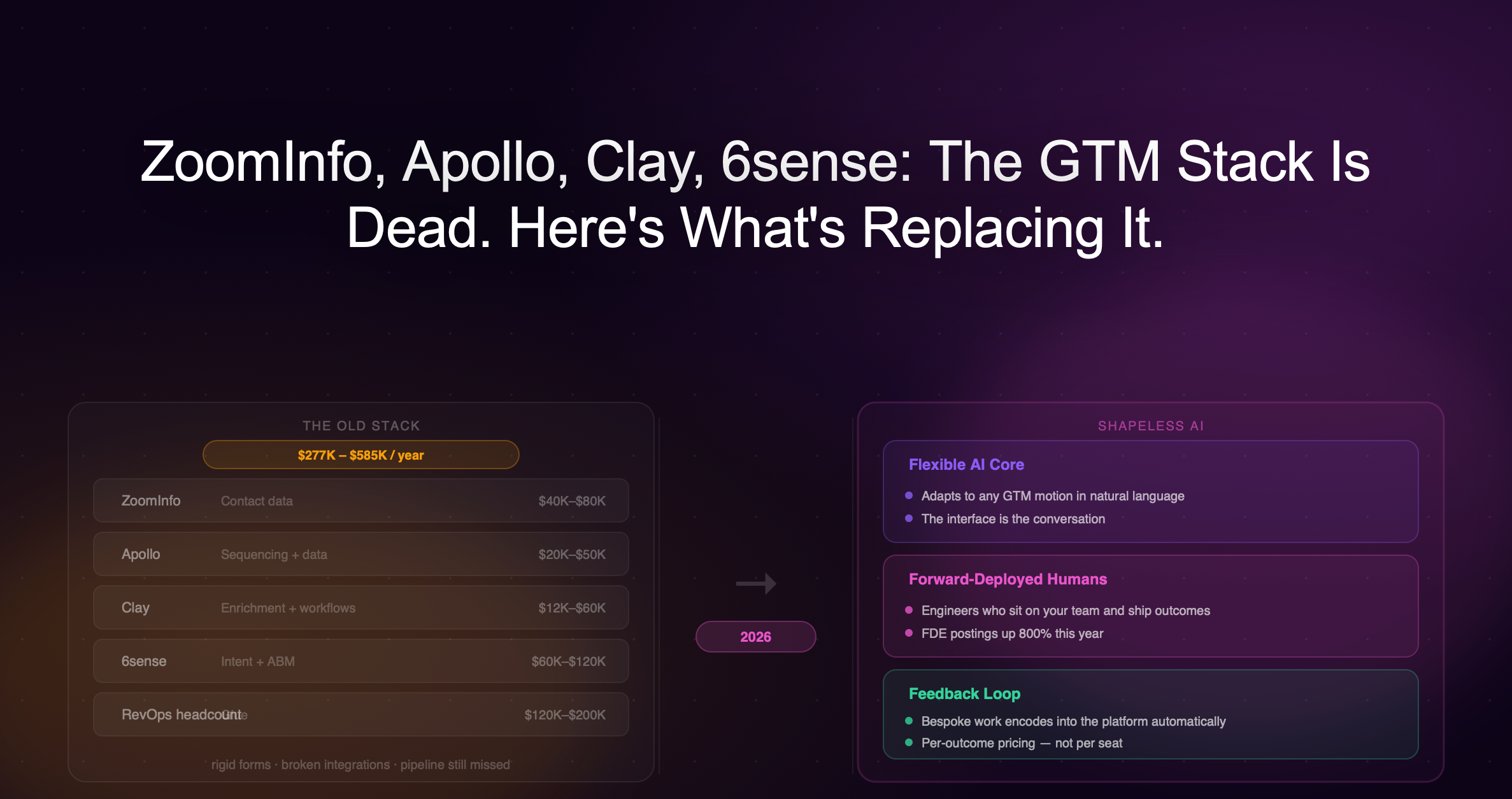

The old GTM stack was built around departments, not outcomes.

Marketing had marketing automation.

Sales had CRM and sales engagement.

RevOps had routing, enrichment, attribution, and reporting.

Customer success had support tickets, call notes, product usage, and renewal workflows.

But the customer does not experience your company in departments.

The customer experiences one journey.

They see an ad. They visit the website. They read content. They compare vendors. They talk to sales. They get nurtured.

The company split that journey into departments because humans needed boundaries to manage the work.

AI does not need the same boundaries.

Once agents can read signals, retrieve context, recommend actions, and execute workflows, the organizing principle stops being the department and starts being the outcome.

And this is the point I keep coming back to when I think about how to position Warmly (a company that serves GTM teams), myself as a leader, or myself simply as a human preparing for the future.

I like to deconstruct the state today and model where I think the future is going until it becomes obvious what is going to happen next. Then I use that reasoning to guide what we should do today.

Even if the eventual outcome or timing is incorrect, the reasoning is what I want to preserve and get better at refining. Because if you have a good model of what something is and how it behaves, then you can do a better job of using it or operating around it.

The model I keep arriving at is this:

Software is moving from rigid tools into fluid problem-solving loops.

There are four AI scaling laws that explain why this is happening:

Pre-training scaling

Post-training scaling

Test-time scaling, or “long thinking”

Agentic scaling, or “AI talking to AI”

Each one opens up a new dimension where more compute, more data, or more system design creates more capability.

Together, they explain why AI is moving from a text generator into a new operating layer for problem solving.

Pre-training scaling

Pre-training is the very expensive procedure of teaching a model general intelligence through historical next-token prediction.

The model takes in context and predicts what comes next.

One mental model is:

Pre-training = 95% of broad knowledge and compute.

Post-training = smaller but high-leverage shaping phase.

This is the original scaling law: train on massive amounts of text, code, images, video, and structured knowledge, and the model develops broad general intelligence.
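To make "take in context, predict what comes next" concrete, here is a toy sketch. It is a bigram counter, not a neural network, and real pre-training operates on tokens at vastly larger scale. The corpus and the function names `train_bigram` and `predict_next` are illustrative assumptions.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for each word, which words follow it in the corpus."""
    words = corpus.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Hypothetical training text, invented for this example.
corpus = "we need more pipeline . we need more meetings . we need more pipeline"
model = train_bigram(corpus)
next_word = predict_next(model, "more")
```

The prediction is only as good as what the model saw. A word that never appeared in the corpus returns `None`, which is a small version of the jaggedness described below.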

In pre-training, foundational labs like Anthropic and OpenAI choose domains that have strong verifiability, which means it is easy to confirm whether the answer is right or wrong given an input and output.

Coding is a good example because you can see if code compiles or works to specification.

They also choose domains they want to do well in because they believe those domains will provide the most economic impact.

They do not need AI to be good at everything.

Training is expensive, and covering too many domains leads to a heavier, more expensive, higher-latency model.

This is what leads to the jaggedness of models.

They are good at some things and not others.

If the domain you work in is operating in the circuits that are part of the foundational model’s reinforcement learning loop, your domain flourishes with AI.

If you are operating in a domain outside the data distribution, the model will not perform as well.

But we have no idea what OpenAI is training the models on.

They do not give us a manual.

We know they care about certain domains like math, science, and coding.

But for domains that have low verifiability, are less important to the foundational model labs, or are highly niche, this is where post-training comes in to round out the long tail.

Post-training scaling

Post-training is a less expensive procedure that tunes the model toward a specific task or toward how you want a job to be done.

Models get more useful after pre-training by learning from feedback, examples, preferences, synthetic data, tool traces, and real-world outcomes.

This is how a raw model can become an assistant, a coder, an analyst, a support agent, or a GTM agent.

At Warmly, we fine-tune our own models for our AI autopilot agents so they can reason through the next best GTM action, write good emails, handle objections, and hold human-like, effective conversations in the context of GTM or your organization.

To check whether your post-trained model is good, you can recreate the GTM world model as it stood at a point in time and see how many accounts the model says would convert to the next stage, given the sequence of actions taken on them and the context around those accounts, compared with what actually happened.

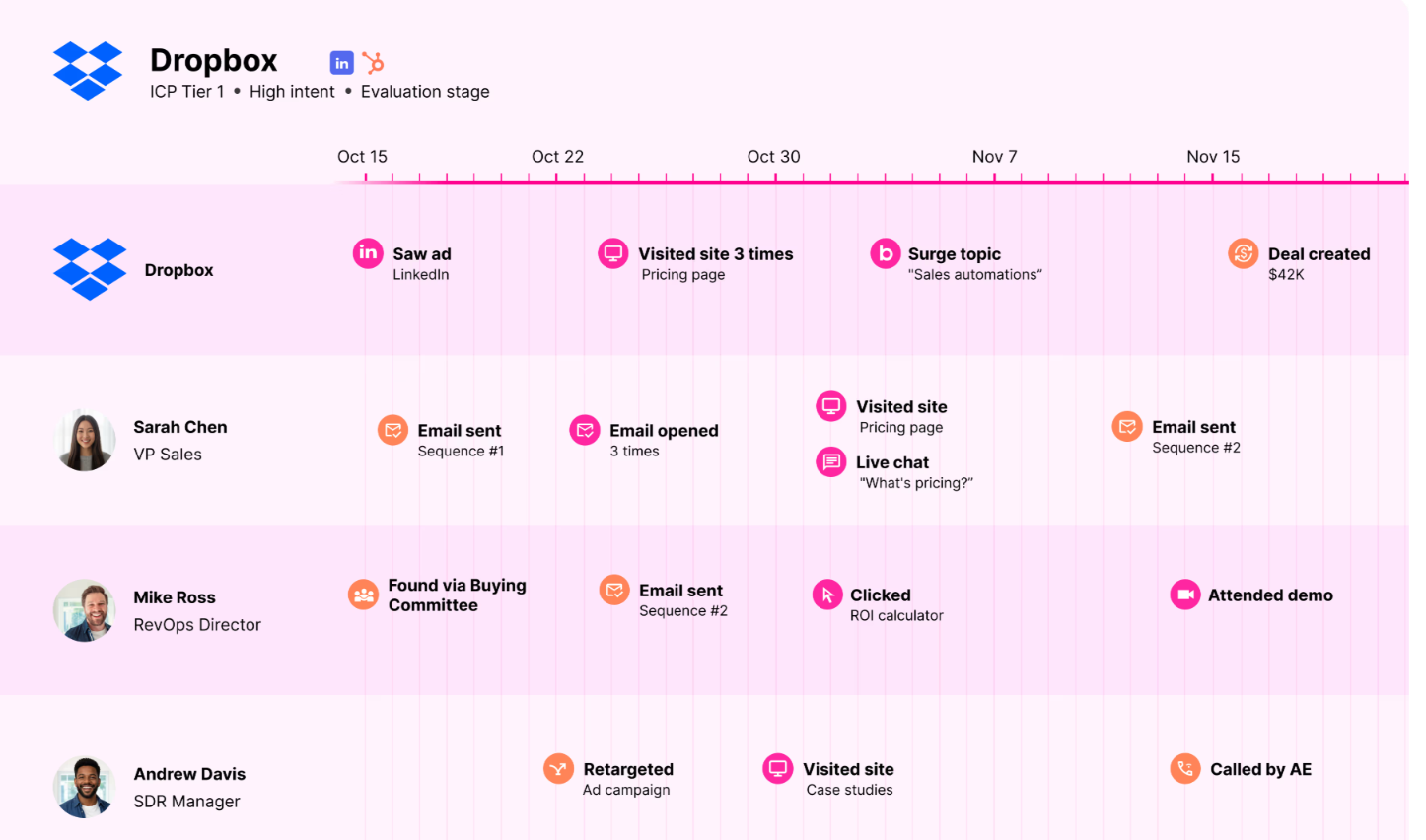

The inputs are things like: the buyer got an email, saw an ad, the company was hiring, and the account was or was not ICP. Then you see whether the model can justify the right reasoning.

Both pre-training and post-training change the model itself: you feed in input, output, verified-outcome, and feedback data, and adjust the model's weights.
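A minimal sketch of that replay check, under stated assumptions: the `AccountSnapshot` fields, the four historical rows, and `toy_model` (a hand-written rule standing in for the post-trained model) are all hypothetical. The idea is only to score predictions against what actually happened.

```python
from dataclasses import dataclass

@dataclass
class AccountSnapshot:
    got_email: bool
    saw_ad: bool
    is_hiring: bool
    is_icp: bool
    converted: bool  # ground truth: did the account reach the next stage?

def replay_accuracy(snapshots, predict):
    """Fraction of historical snapshots where the prediction matched reality."""
    if not snapshots:
        return 0.0
    hits = sum(1 for s in snapshots if predict(s) == s.converted)
    return hits / len(snapshots)

def toy_model(s):
    """Stand-in for the model under test: ICP accounts we have touched convert."""
    return s.is_icp and (s.got_email or s.saw_ad)

# Invented history, purely for illustration.
history = [
    AccountSnapshot(True, False, True, True, True),
    AccountSnapshot(False, True, False, True, True),
    AccountSnapshot(True, True, False, False, False),
    AccountSnapshot(False, False, True, True, True),
]
score = replay_accuracy(history, toy_model)
```

Here the stand-in gets three of four accounts right. The miss is the ICP account that converted on a hiring signal alone, which is the kind of gap a replay like this is meant to surface.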

The next scaling law is different.

It happens while the model is working.

Test-time scaling, or long thinking

Test-time scaling is the idea that models get better by spending more compute while solving the problem, not just during training.

Pre-training and post-training happen before the model is deployed.

The model weights are updated.

The model becomes generally smarter or more useful.

Test-time scaling happens at runtime when the model needs to do something, like figure out the next best action for an account given a new signal.

The model weights do not change.

Instead, the model is given more time, more context, more tools, more attempts, and more verification while it is working on the task.

For simple tasks, the model can answer quickly.

If you ask it to rewrite a sentence or summarize a paragraph, it does not need much thinking.

But for high-value work, the model needs to reason, retrieve, plan, compare, verify, and sometimes try multiple paths before choosing an answer.

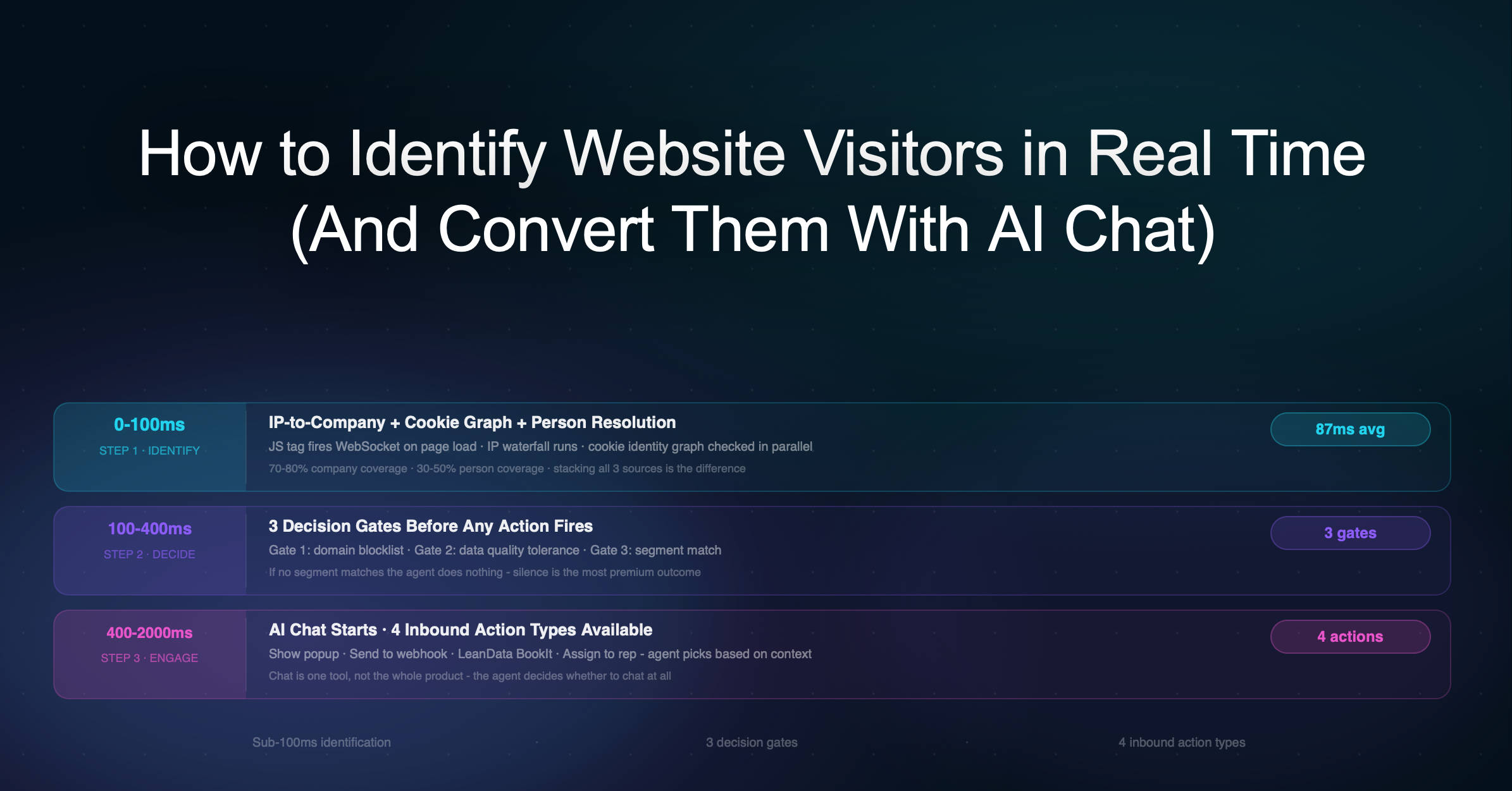

A GTM example makes this obvious.

A shallow AI system might see:

Account visited pricing page. Send email.

A test-time scaled system thinks longer:

Who is the company? Are they in our ICP? Have they talked to us before? Which pages did they visit? Which visits matter and which ones are noise? Who is on the buying committee? Should we trigger chat, notify an AE, launch outbound, suppress the account, retarget them, or wait? What message should be sent? Should the AI execute automatically or ask for human approval?
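The questions above can be sketched as a small decision function. This is a sketch under assumptions, not how any particular product works: the `Candidate` fields, the scores, and the `risk_threshold` default are hypothetical, and in a real system they would come from the model's reasoning and your business rules.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    action: str
    expected_value: float  # assumed score produced by the reasoning step
    risk: float            # 0.0 (safe) to 1.0 (risky), also assumed

def decide(candidates, is_icp, risk_threshold=0.5):
    """Return (action, mode), where mode is 'auto', 'human', or 'none'."""
    if not is_icp:
        return ("suppress", "auto")  # business rule: do not pursue non-ICP accounts
    if not candidates:
        return ("wait", "none")      # nothing worth doing yet
    best = max(candidates, key=lambda c: c.expected_value)
    mode = "auto" if best.risk <= risk_threshold else "human"
    return (best.action, mode)

options = [
    Candidate("trigger_chat", expected_value=0.4, risk=0.1),
    Candidate("notify_ae", expected_value=0.7, risk=0.8),
]
decision = decide(options, is_icp=True)
```

In this example the highest-value action is also the riskiest, so it is routed to a human rather than executed automatically.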

A model may have a million-token context window, but that does not mean you should dump a million website visits, CRM notes, emails, call transcripts, support tickets, and product events into the prompt.

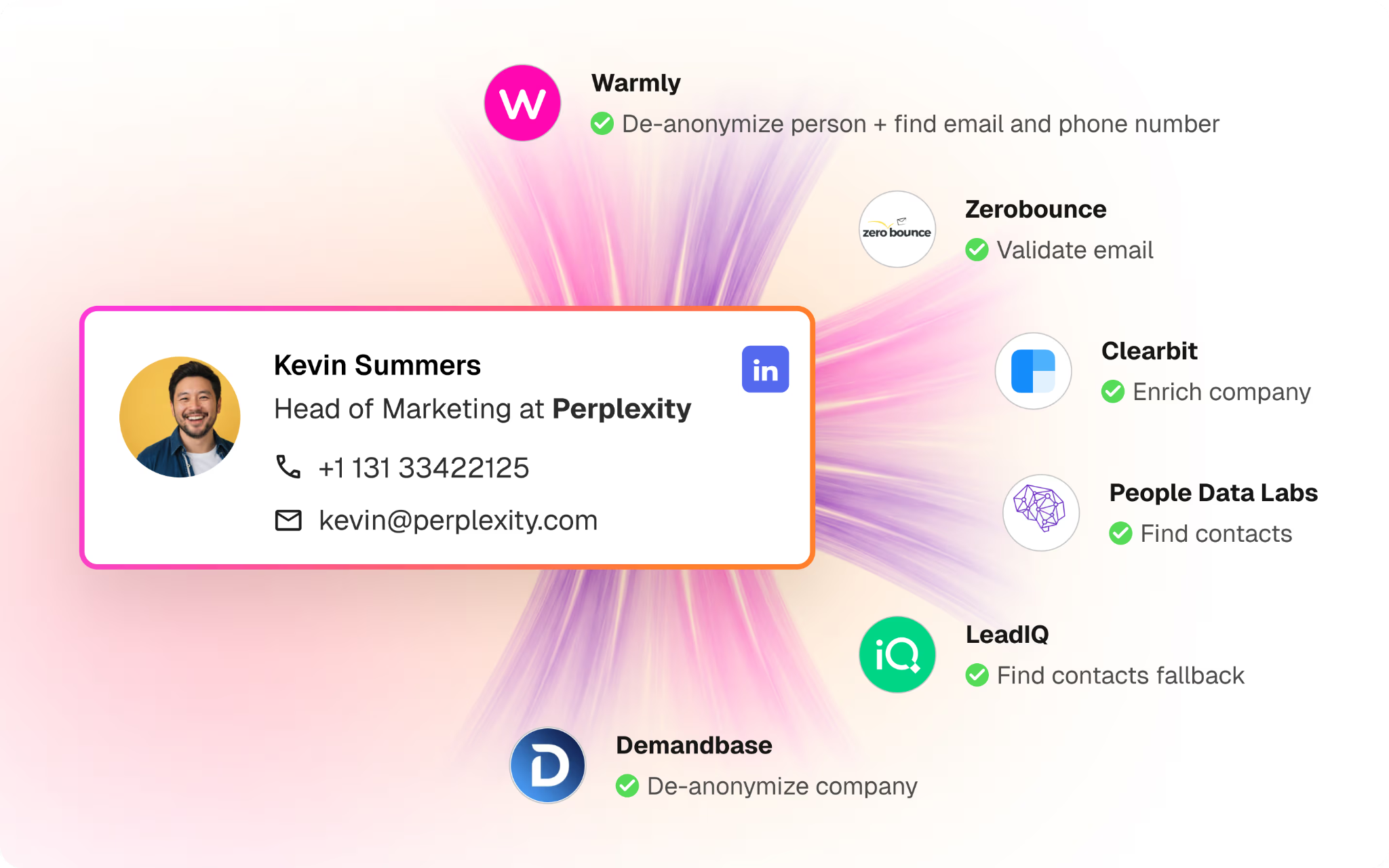

To build a full context on the account, you need a context graph layer that stores memory that is easily searchable and retrievable.

This problem of storing and retrieving full context is being worked on now. Over time it will likely be solved the way self-driving is being solved: the car will still crash sometimes, but in a city where the AI has accumulated enough data on how to navigate the roads, it will clear a safety threshold better than the average human driver.

The AI will think about what it needs to know to solve the problem, retrieve the relevant data and memories, and in the process discover new data it needs to explore to solve the problem even better through ongoing exploration.

Throwing more compute through intelligent exploration is test-time scaling.

Recursively calling the AI to spend more compute, whether to conduct outside research, connect to the CRM over MCP, review website visits, or traverse the context graph, leads to a better decision.

In GTM, the expensive mistake is not that an AI writes a bad sentence.

The expensive mistake is that it picks the wrong account, contacts the wrong person, uses the wrong context, misses the real buying signal, or automates a workflow that should have gone to a human.

Long thinking reduces these higher-order context mistakes.

Context windows are getting larger, but larger context alone does not solve the problem.

The hard part is selecting the right context at the right moment.

Test-time scaling is how the system does that.

It can search, retrieve, compress, rank, and reason over context before deciding what matters.
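One simple way to picture "rank, then keep what fits" is a greedy selection under a token budget. This is a sketch, assuming each item already carries a relevance score from some upstream scorer; the `ContextItem` type, the scores, and the token counts are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ContextItem:
    text: str
    relevance: float  # assumed to come from an upstream ranking step
    tokens: int

def select_context(items, token_budget):
    """Greedily keep the most relevant items that still fit in the budget."""
    chosen = []
    used = 0
    for item in sorted(items, key=lambda i: i.relevance, reverse=True):
        if used + item.tokens <= token_budget:
            chosen.append(item)
            used += item.tokens
    return chosen

items = [
    ContextItem("Visited pricing page twice this week", 0.9, 40),
    ContextItem("Opened newsletter eight months ago", 0.1, 30),
    ContextItem("Champion asked about SSO on last call", 0.8, 60),
    ContextItem("Careers page visit", 0.2, 20),
]
picked = select_context(items, token_budget=120)
```

The stale newsletter open is dropped because the budget is spent on stronger signals first. Greedy selection is not optimal in general, but it shows the principle: the prompt gets the best context, not all of it.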

So test-time scaling improves AI by letting the system retrieve relevant context instead of using all available context, reason through the account state, compare multiple possible actions, use tools to fill missing information, check whether the recommendation is safe, verify that the action matches business rules, and decide whether to act automatically or route to a human.

In the old world, software executed workflows humans had already defined.

In the new world, AI reasons through what workflow should happen in the first place.

Diagnose → retrieve context → plan → act → check → learn → repeat.

The better the model gets at this runtime reasoning loop, the better the decision making and action.

Synthetic data and experience data

At first, people thought AI scaled mainly through pre-training: bigger model, more human data, more compute.

Feed the model the internet, books, code, papers, videos, and structured knowledge, and it gets smarter by learning to predict what comes next.

Then the industry hit the obvious question:

What happens when we run out of high-quality human data? In 2024, Ilya Sutskever argued that we have essentially consumed the high-quality public text data on the internet available for training LLMs.

There was a panic around pre-training.

If the model has already consumed most of the useful internet, then maybe the original scaling law starts to slow down.

Maybe AI progress hits a wall. But that misunderstands what data is becoming.

That was training data created by humans. But what humans create is synthetic as well; it does not occur in nature. It also gets repackaged continuously.

The next wave of data is starting to come from synthetic data that is generated to fill the gaps in existing human data.

The powerful version of synthetic data starts with some form of ground truth, then uses AI to expand it.

For example, you can start with a verified coding problem and solution.

Then an AI can generate thousands of variations of that problem, different edge cases, different frameworks, different bugs, different constraints, and different explanations.

Another system can run the code, check the tests, reject bad examples, and keep the good ones.

Now you have created far more high-quality training data than humans could have manually written.
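The generate-then-verify pattern can be sketched in a few lines. Everything here is a stand-in: `generate_candidates` plays the role of the AI generator (a real one would call a model), and the "verified solution" is simple addition so the example stays self-contained.

```python
def reference_solution(a, b):
    """Verified ground truth: the known-correct behavior."""
    return a + b

def generate_candidates():
    """Stand-in for an AI generator: proposes solutions, some of them wrong."""
    return {
        "add": lambda a, b: a + b,
        "subtract": lambda a, b: a - b,
        "add_swapped": lambda a, b: b + a,
        "multiply": lambda a, b: a * b,
    }

def passes_tests(candidate, cases):
    """The verifier: a candidate survives only if it matches on every case."""
    return all(candidate(a, b) == reference_solution(a, b) for a, b in cases)

test_cases = [(1, 2), (0, 5), (-3, 3), (2, 2)]
kept = [name for name, fn in generate_candidates().items()
        if passes_tests(fn, test_cases)]
```

Note that `multiply` agrees with the reference on `(2, 2)` but fails elsewhere. A weak verifier with only that one case would have let it through, which is why the quality of the verifier matters as much as the volume of generation.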

The same pattern works across many domains.

In a GTM system, this can be the data produced by the revenue team, the world model at the time, and the decision traces from the agents operating inside this dynamic environment. The actions and results are fed back into pre-training and post-training to further refine future decision making. An agent can attempt a task, fail, retry, and preserve the successful path as training data.

Synthetic data is knowledge that has been compressed, structured, explained, and regenerated so another intelligence can learn from it.

AI can now do that at massive scale.

The key is verification. Bad synthetic data creates garbage. Verified synthetic data creates a flywheel.

The system can generate many possible examples, score them, filter them, and keep only the ones that are useful.

In code, the verifier is whether the tests pass.

In math, the verifier is whether the answer is correct.

In GTM, the verifier might be whether the action led to a reply, a meeting, pipeline, retention, expansion, or some other business outcome.

That means the bottleneck shifts.

The old bottleneck was:

Do we have enough human-created data?

The new bottleneck becomes:

Do we have enough compute to generate, evaluate, and train on high-quality synthetic and experiential data?

This is where post-training becomes more important.

Models can improve after pre-training by learning from feedback, examples, preferences, decision traces, tool traces, and real-world outcomes.

The model no longer only learns from static historical data.

It learns from experience.

Agentic systems make this even more powerful.

Once AI agents start doing real work, they create a new kind of data: experience data.

Every agent run can produce a trace of what the agent saw, what context it retrieved, what it reasoned through, what tool it used, what action it took, and what happened afterward.

Some of those traces will be bad and should be discarded.

Some will be mediocre and useful only as negative examples.

But some will be excellent.

Those successful traces can be turned back into training data.

So the loop becomes:

AI does work → the work creates traces → outcomes score the traces → the best traces become training data → the next model or agent gets better.
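A minimal sketch of that loop's middle steps, scoring traces by outcome and keeping the best as training examples. The `Trace` fields, the outcome labels, and the numbers in `OUTCOME_SCORE` are hypothetical; a real system would calibrate them against actual business results.

```python
from dataclasses import dataclass

@dataclass
class Trace:
    context: str   # what the agent saw and retrieved
    action: str    # what it did
    outcome: str   # what happened afterward

# Hypothetical outcome scores, chosen only for illustration.
OUTCOME_SCORE = {"meeting": 1.0, "reply": 0.6, "no_response": 0.0, "unsubscribe": -1.0}

def to_training_examples(traces, min_score=0.5):
    """Keep only traces whose outcome score clears the bar."""
    examples = []
    for t in traces:
        score = OUTCOME_SCORE.get(t.outcome, 0.0)
        if score >= min_score:
            examples.append({"prompt": t.context, "completion": t.action, "score": score})
    return examples

traces = [
    Trace("ICP account, pricing page visit", "notify AE", "meeting"),
    Trace("ICP account, blog visit", "send cold email", "no_response"),
    Trace("Non-ICP account, ad click", "send cold email", "unsubscribe"),
    Trace("ICP account, champion returned", "personalized follow-up", "reply"),
]
examples = to_training_examples(traces)
```

The discarded traces are not worthless. As noted above, some could be kept separately as negative examples; this sketch only shows the positive path.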

This is the deeper reason the scaling laws keep expanding.

Pre-training does not end because human data is finite.

It evolves into a larger system where synthetic data, feedback data, and agent-generated experience create new training material.

Then people thought inference would be cheap and easy.

But the opposite is happening.

Inference is where thinking happens.

If you want the model to solve a hard problem, you do not just ask it for a quick answer.

You let it think longer.

You let it search.

You let it reason.

You let it use tools.

You let it verify.

You give it more test-time compute.

Then agentic scaling takes this further.

Instead of making one model do everything, you create systems of agents.

One agent researches.

Another writes.

Another checks.

Another executes.

Another evaluates the result.

The AI system starts looking less like a chatbot and more like an organization.

That is the important shift.

AI is moving from a static model trained on historical data to a living problem-solving system that generates new data through its own work.

The next context window is not a window. It is a loop.

This is where the growing concept of recursive language models becomes important.

A recursive language model is not just a model with a bigger context window.

It is a model operating in a loop where its outputs become inputs for further reasoning, retrieval, memory, tool use, and action.

The old way of thinking about context was simple:

Put more tokens into the window.

The new way of thinking about context is different:

Let the model write code, search memory, call tools, spawn sub-agents, summarize findings, save notes, and retrieve only the context it needs at the moment it needs it.

That means the model is not storing all context inside itself.

It is learning how to find context.

It is learning how to write down what matters.

It is learning how to preserve state outside the context window.

It is learning how to call itself again with better information.

It is learning how to create a larger effective context window by using the world around it as memory.

This is a very important shift.

A one-million-token context window sounds big, but it is still a window.

And if you dump everything into it, most of it is irrelevant.

The better pattern is recursive.

The agent sees a problem.

It decides what it needs to know.

It writes a query.

It searches the context graph.

It retrieves the relevant memories.

It calls a tool.

It writes code to inspect a dataset.

It spawns a sub-agent to analyze one slice of the problem.

It saves the result.

It compresses what matters.

It updates memory.

Then it continues.
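Here is a toy version of that recursive pattern. It is a sketch, not a real recursive language model: `summarize` is a keyword filter standing in for a model call, `window` stands in for the context limit, and the event list is invented. The shape is what matters: split, delegate, save notes outside the window, compress.

```python
def summarize(events):
    """Stand-in for a model call: keep only high-intent events."""
    return [e for e in events if "pricing" in e or "demo" in e]

def recursive_digest(events, window, memory):
    """Digest events that do not fit in one window by splitting and recursing."""
    if len(events) <= window:
        notes = summarize(events)
        memory.extend(notes)  # preserve state outside the context window
        return notes
    mid = len(events) // 2
    left = recursive_digest(events[:mid], window, memory)   # sub-agent, first slice
    right = recursive_digest(events[mid:], window, memory)  # sub-agent, second slice
    # Assumption: the combined notes are small enough to compress in one pass.
    return summarize(left + right)

events = [
    "blog visit", "pricing page", "careers page", "demo request",
    "docs visit", "pricing page again", "blog visit", "homepage", "status page",
]
memory = []
digest = recursive_digest(events, window=4, memory=memory)
```

No single call ever looks at more than four raw events, yet the final digest reflects all nine. The effective context is larger than the window because the work was decomposed and the notes were written down.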

The context is no longer a static pile of tokens.

The context becomes a living system.

This is why recursive LLMs matter so much for enterprise AI.

They let the system compound.

Humans learn through experience, but human learning is slow to disseminate.

A human has to sleep.

A human has to take meetings.

A human has to read.

A human has to process.

A human has to care.

A human has to be willing to change how they work.

A human has to explain the lesson to another human, who also has to listen, understand, remember, and apply it.

That is a lot of friction.

Agents do not remove all friction, especially when governance and trust are required.

But once agents can perform a task reliably inside a well-governed process, the learning can propagate much faster.

One agent learns something.

The trace is saved.

The memory is updated.

The playbook improves.

The next agent starts from the improved playbook.

The system does not need to wait for an all-hands meeting, a training session, a manager one-on-one, or a human remembering to update a wiki.

The lesson can become part of the operating layer.

That is the real compounding loop:

Agent does work → work creates trace → trace becomes memory → memory improves future context → future agents perform better → more traces are created → the system compounds.

This is different from a human organization.

In a human organization, knowledge is fragmented across people.

One SDR learns an objection.

One AE learns a buying trigger.

One CSM learns a churn pattern.

One marketer learns a message that works.

Then the company has to mobilize that knowledge through meetings, enablement docs, Slack threads, training sessions, managers, and repetition.

The bottleneck is not just intelligence.

The bottleneck is dissemination.

Agents change that.

If the system has shared memory, shared governance, shared tools, and shared orchestration, then every agent can benefit from the learning of every other agent.

That is why the context graph matters.

It is not just a database.

It is the memory layer that lets the agent workforce improve together.

It is also why trust gates matter.

Because the faster learning disseminates, the more important governance becomes.

You do not want bad learning to compound.

You do not want wrong assumptions to propagate.

You do not want one hallucinated pattern to become a company-wide automation.

So the future is not just recursive agents.

It is recursive agents with governed memory.
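One way to picture governed memory is a trust gate in front of the shared playbook. This is a sketch under assumptions: the `Lesson` fields, the `min_traces` evidence bar, and the quarantine list are hypothetical design choices, not a description of a specific product.

```python
from dataclasses import dataclass, field

@dataclass
class Lesson:
    text: str
    supporting_traces: int
    approved_by_human: bool = False

@dataclass
class GovernedMemory:
    min_traces: int = 5  # hypothetical evidence bar
    playbook: list = field(default_factory=list)
    quarantine: list = field(default_factory=list)

    def propose(self, lesson):
        """Admit a lesson only with enough evidence or explicit human approval."""
        if lesson.approved_by_human or lesson.supporting_traces >= self.min_traces:
            self.playbook.append(lesson.text)
            return True
        self.quarantine.append(lesson.text)  # held back, not propagated
        return False

memory = GovernedMemory()
memory.propose(Lesson("Pricing page plus SSO question means route to AE", 12))
memory.propose(Lesson("Always discount on the first call", 1))
memory.propose(Lesson("Skip outreach during customer's fiscal close", 2, approved_by_human=True))
```

The one-off pattern lands in quarantine instead of becoming a company-wide rule, while a thinly evidenced lesson can still be promoted if a human signs off.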

That is the difference between AI sprawl and an AI operating system.

Agentic scaling, or AI multiplying itself

As of today, the context window for a single LLM is around one million tokens for Opus 4.6 and GPT 5.5.

When trying to analyze what to do about an account, it is easy to blow through the context window if you take all one million website visits from the account in the past year and feed that into the window.

Most of those visits are irrelevant.

And website visits alone are not enough.

You also need to research company news, how each member of the buying committee has interacted with you in the past, and preferences for how we handle these types of accounts.

AI agents can scale too, multiplying themselves by spawning sub-agents when they are given a task.

One agent can research.

Another can write.

Another can check the work.

Another can use tools.

Another can execute.

Another can evaluate the result.

This is the move from one AI assistant to a team of AI workers.
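The fan-out can be sketched with Python's standard thread pool. This is a minimal sketch: `sub_agent` is a placeholder that returns a string where a real sub-agent would call a model or a tool, and the account name and task names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def sub_agent(task, account):
    """Stand-in for one spawned agent; a real one would call a model or tool."""
    return f"{task}: findings for {account}"

def orchestrate(account, tasks):
    """Fan out one sub-agent per task, then gather results in task order."""
    with ThreadPoolExecutor(max_workers=max(1, len(tasks))) as pool:
        results = list(pool.map(lambda t: sub_agent(t, account), tasks))
    return dict(zip(tasks, results))

tasks = ["company_news", "buying_committee", "account_preferences"]
report = orchestrate("Acme", tasks)
```

The parent agent never holds every sub-agent's full working context. It only receives each one's findings, which is how the team as a whole can cover more ground than a single context window allows.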

These are things that were historically delegated to different teams.

RevOps helped compile the list of target accounts.

SDRs reached out to them.

Research teams compiled the account research.

A single AI can now kick off the job and spawn its own team to do that job.

This is why the future of work does not look like every person clicking through more apps.

It looks like humans defining problems, AI systems decomposing the problem, agents doing the work, and humans approving the high-context decisions that matter.

But the moment AI becomes a team of workers, the enterprise question changes.

The question is no longer only: can the AI do the work?

The question becomes: will the company let the AI act inside real systems?

The enterprise AI moat is permission

This is where the scarce asset in enterprise AI starts shifting from intelligence to permission.

For the last two years, the market competed on model quality.

Which model was smartest?

Which model was fastest?

Which model was most reliable?

But the models are now good enough that in most enterprise settings the harder question is no longer whether the model can help.

It is whether the company will let the model act inside real systems.

Permission here does not mean access controls in the abstract.

It means the right to write and merge code, touch production infrastructure, open and close tickets, change system configurations, message customers, approve workflows, and trigger downstream actions across enterprise tools.

Before that boundary, the model advises.

After it, the model operates.
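The advise/operate boundary is easy to show in code. In this sketch the `Permission` enum, the `handle` function, and the dictionary standing in for a CRM are all hypothetical; the point is that the same recommendation either changes state or does not, depending on what the agent has been granted.

```python
from enum import Enum

class Permission(Enum):
    ADVISE = 1   # may only suggest
    OPERATE = 2  # may change system state

def handle(action, permission, crm):
    """Apply the action if permitted; otherwise return it as a suggestion."""
    if permission is Permission.OPERATE:
        crm[action["account"]] = action["new_stage"]
        return "executed"
    return f"suggestion: move {action['account']} to {action['new_stage']}"

crm = {"Acme": "prospect"}
action = {"account": "Acme", "new_stage": "qualified"}
advice = handle(action, Permission.ADVISE, crm)
stage_after_advice = crm["Acme"]
result = handle(action, Permission.OPERATE, crm)
stage_after_operate = crm["Acme"]
```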

That is the real threshold.

A chatbot gives suggestions.

An agent changes the state of the business.

Once AI starts changing the state of the business, trust becomes the control point.

Trust is what converts capability into permission.

And permission is what determines which company gets to move from answering questions to operating inside the enterprise.

That is why the governance race matters so much.

The surface-level competition looks like model versus model.

The deeper competition is over who becomes trusted enough to mediate action.

This is also where the old SaaS moat changes.

Legacy enterprise software won by storing records.

The next generation may win by becoming the company an enterprise trusts enough to let inside the workflows where important actions actually happen.

That is a different kind of power.

It is not just knowing what the business did after the fact.

It is being present at the moment the business decides, approves, edits, routes, and acts.

Every major platform has tried to close this loop in some form.

A company introduces a capability enterprises adopt at scale.

That capability creates new governance, security, and operational burdens.

The same company is then best positioned to sell the layer that manages those burdens because it has the deepest integration, the best telemetry, and the most complete view of how the system behaves in practice.

The more enterprises rely on the capability, the more they need governance.

The more they rely on the governance, the harder it is to replace the capability.

Each side of the relationship reinforces the other.

What makes AI different is that every prior loop was sequential.

This one compounds.

AWS did not get smarter the longer you ran workloads on it.

Microsoft’s identity system did not become more useful the more employees logged in.

Those products were valuable for what they did, not for what they learned about you.

Frontier AI is different.

The more it operates inside a specific organization, the more it understands how that organization actually works.

Switching eventually means rebuilding that context from scratch.

That lock-in dynamic is new.

And it is what makes the permissions question matter so much more than it might initially appear.

This connects directly to why context graphs matter.

If AI is going to operate, it needs memory.

It needs to know what happened before, what worked, what failed, who approved what, which accounts matter, which workflows are safe, which actions require a human, and what outcomes came from each decision.

That memory becomes more than data.

It becomes organizational know-how.

And the company that owns the trusted layer where that know-how compounds becomes very hard to replace.

So the next great moat in enterprise AI may not be intelligence alone.

It may be trust.

Trust plus context.

Trust plus governance.

Trust plus permission.

Trust plus the memory of how work actually gets done.

But there is a second problem.

Even if the model is smart enough and the enterprise is willing to grant permission, most companies still fail to make AI work.

And that is where the next bottleneck appears.

The model is not the bottleneck. The operating layer is.

If AI is so great, why is it not working?

Across enterprise AI deployments, the pattern is becoming obvious.

Companies spend millions trying to bring AI into their business, whether in the form of new software licenses that promise to take work off their plate, or straight to the model providers in token spend.

Leadership buzzes non-stop about going AI-first.

Yet when asked point-blank what has changed in the day-to-day, the answer is some version of nothing.

The AP team is still doing AP the same way.

Month-end close is still 22 days.

Reps are still hitting quota at 24%.

The CRM still has the same 30% data decay that it had in 2022.

The models are good enough.

Stop blaming the models.

With every model generation, the enterprise failure rate stays the same.

Models keep improving, but failure rates have not come down.

It turns out the model is not the bottleneck.

The bottleneck is the operating layer underneath the model.

AI is working for one group of people right now, at scale, because it is the group of people that relies the least on business logic.

Software engineers.

Engineering work has four properties that basically no other enterprise function has.

It is bounded.

A function takes inputs and returns outputs.

The scope of “fix this bug” lives inside a file or a module.

The dependencies are explicit and importable.

It is checkable.

Compilers tell you in milliseconds whether the code parses.

Tests tell you whether it works.

Type systems catch entire classes of error before runtime.

Feedback loop: seconds.

The substrate is structured.

Code lives in files, in version control, with a deterministic build pipeline underneath.

Same input, same output.

You can replay any state.

The output is verifiable.

A pull request is a discrete artifact.

A reviewer can look at the diff in 10 minutes and say yes or no.

When you point a capable AI at work that is bounded, checkable, structured, and verifiable, the leverage is enormous.

Cursor and Claude Code are the proof.

But contrast software engineering with a finance close.

Finance involves AP, AR, intercompany reconciliations, FX, accruals, journal entries, and exception handling that spans NetSuite, Concur, three banks, two ERPs from acquisitions, a custom intake form, and a Slack channel where the controller flags weird stuff she sees.

The process is documented in an SOP that does not match what actually happens.

The output is “the close was clean,” which takes two senior accountants two days to verify.

Sales ops involves a CRM, an outbound tool, a calendar, a notes platform, an enrichment vendor, an attribution tool, and a Slack channel where the AE is asking the CRO whether to discount this deal.

None of those systems share state cleanly.

The process for qualifying a lead is different across reps, even on the same team.

This is what every ops function looks like in every company.

None of it is bounded, checkable, structured, or verifiable the way code is.

Trying to apply generic AI to functions that are this specific to your company and its processes is a fool’s errand.

Pointing an LLM at this work gives you negative ROI.

The operator was doing the work in 30 minutes.

Now they are doing the work in 30 minutes plus another 30 minutes correcting the AI’s mistakes.

This is why most AI pilots fail.

They skip the audit.

They start building before they understand the workflow they are supposedly automating.

Every company is so unique that simply duplicating an agent that worked for one company onto another is not going to work.

The actual workflow always includes things the SOP does not mention.

The “I always check this spreadsheet first” step.

The “I email Sarah directly because the system notification does not work” step.

The 17 exception types the team handles every month.

The unwritten rule that anything over $5M loops in the controller, even though the threshold says $10M.

When you build for the documented process, you automate 70% of the volume and break on 30%.

The 30% that breaks creates more work for the team than they had before, because now they have to fix the AI’s mistakes on top of doing the work.

The audit is the part where you sit with the people doing the work, watch them do it, and map what actually happens.

Failed pilots also throw everything at the LLM and expect it to work.

LLMs are seductive.

Once you have one, every problem looks LLM-shaped.

Need to extract a value from a document?

Ask the model.

Compare two values?

Ask the model.

Route a result based on a number?

Ask the model.

The team builds an architecture that is 90% LLM calls and 10% code.

The system is slow and expensive, while simultaneously hallucinating in ways that are fine for a chat interface and unacceptable for production workflows.

Production systems that actually work look almost boring.

They are mostly code with a few model calls where judgment is actually required.

The LLM goes where judgment lives.

The rest is database queries, comparisons, deterministic logic, and branch routing.
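A sketch of what "mostly code, a model call where judgment lives" can look like for invoice routing. The queue names are hypothetical, `needs_judgment_llm` is a keyword stub standing in for the one real model call, and the threshold default reuses the $5M unwritten rule from the earlier example.

```python
def needs_judgment_llm(note):
    """Placeholder for the single model call; here a trivial keyword stub."""
    return "escalate" if "dispute" in note.lower() else "approve"

def route_invoice(amount, po_amount, note, controller_threshold=5_000_000):
    """Route an invoice with deterministic checks first, model last."""
    # Plain comparisons: no model needed.
    if amount > controller_threshold:
        return "controller_review"
    if amount != po_amount:
        return "mismatch_queue"
    if not note.strip():
        return "approve"
    # Only invoices with free-text notes reach the model.
    return needs_judgment_llm(note)

routes = [
    route_invoice(6_000_000, 6_000_000, ""),
    route_invoice(100, 120, ""),
    route_invoice(100, 100, ""),
    route_invoice(100, 100, "Vendor dispute pending"),
]
```

Three of the four invoices never touch the model. They are cheaper, faster, and cannot hallucinate, and the model's attention is reserved for the free-text case where interpretation is actually required.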

This is the lesson.

AI does not remove the need for systems design.

It increases the importance of systems design.

Then comes agent sprawl.

Every individual employee with AI access turns into their own agent factory.

Sarah in AP builds her own agent to classify invoices.

The controller spins up a separate one to reconcile intercompany transfers.

The FP&A lead vibe-codes a workflow to pull variance reports.

The CRO’s chief of staff has a personal agent that summarizes Salesforce notes before every QBR.

The marketing manager built a content agent.

The recruiting coordinator built a candidate-screening agent.

Multiply that across a 200-person operations org and you end up with 50 to 100 separate AI workflows running across the business.

Each one has its own ingestion pipeline, approval logic, logging, model config, and prompts.

There is no common agent spine.

No shared memory.

No shared knowledge of how the company actually runs.

Marketing’s content agent has zero awareness that customer support is currently dealing with 50 tickets about the exact thing it is writing copy about.

Finance’s invoice agent has no idea that procurement just blacklisted that vendor last week.

The fix has to be architectural and it has to be planned from day one.

You need a single orchestration layer that sits on top of the existing software stack, with shared infrastructure for ingestion, approvals, audit logging, model routing, and knowledge.

Every new use case from any person or any process lands as configuration on top of that single platform.
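"Lands as configuration" can be sketched as a small registry where approvals and audit logging live in the platform, not in each agent. The `Platform` class, its method names, and the two example agents are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Platform:
    audit_log: list = field(default_factory=list)
    agents: dict = field(default_factory=dict)

    def register(self, name, handler, requires_approval=False):
        """A new use case is just an entry in the registry."""
        self.agents[name] = {"handler": handler, "requires_approval": requires_approval}

    def run(self, name, payload, approved=False):
        """Shared approval and audit logic, applied to every agent."""
        cfg = self.agents[name]
        if cfg["requires_approval"] and not approved:
            self.audit_log.append((name, "blocked"))
            return None
        result = cfg["handler"](payload)
        self.audit_log.append((name, "ran"))
        return result

platform = Platform()
platform.register("classify_invoice", lambda p: "utilities" if "power" in p else "other")
platform.register("email_customer", lambda p: f"sent: {p}", requires_approval=True)

label = platform.run("classify_invoice", "city power bill")
blocked = platform.run("email_customer", "renewal reminder")
sent = platform.run("email_customer", "renewal reminder", approved=True)
```

Neither agent implements its own logging or approval flow, so the second agent is cheaper to add than the first, and every run is visible in one place.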

No more bespoke vibe-coded side projects that nobody else in the company even knows exist.

Once you have the platform, the economics compound dramatically.

The first agent on the platform takes 12 weeks.

The next takes 9.

The third takes 4.

Without the platform, every single agent costs roughly the same to build, and the integration debt eventually consumes the entire AI budget.
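The arithmetic behind that claim, using the build times quoted above. The only assumption added here is that, without a platform, each agent takes the same 12 weeks as the first.

```python
with_platform = [12, 9, 4]    # weeks per agent, from the figures above
without_platform = [12] * 3   # assumption: every agent costs the same as the first

total_with = sum(with_platform)
total_without = sum(without_platform)
weeks_saved = total_without - total_with
```

Three agents take 25 weeks with the platform versus 36 without, and the gap widens with each additional agent if later builds keep getting cheaper.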

This is also why companies fail when they treat AI as a side project instead of infrastructure.

Most companies budget AI initiatives like traditional software:

Plan.

Build.

Ship.

Declare victory.

Move on.

That logic works for traditional software because once you build it, it stays built.

AI is the opposite.

Every quarter, something underneath you shifts.

A new model is dramatically better at your specific workload.

Or worse, the model you depended on quietly degrades.

Pricing changes.

Rate limits change.

Capabilities change.

Your workflow changes.

Your business changes.

The deployments that actually pay off treat AI as continuously evolving infrastructure with a dedicated team that owns ongoing optimization.

They monitor quality, swap models when better ones ship, retire agents that have stopped earning their keep, and keep tuning.

This is the practical version of the same loop:

Audit → decompose → orchestrate → route models → monitor → tune → retire → improve.

The “models got smart” chapter is over.

The next decade belongs to the companies that build the operational layer underneath the models, as opposed to the ones who spend another five years pouring frontier AI onto a mess of systems and wondering why nothing has actually changed.

This points to the real future:

The winning AI companies will not just have the smartest model.

They will have the trusted operating layer where AI can understand the business, take action, follow governance, learn from outcomes, and compound organizational memory over time.

That is the future Warmly is building toward in GTM.

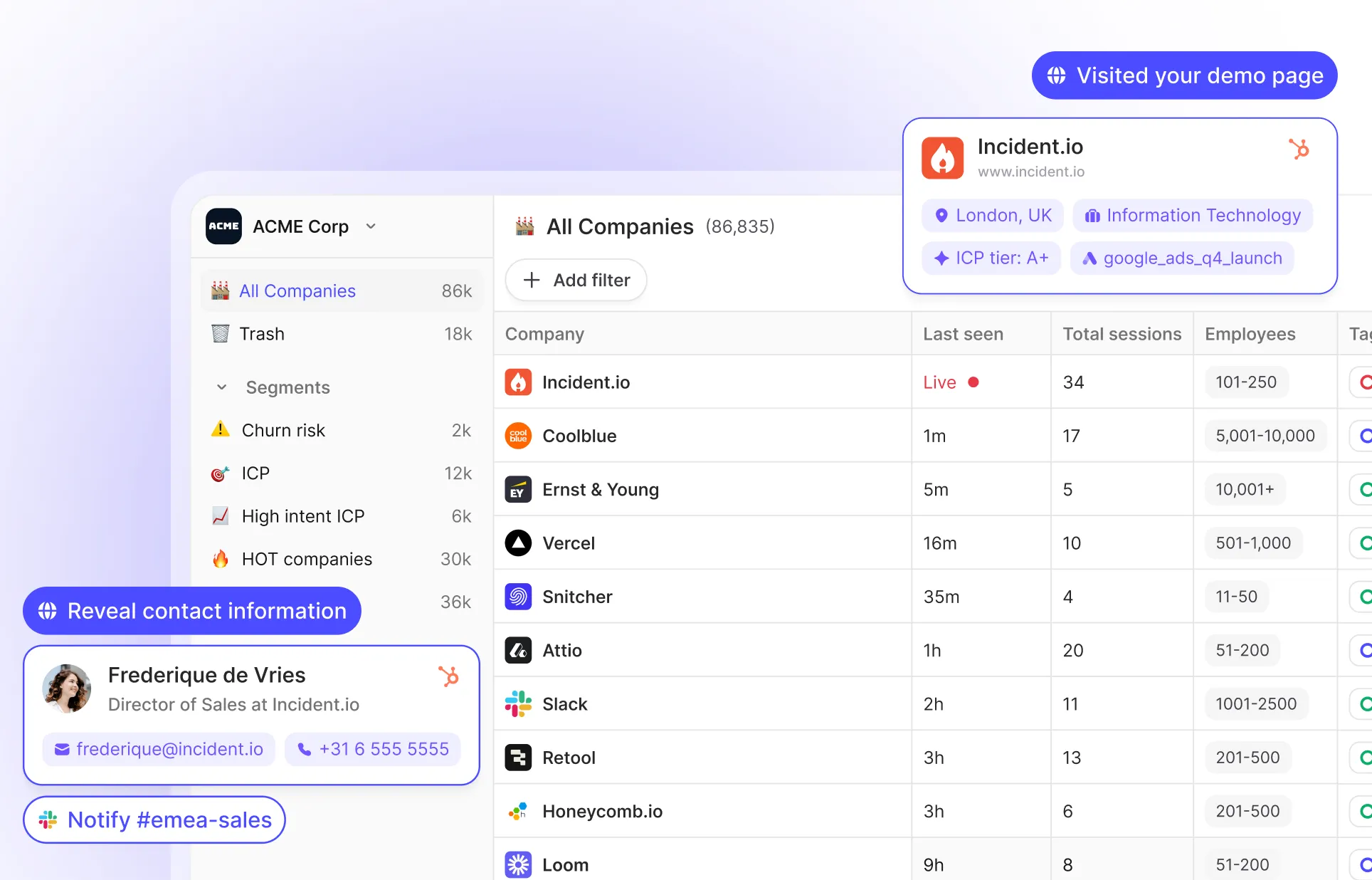

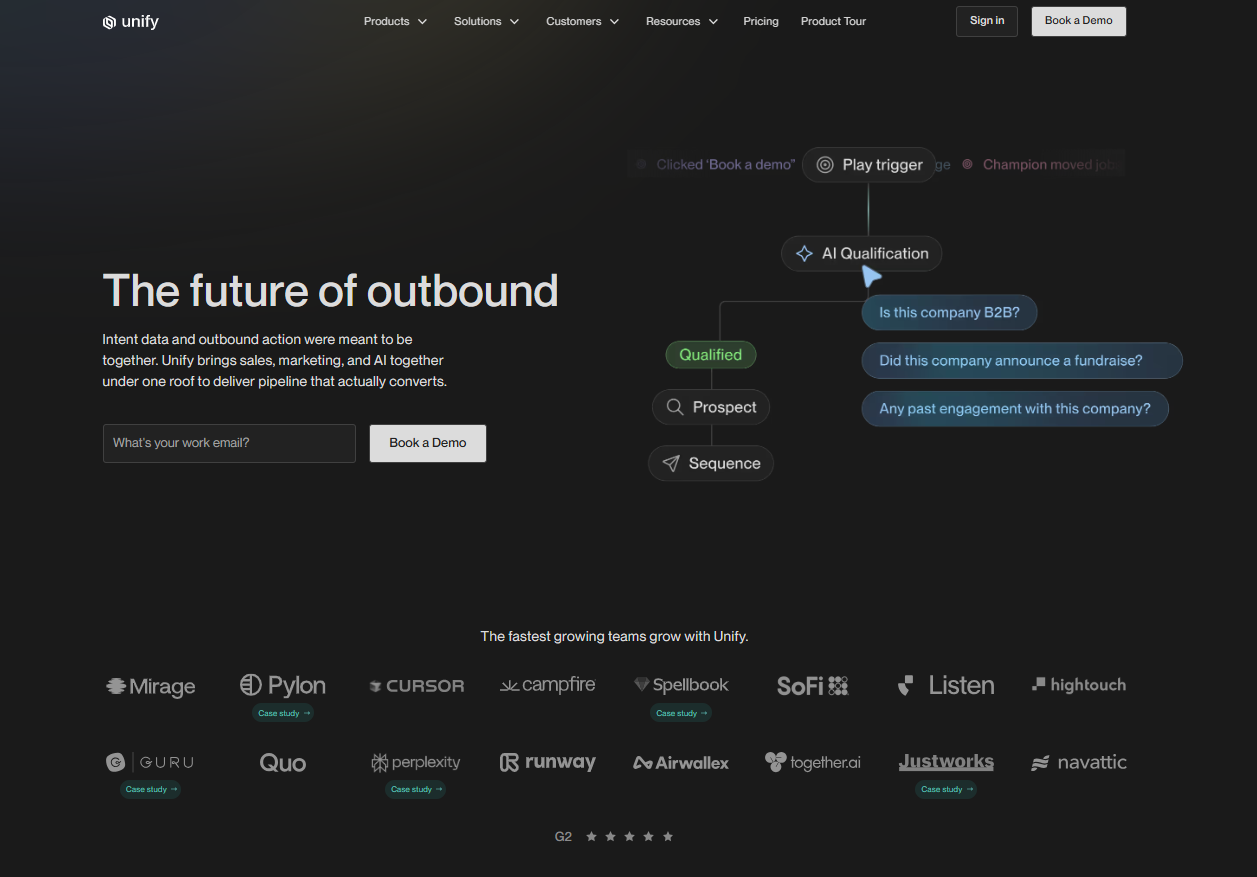

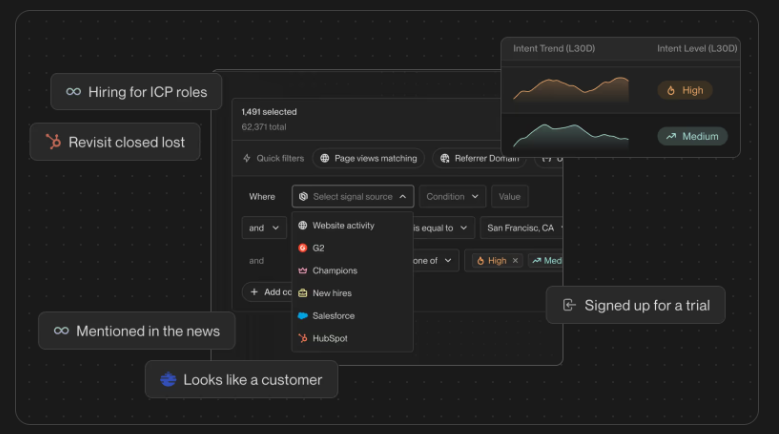

The platform shift happens when signal turns into action

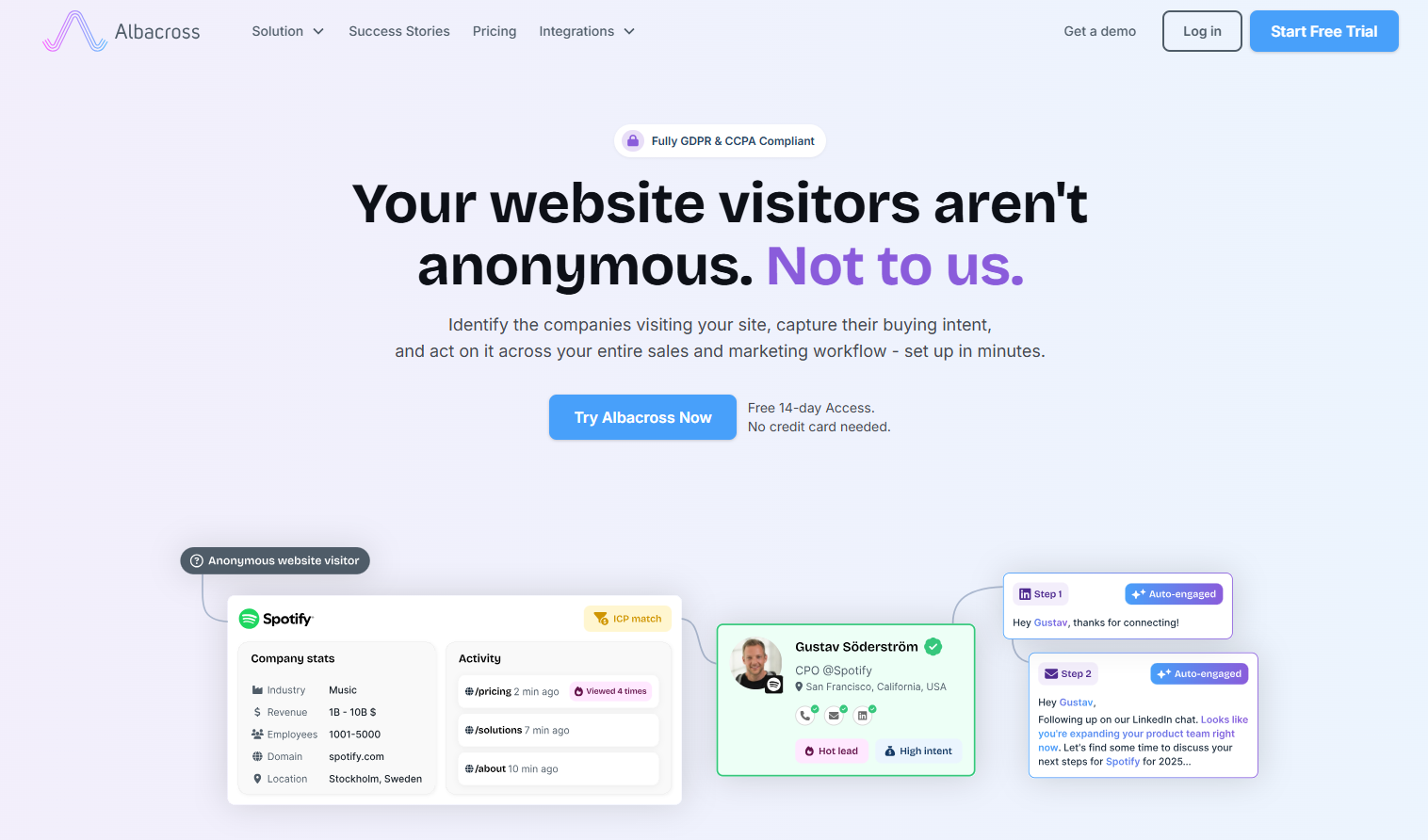

In the old world, software mostly stored signals.

A website visit was a signal.

An email open was a signal.

An ad click was a signal.

A form fill was a signal.

A CRM note was a signal.

A sales call was a signal.

Product usage was a signal.

But a human still had to interpret the signal and decide what to do.

That is why the stack fragmented.

One tool captured the website visit.

Another enriched the account.

Another scored the lead.

Another routed it.

Another sequenced it.

Another booked the meeting.

Another tracked the opportunity.

Another reported attribution.

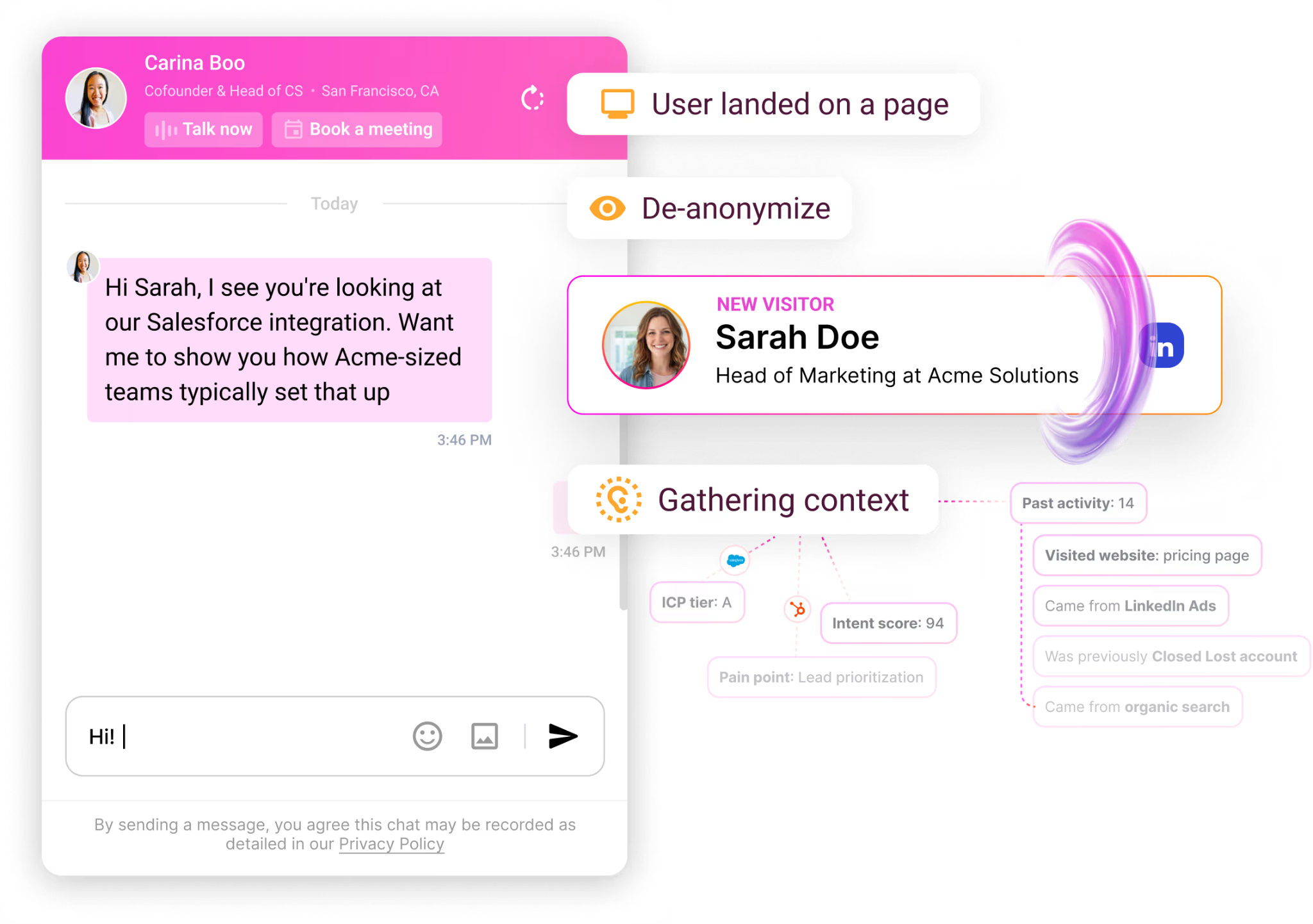

AI collapses that chain because the system can move from signal to decision to action.

That is the moment marketing automation becomes revenue orchestration.

The platform that owns the signal layer does not stop at reporting what happened.

It starts deciding what should happen next.
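A minimal sketch of that collapse, from signal to decision to action in one pass. The `Signal` fields, the decision rules, and the action strings are placeholders I made up for illustration. They are not a real scoring model or a real CRM integration.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    account: str
    kind: str      # e.g. "pricing_page_visit", "email_open"
    in_icp: bool   # whether the account fits the ideal customer profile


def decide(signal: Signal) -> str:
    """Turn a signal into a decision. The rules are placeholders, not a real scoring model."""
    if not signal.in_icp:
        return "ignore"
    if signal.kind == "pricing_page_visit":
        return "route_to_sales"
    return "nurture"


def act(signal: Signal, decision: str) -> str:
    """Execute the decision. A real system would call a CRM or messaging tool here."""
    actions = {
        "route_to_sales": f"alert account owner about {signal.account}",
        "nurture": f"add {signal.account} to nurture sequence",
        "ignore": f"log {signal.account} and take no action",
    }
    return actions[decision]


def handle(signal: Signal) -> str:
    # One pass from signal to decision to action, with no human hand-off in between.
    return act(signal, decide(signal))


print(handle(Signal(account="Acme", kind="pricing_page_visit", in_icp=True)))
```

In the fragmented stack, each of those functions was a separate tool with a human in between.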

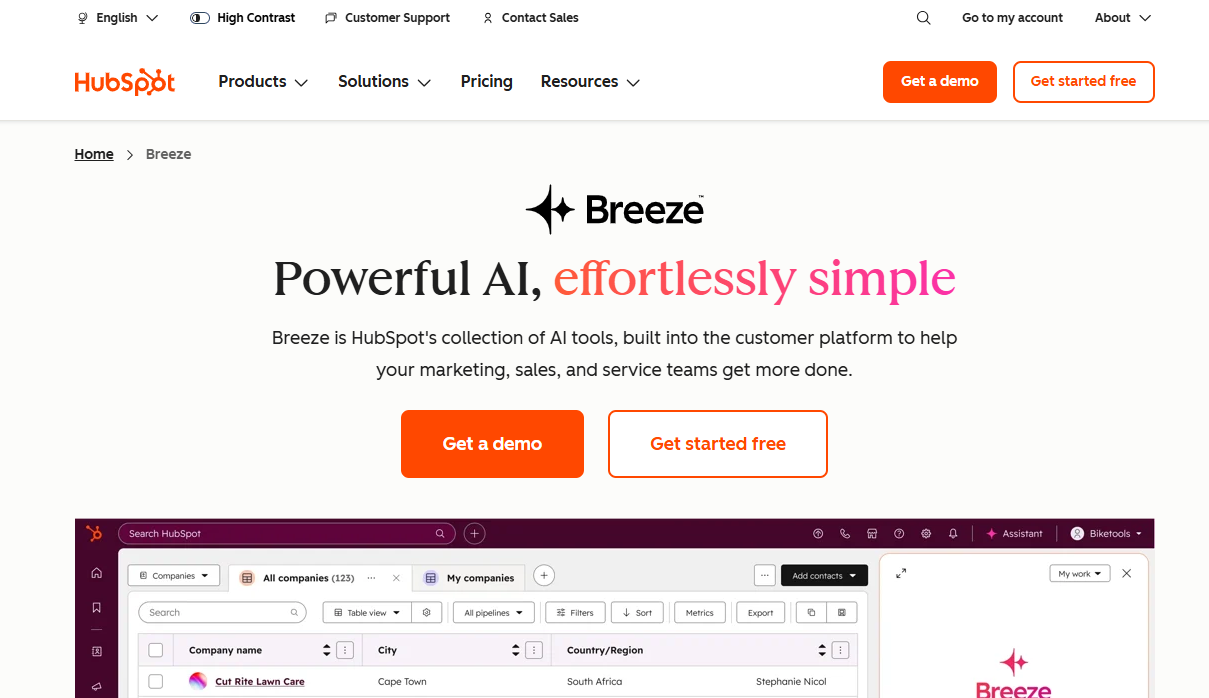

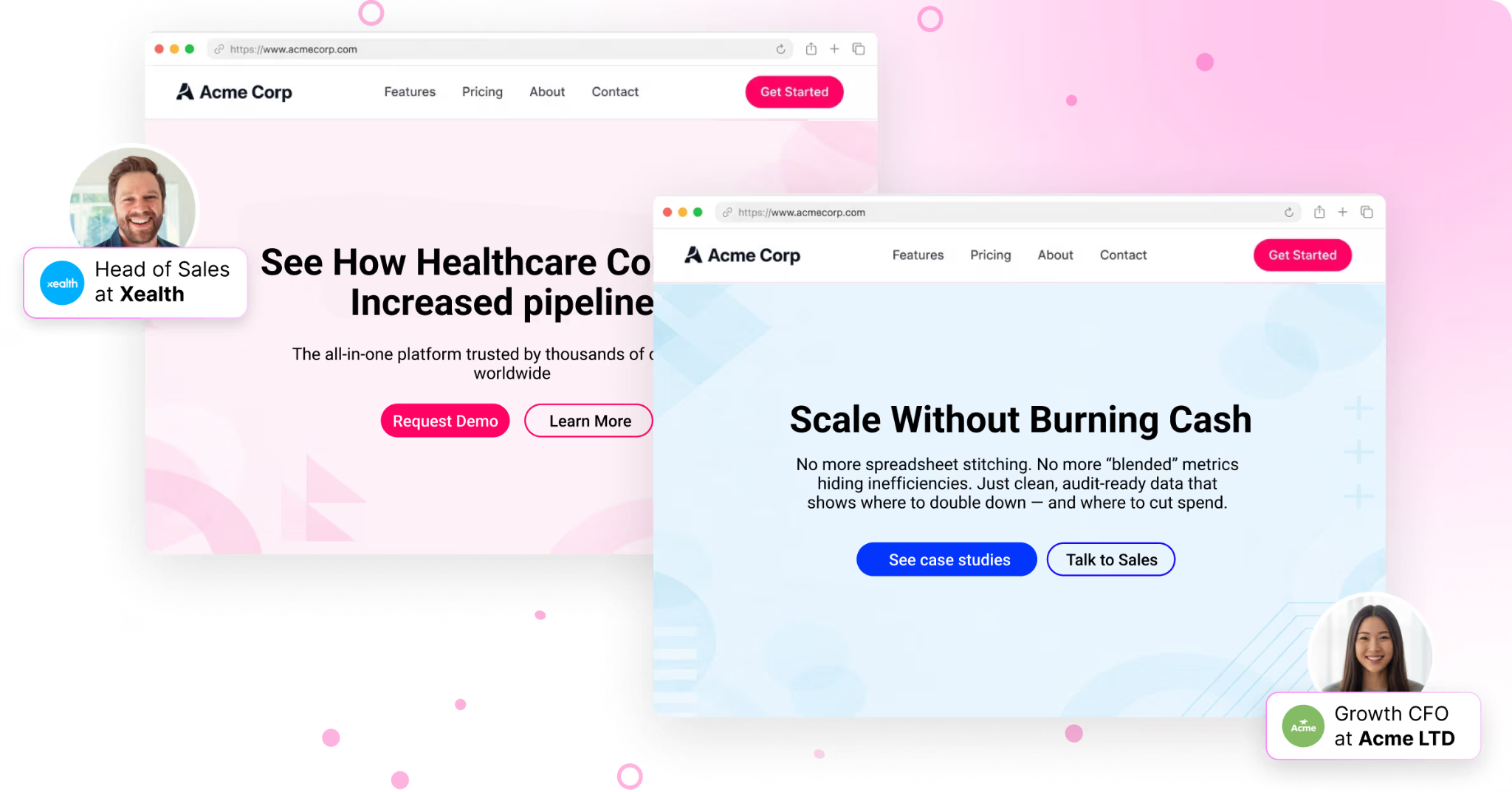

Sales and marketing collapse into one agentic revenue system

If all of this is true, then one inevitable conclusion is that sales and marketing will collapse into each other wherever agents can do the work.

Not because sales stops mattering.

Not because marketing stops mattering.

But because the old separation between sales and marketing was a separation created by human bottlenecks.

Marketing was scaled persuasion.

Sales was human persuasion.

Marketing created demand at scale.

Sales converted demand one conversation at a time.

That made sense when every step required a human to read, research, write, route, follow up, personalize, qualify, demo, negotiate, and remember what worked.

But AI changes the cost structure of action.

Agents can research accounts.

Agents can write messaging.

Agents can qualify inbound.

Agents can route accounts.

Agents can recommend next best actions.

Agents can trigger follow-up.

Agents can personalize landing pages.

Agents can give demos for lower-ACV products.

Agents can send credit card links.

Agents can monitor intent.

Agents can summarize sales calls.

Agents can turn those calls into training data.

Agents can learn which messages, offers, channels, and buyer journeys convert.

So the revenue org starts to look less like a set of departments and more like a learning system.

Marketing sits at the top of that system because marketing owns the largest surface area of demand.

Marketing sees the earliest signals.

Website visits.

Ad engagement.

Email engagement.

Content engagement.

Intent data.

Anonymous traffic.

Return visits.

ICP fit.

Buying committee movement.

Messaging conversion.

Creative conversion.

Offer conversion.

The further up the funnel you go, the more data you have.

That makes marketing incredibly important in an agentic revenue system.

Marketing will not just run campaigns.

Marketing will govern the agent fleet that turns market signals into pipeline.

Marketing will be in charge of generating as much pipeline as possible, using agents that learn inside the organization and improve through experience.

Marketing will own the reinforcement loops around messaging, creative, intent, conversion, routing, nurture, and pipeline creation.

And because those loops can be tied to closed-won revenue, marketing becomes the function that teaches the system what actually converts people to buy.

This is why marketing becomes more strategic, not less.

The creativity of humans redirects the AI agent fleet.

That creativity has to come from deep domain expertise.

Who do we sell to?

What do they care about?

What pain is becoming urgent?

What market shift creates a new wedge?

What offer makes the buyer move now?

What message feels alive instead of generic AI slop?

What buying experience would make this feel like a layup for sales?

That is marketing in the agentic world.

It is not just brand.

It is not just demand gen.

It is not just lifecycle.

It is the operating system for scaled revenue learning.

Sales also changes.

Salespeople will not necessarily be traditional salespeople.

The best ones will look more like consultative FDEs for revenue outcomes.

They will help enterprises deploy the system, build trust, navigate internal politics, connect the software to the customer’s real operating model, and make sure the customer actually achieves results.

In the old world, a salesperson could sell software and leave the hard work of value realization to onboarding, services, or the customer.

In the new world, that will not be enough.

Future buyers do not want more vendor lock-in.

They are building their own AI systems internally.

They need those systems to generalize across their organization.

The force is too strong to ignore.

Every company is going to try to build its own internal AI operating system because every company wants its own agents, its own memory, its own workflows, its own governance, and its own compounding learning loop.

That means vendors cannot just sell vaporware into an enterprise sales motion.

They have to deliver outcomes.

They have to build trust through relationships and deployments.

They have to help the buyer move from buying software to building an agentic operating model.

This is why the future enterprise sales motion looks less like pitching features and more like field deployment.

The salesperson becomes part consultant, part strategist, part implementation partner, part trust builder, part systems thinker.

They need to understand the customer’s business deeply enough to help them rewire how work gets done.

This is where the revenue leader changes too.

The future revenue leader is deeply domain-specific, but also able to harness the power of agents.

They know the customer.

They know the market.

They know the product.

They know the sales motion.

They know the constraints of the organization.

And they know how to direct the agent fleet toward outcomes.

Their job is not to manage sales and marketing as separate functions.

Their job is to operate a revenue learning system.

That system powers every individual through the collective learning of every sales call, website visit, email reply, ad conversion, creative test, demo, objection, and closed-won deal.

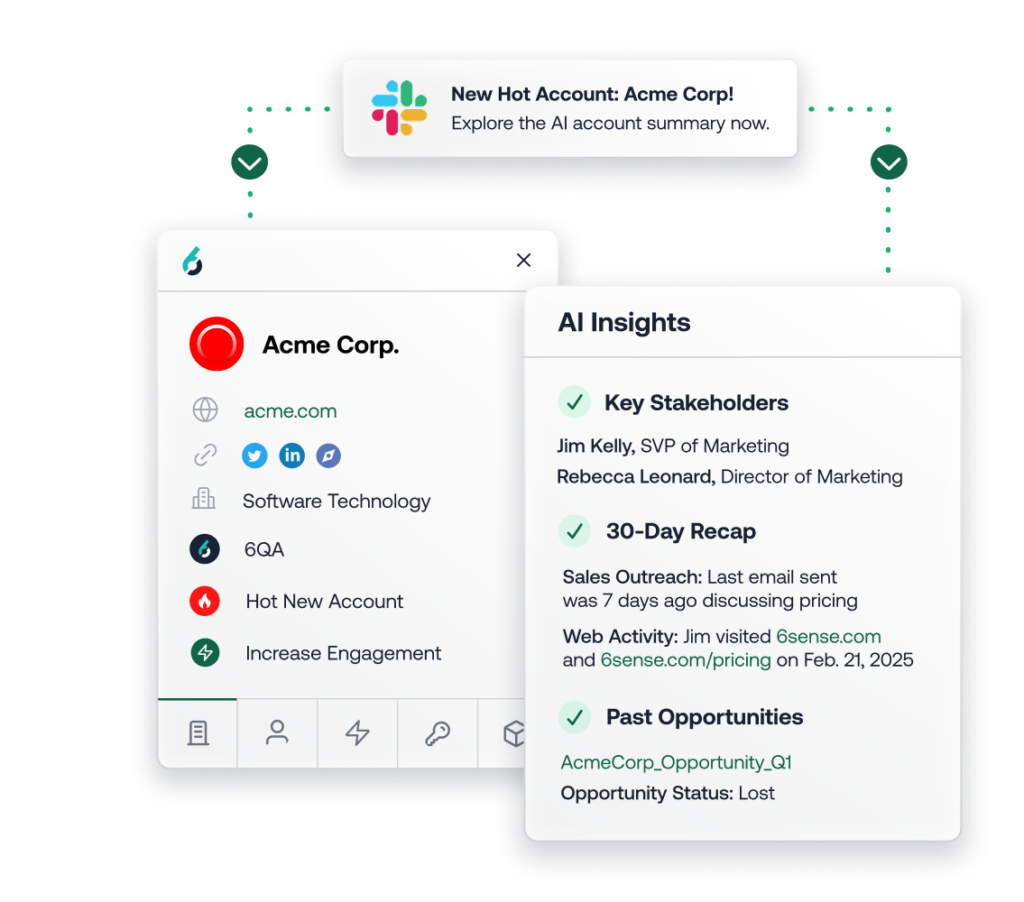

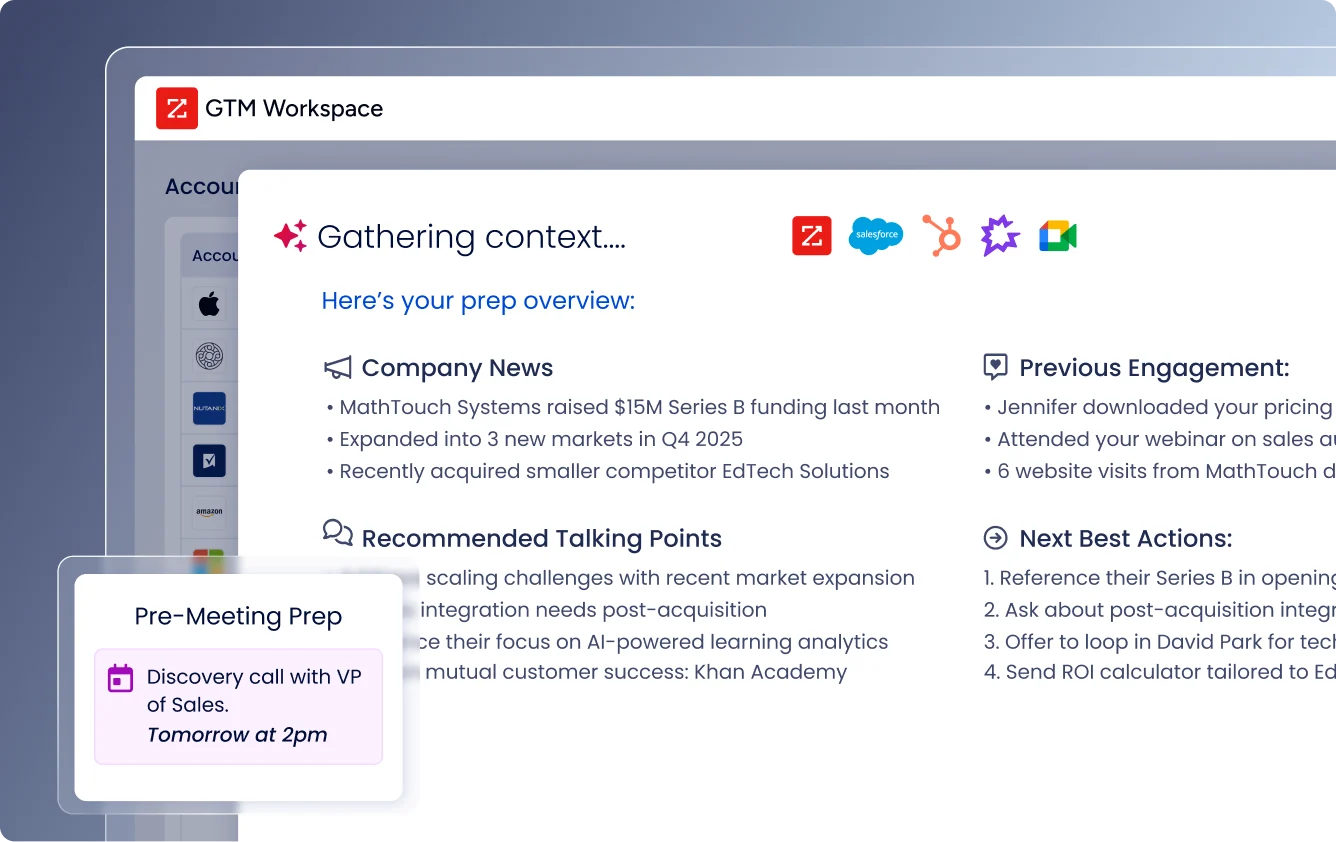

Every salesperson will have their own Jarvis.

A copilot that gives them an edge on every deal.

What does this account care about?

Who is really in the buying committee?

What changed since the last touch?

What objections came up last time?

What similar companies converted?

What message should we use?

What should we not say?

What is the next best action?

What is the risk in this deal?

What internal champion needs help?

What executive relationship matters?

But that Jarvis is not separate from marketing.

It is powered by the same hive-minded brain that marketing uses to understand the market, generate pipeline, test messaging, learn from conversion, and build the buying experience.

Marketing sets the conditions that make sales easier.

The research.

The offer.

The compelling message.

The account context.

The intent signal.

The personalized experience.

The routed meeting.

The right follow-up.

The story that makes the buyer care.

The goal is to make the sales conversation feel like a layup.

That does not eliminate sales.

It elevates sales into the moments where human trust, judgment, creativity, and negotiation still matter most.

Enterprise sales is exactly where humans remain most important because the environment is not fully observable or repeatable.

The deal is political.

The buyer is emotional.

The timing is uncertain.

The internal dynamics are hidden.

The value case is specific.

The trust is human.

The reinforcement loop is weak.

But even enterprise sellers will use the hive-minded brain.

They will try things.

Those attempts will create data.

The system will observe what happened.

The best traces will become better training data.

And the next seller will start from a better version of the system.

This is the collapse.

Sales and marketing do not disappear.

They converge into an agentic revenue system where marketing owns the signal layer, agents execute the scalable work, sales handles the highest-trust moments, and the entire system learns from every outcome.

That is the answer to the ActiveCampaign question.

Warmly is not “too salesy” for a marketing platform.

Warmly is what a marketing platform becomes when AI collapses the boundary between signal, decision, and action.

Why marketing becomes the operating system for revenue

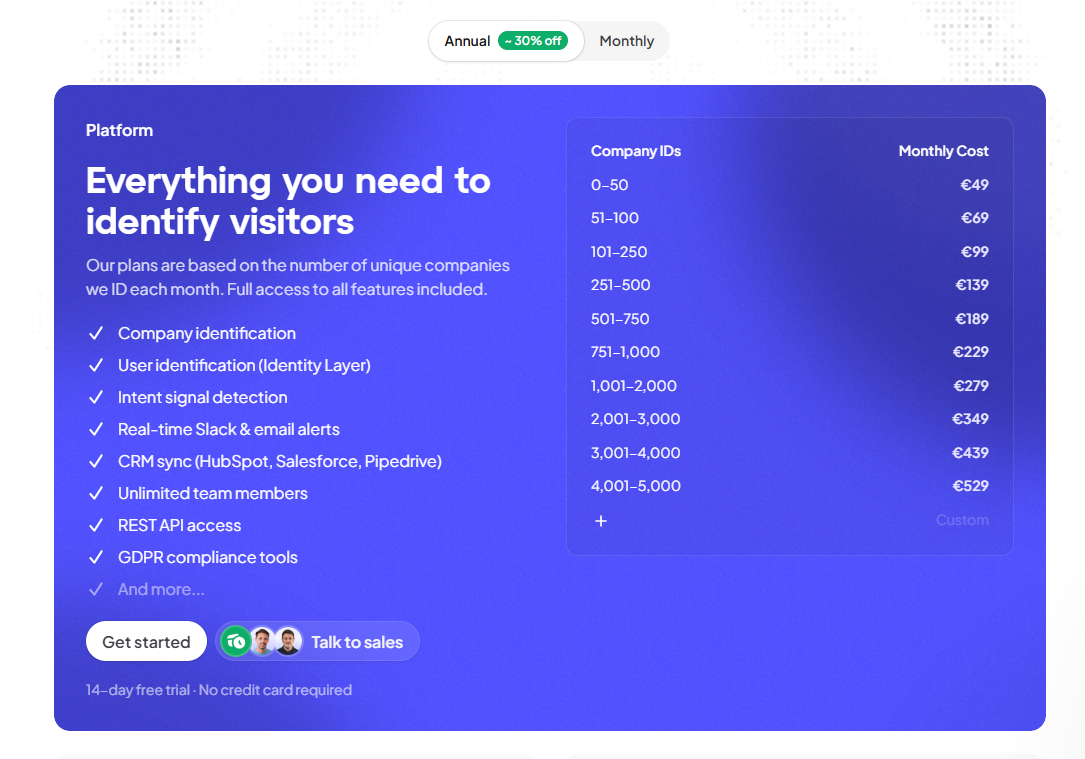

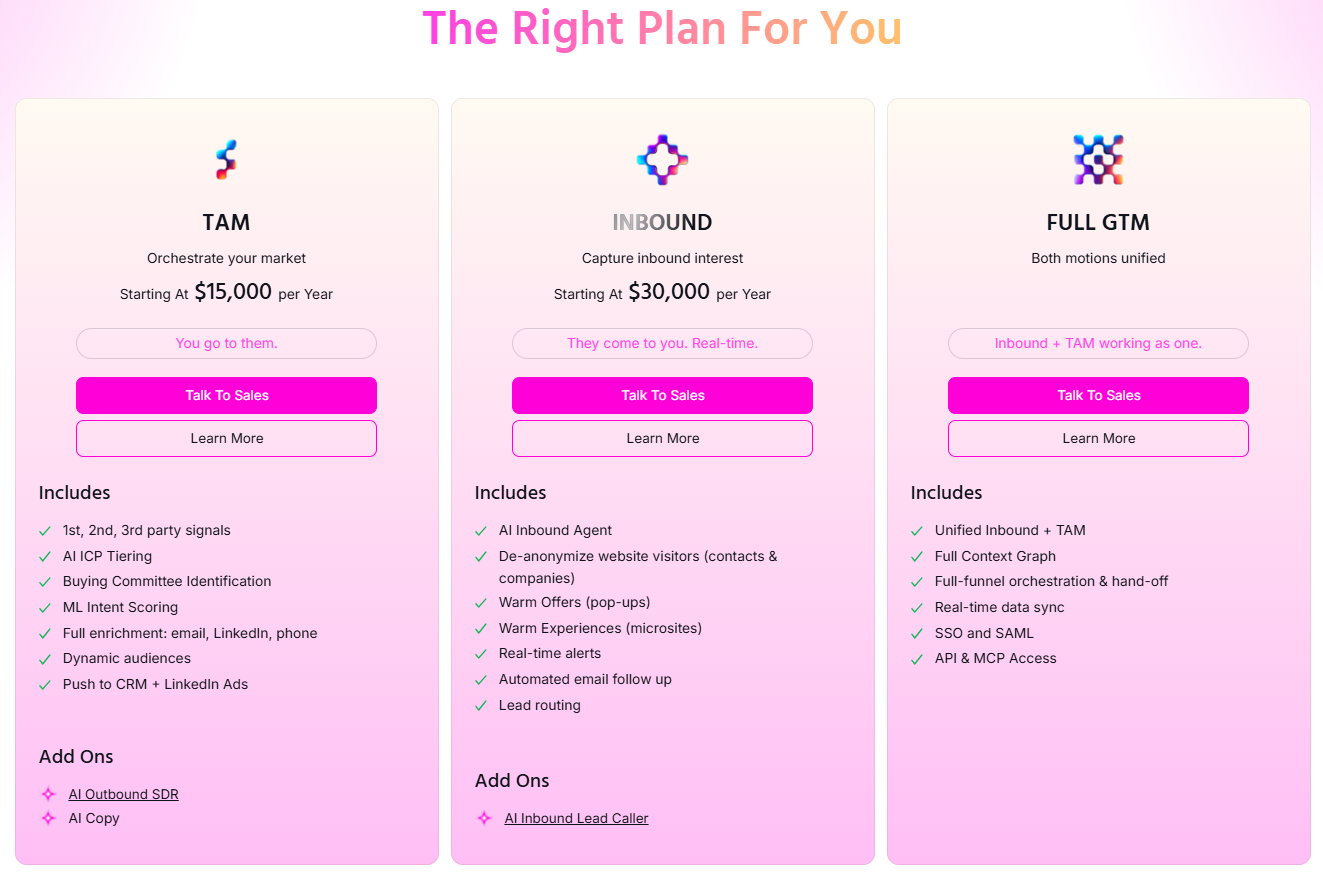

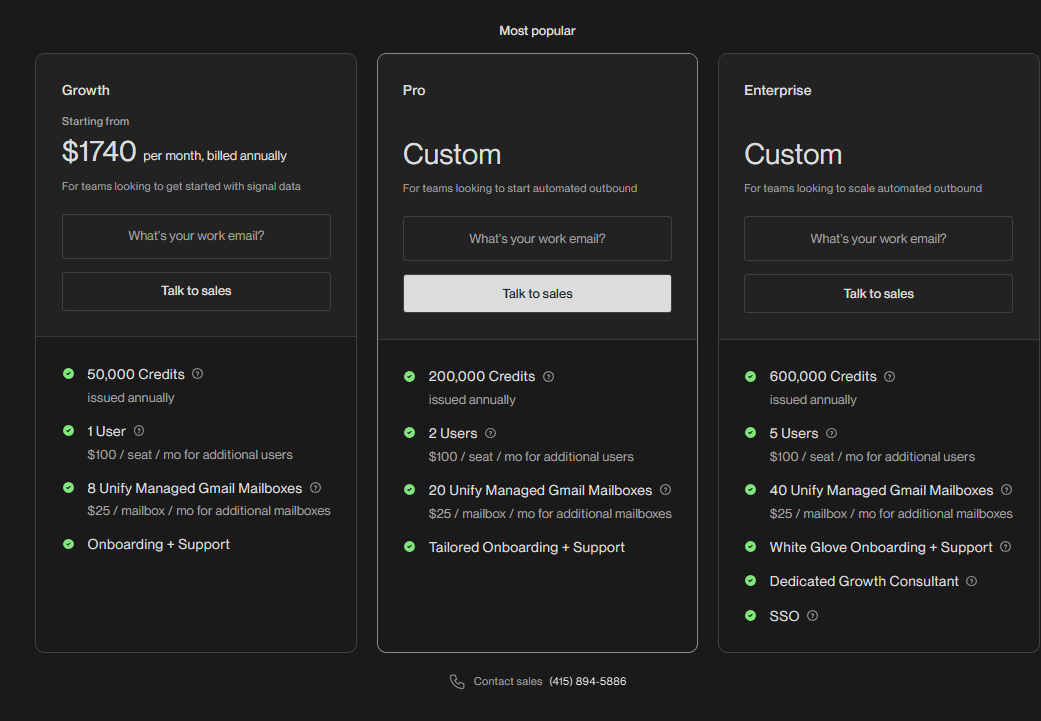

This is Warmly’s domain specifically.

We are building for the world where revenue is no longer managed through separate systems of record, separate point solutions, and separate human teams trying to coordinate around fragmented context.

We are building for the world where revenue becomes a learning system.

That is the inevitable conclusion of everything above.

If AI turns work into agent loops, and those agent loops create traces, and those traces become memory, and that memory improves the next action, then the highest-value system in GTM is the one that can unify the learning across the entire revenue motion.

Not a sales tool.

Not a marketing tool.

A GTM learning system.

This is why the next great GTM platform will not be judged by whether it fits cleanly into today’s sales or marketing budget.

It will be judged by whether it owns the learning loop that turns demand into revenue.

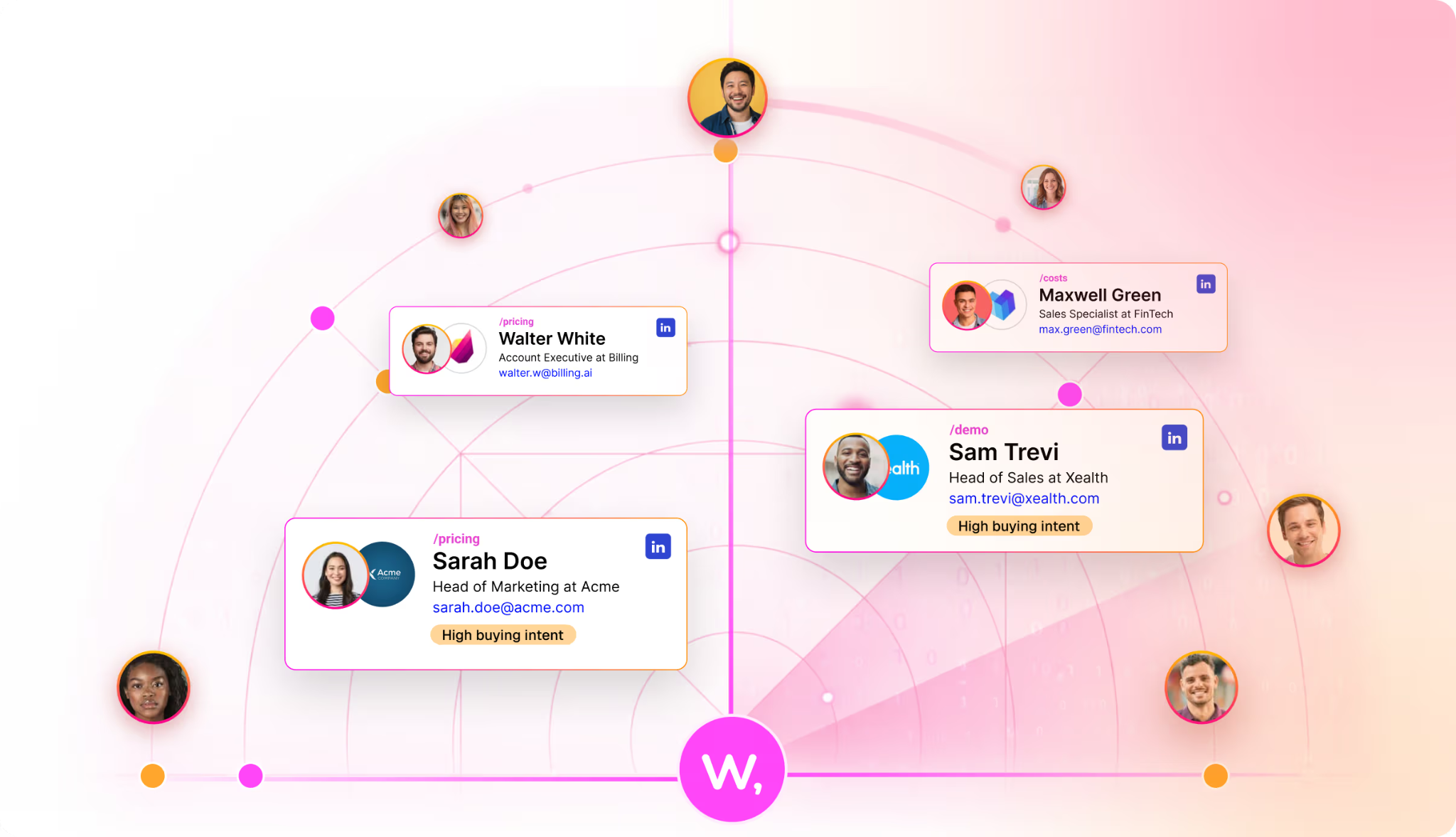

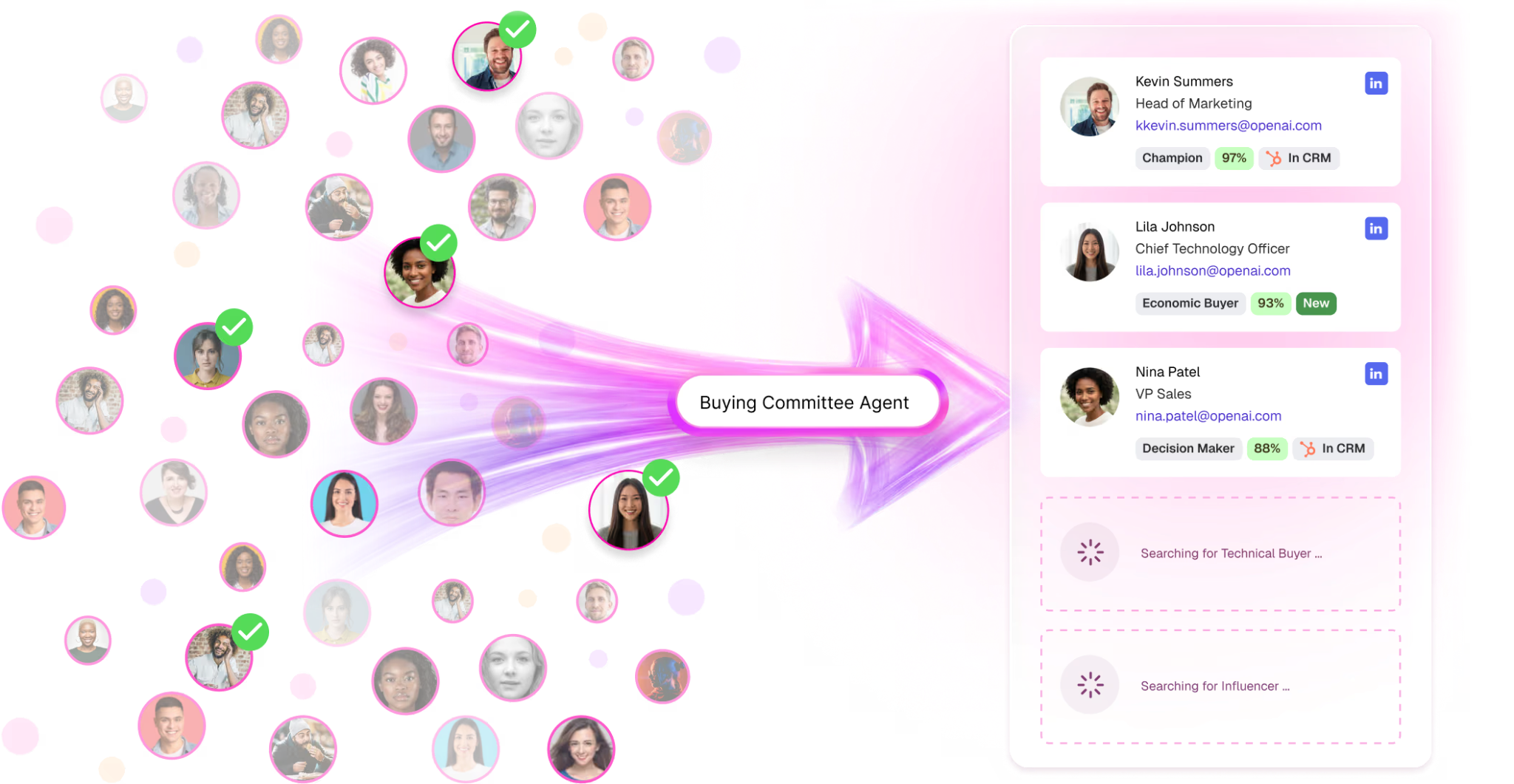

Can it identify the buyer?

Can it understand the account?

Can it interpret intent?

Can it personalize the experience?

Can it route the right moment to the right human?

Can it automate the work that is safe to automate?

Can it learn from what happened?

Can it make the next campaign, the next email, the next sales call, and the next buyer journey smarter?

That is the category.

Not sales automation.

Not marketing automation.

Revenue learning.

The question is no longer whether a capability belongs to sales or marketing.

The question is whether it improves the revenue learning loop.

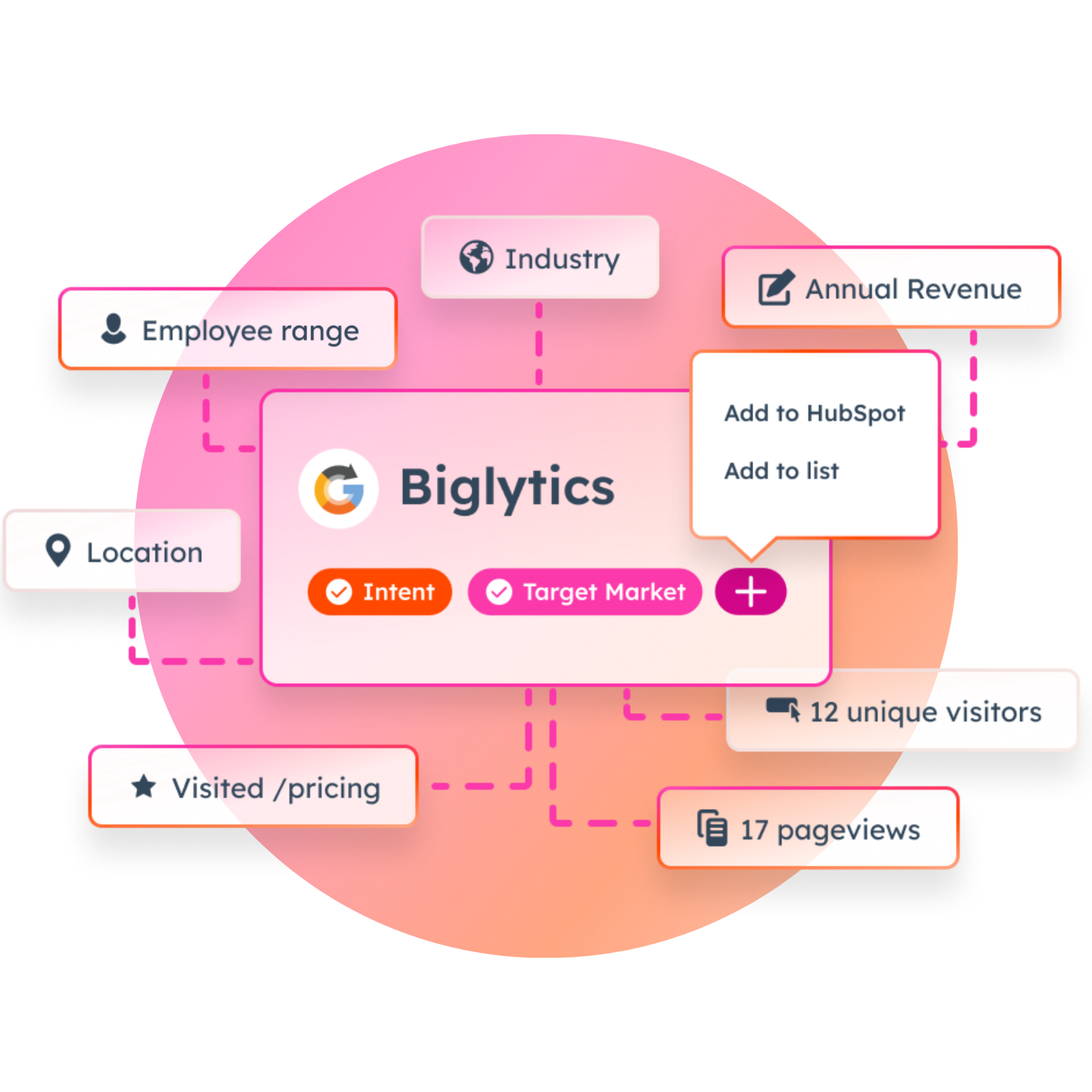

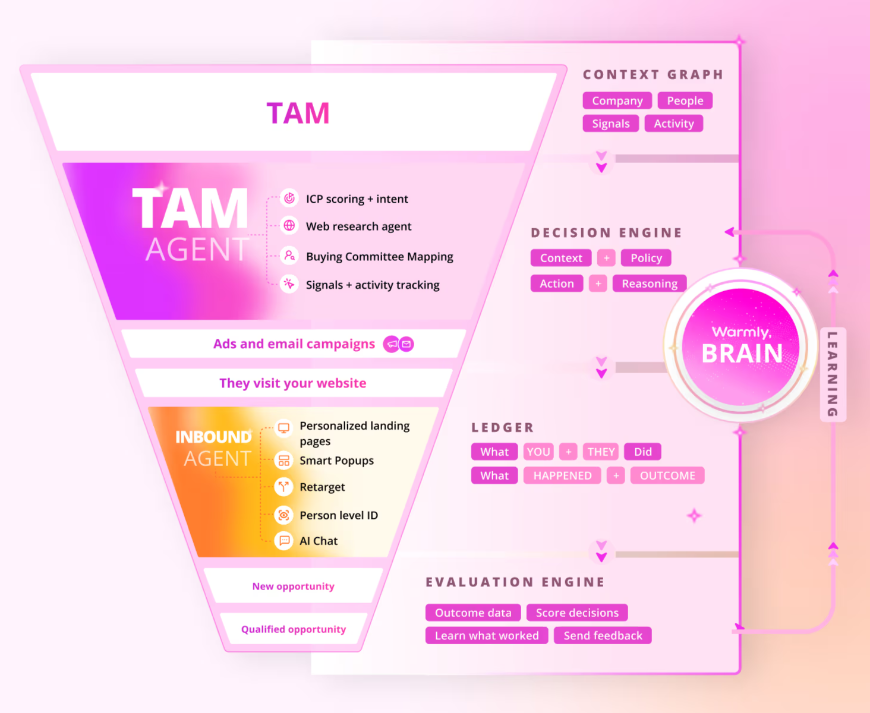

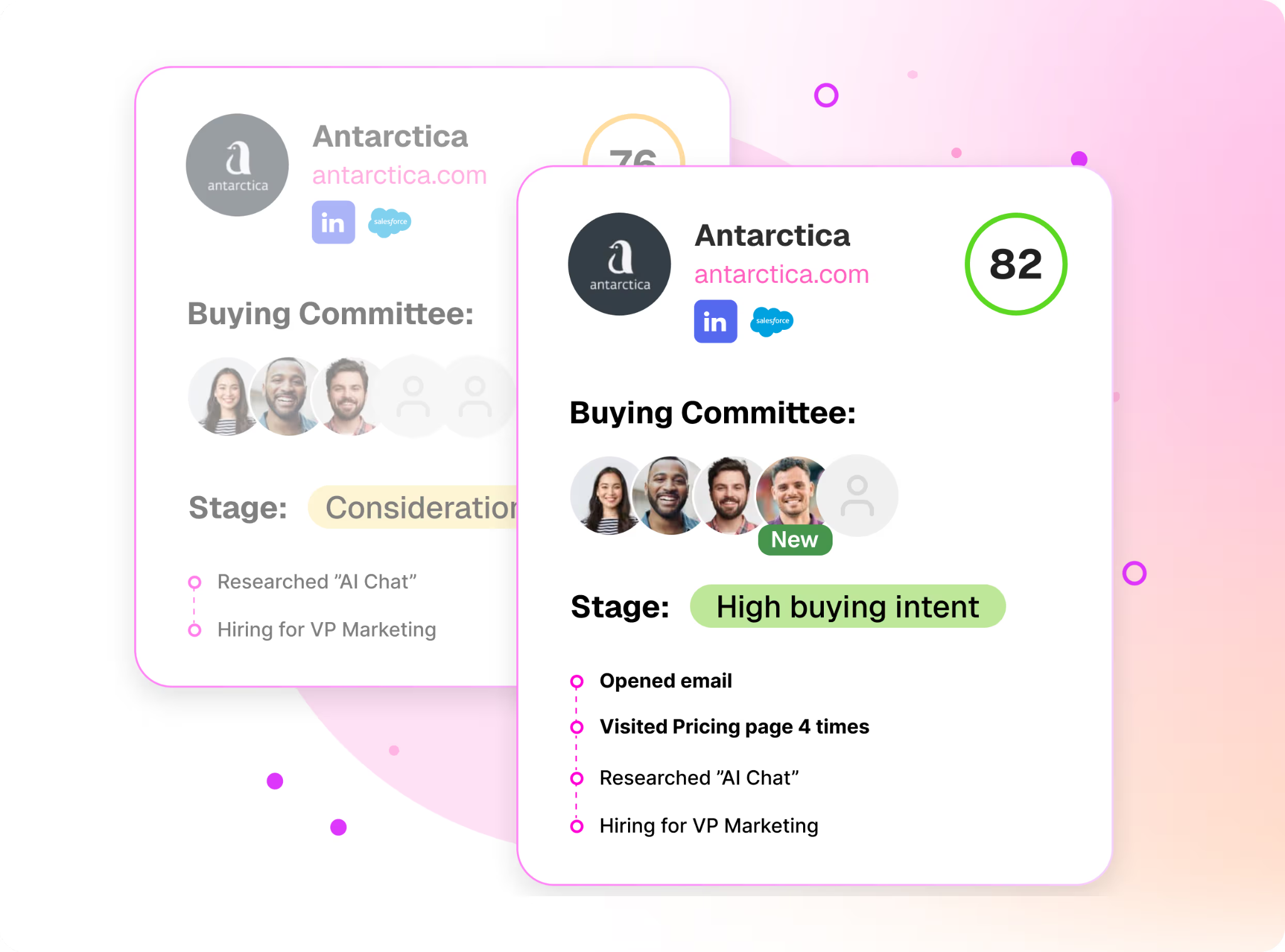

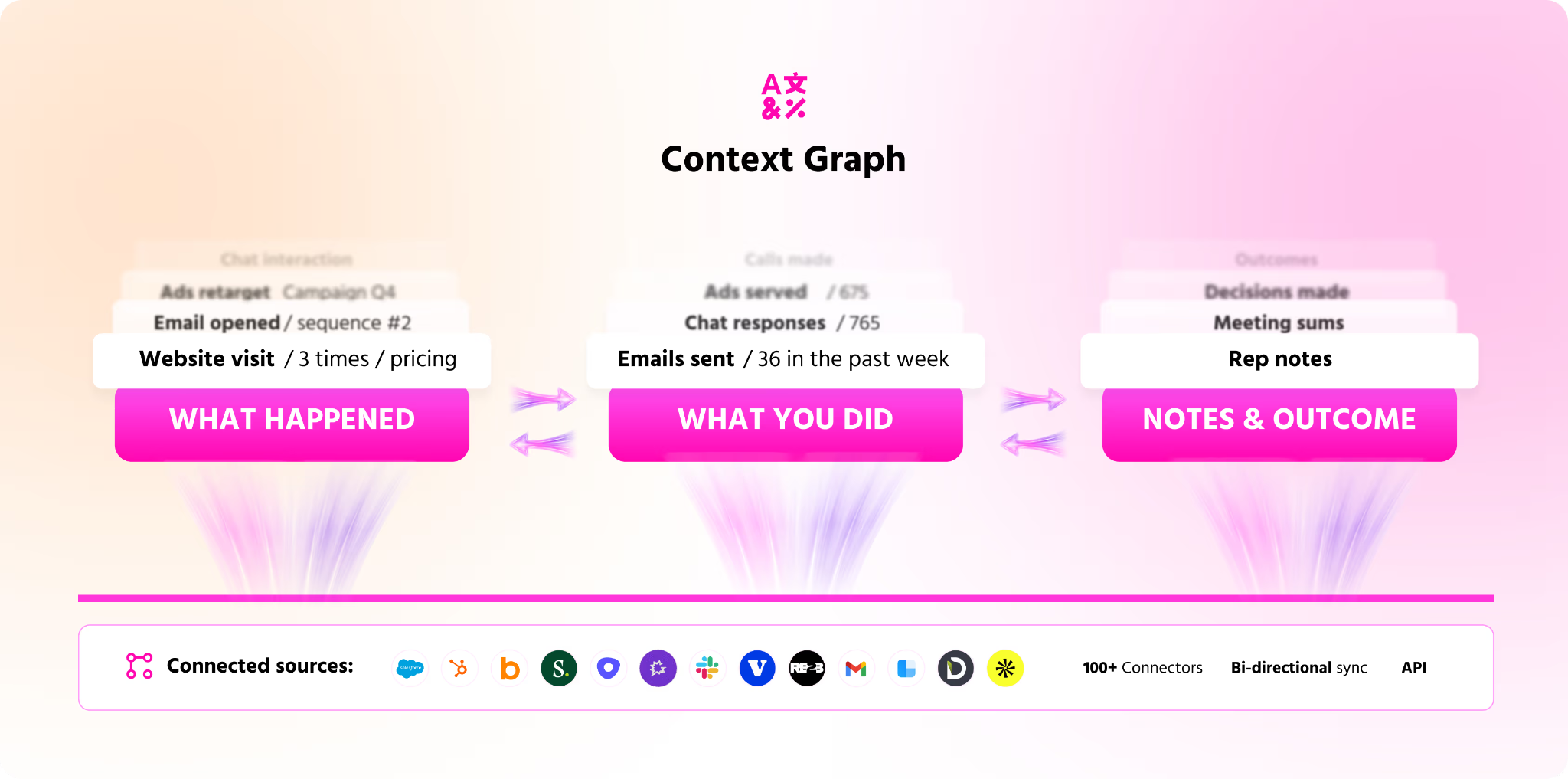

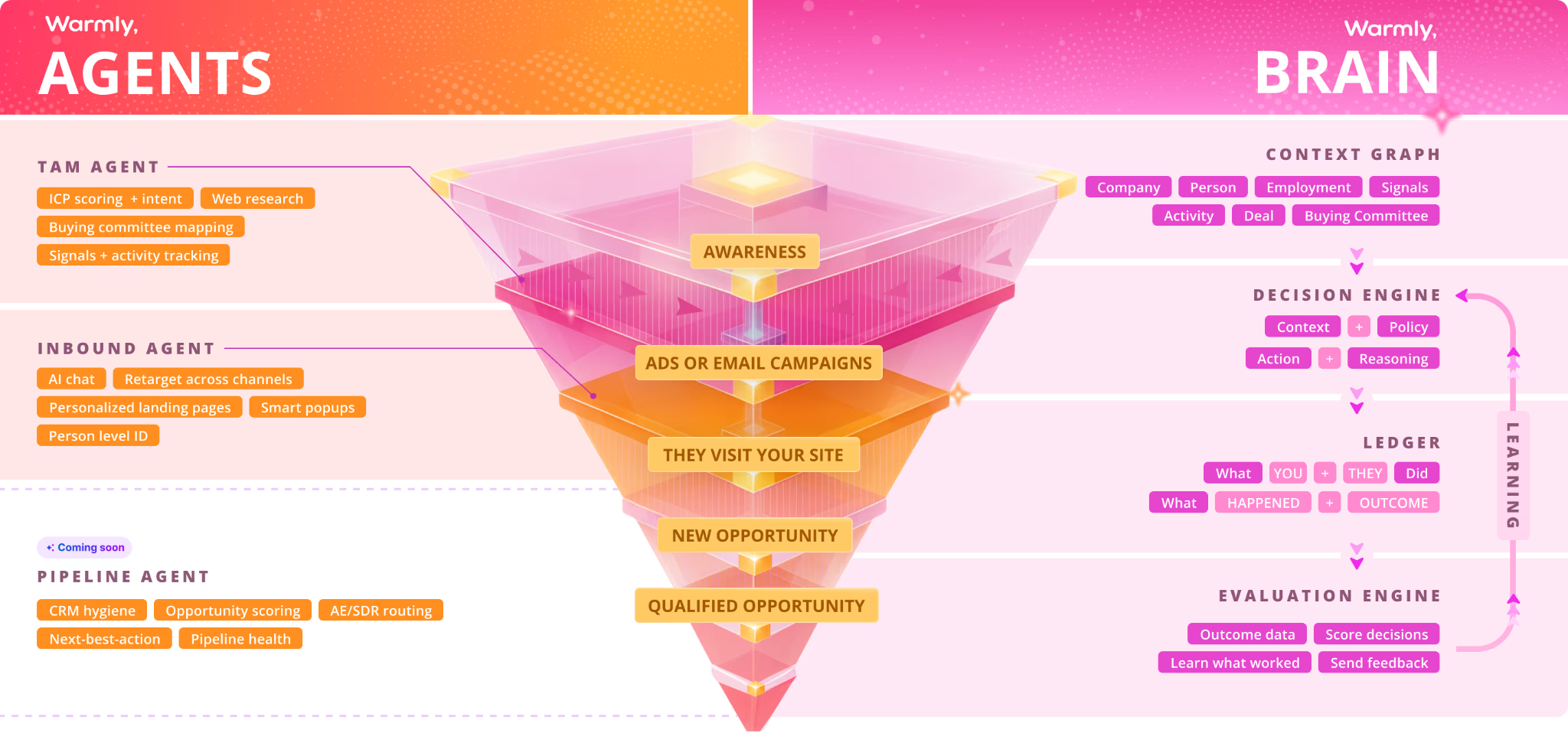

The foundation of that system is the Context Graph.

The Context Graph is not just a database.

It is not just enrichment.

It is not just visitor identification.

It is not just intent data.

It is not just CRM notes.

It is the memory layer for how revenue work actually happens.

Who visited the site?

What company are they from?

Are they in ICP?

What did they care about?

Who else from the buying committee showed intent?

What pages did they visit?

What ads did they see?

What emails did they open?

What did sales say last time?

What objection came up?

What competitor were they evaluating?

What use case matters?

What message converted?

What offer worked?

What action created pipeline?

What actually turned into closed-won revenue?

That is the Context Graph.

It is the shared memory of the revenue system.
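To make the idea concrete, here is a toy sketch of a shared memory layer. It is not how Warmly's Context Graph is implemented. The `ContextGraph` class, the `Event` fields, and the source labels are assumptions for illustration. The only property it demonstrates is that every team writes to one store and every agent reads from it.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Event:
    account: str
    source: str   # e.g. "web", "ads", "sales_call", "crm"
    detail: str


class ContextGraph:
    """Hypothetical shared memory: every team writes events, every agent reads them."""

    def __init__(self) -> None:
        self._events: Dict[str, List[Event]] = defaultdict(list)

    def record(self, event: Event) -> None:
        self._events[event.account].append(event)

    def context_for(self, account: str) -> List[str]:
        """Return everything known about an account, across all sources, in arrival order."""
        return [f"{e.source}: {e.detail}" for e in self._events.get(account, [])]

    def sources_for(self, account: str) -> Set[str]:
        """Return which parts of the revenue motion have touched this account."""
        return {e.source for e in self._events.get(account, [])}


graph = ContextGraph()
graph.record(Event("Acme", "web", "visited pricing page"))
graph.record(Event("Acme", "sales_call", "objection: security review"))
graph.record(Event("Acme", "ads", "clicked retargeting ad"))

# An outbound agent and a content agent would both start from this same context.
for line in graph.context_for("Acme"):
    print(line)
```

A real version would need identity resolution, permissions, and outcome links back to pipeline and closed-won revenue, none of which this sketch attempts.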

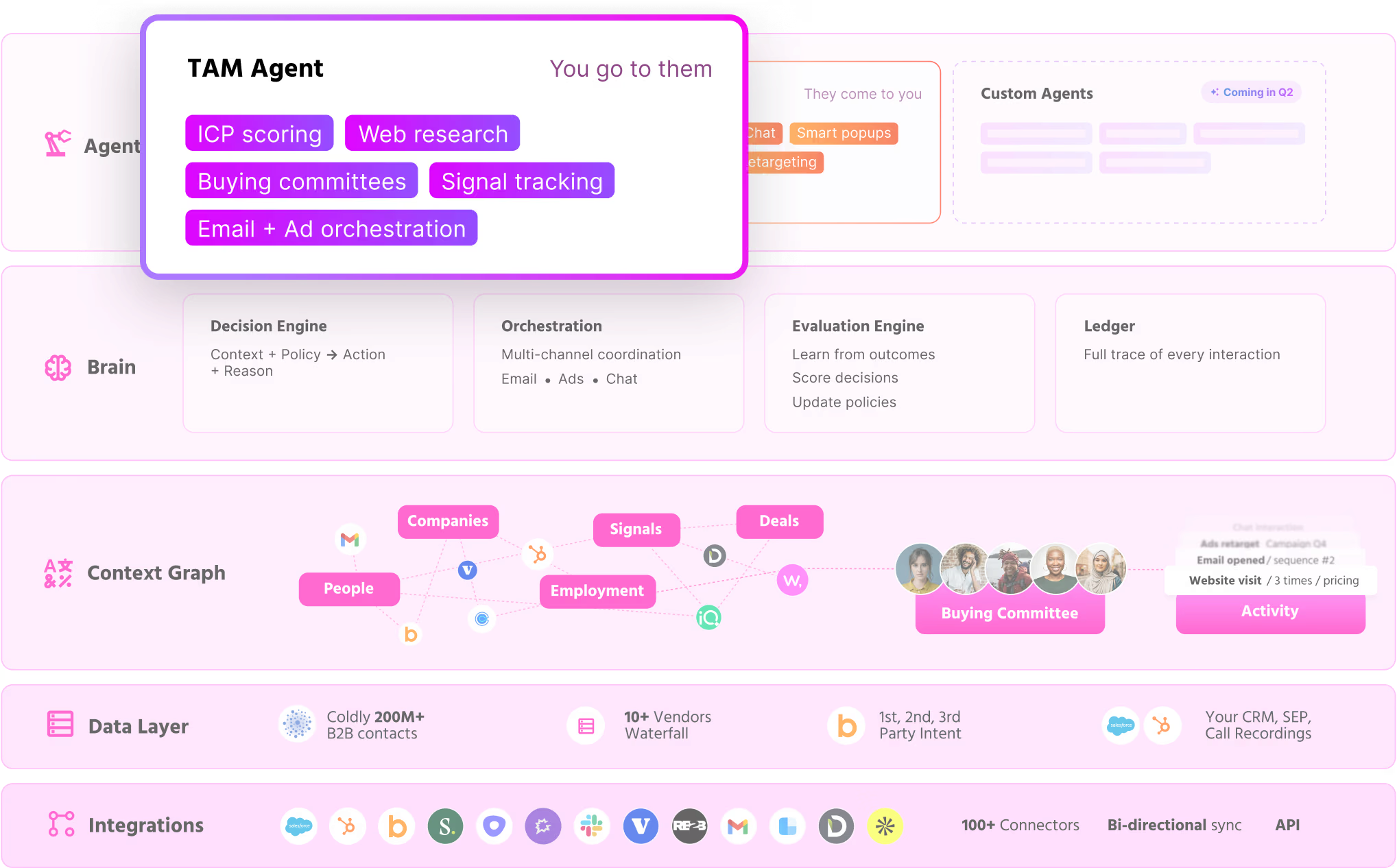

And on top of that memory layer, you can build agents.

Signal agents.

Inbound agents.

Outbound agents.

TAM agents.

Routing agents.

Research agents.

Follow-up agents.

Retargeting agents.

Meeting-booking agents.

Revenue orchestration agents.

But the agent itself is not the moat.

The moat is the system that lets the agent think with context, act with governance, learn from outcomes, and improve the next action.

That is why the Context Graph matters.

It lets every agent start from the collective learning of the entire revenue motion.

A sales call should make marketing smarter.

An ad conversion should make sales smarter.

A website visit should make outbound smarter.

A lost deal should make qualification smarter.

A closed-won deal should make the next campaign smarter.

Every interaction becomes part of the learning loop.

That is the difference between a workflow and a revenue brain.

A workflow executes steps.

A revenue brain learns which steps create outcomes.

This is also why companies cannot let every team build random AI workflows in isolation.

That creates GTM bloat.

One marketer builds a content agent.

One SDR builds a prospecting agent.

One AE builds a follow-up agent.

One RevOps person builds a routing agent.

One CS person builds an account-health agent.

At first, that feels like progress.

Everyone is moving faster.

Everyone has their own assistant.

Everyone is automating something.

But very quickly, the company has dozens of disconnected agents with different prompts, different data access, different approval logic, different memory, different logging, and different definitions of what “good” means.

The content agent does not know what sales is hearing on calls.

The outbound agent does not know what marketing just learned from ad conversion.

The routing agent does not know which accounts CS is worried about.

The sales follow-up agent does not know which messaging is currently working across the market.

The company ends up with more AI activity, but not more organizational intelligence.

That is the trap.

AI sprawl feels like leverage until it becomes another layer of operational debt.

The solution is not fewer agents.

The solution is a shared layer on top.

A common spine for context, memory, governance, approvals, model routing, observability, and outcomes.

Every new agent should make the whole system smarter.

Every workflow should feed the same memory layer.

Every action should be tied to a measurable outcome.

Every human approval should become training data.

Every win and every loss should improve the next decision.

That is what Warmly is building.

A context graph and learning system that powers all of GTM.

The marketing leader uses it to manage automated workflows, signals, messaging, campaigns, qualification, orchestration, and pipeline generation.

The sales leader uses it to manage people, accounts, relationships, trust, deal strategy, and the high-context human moments that still matter.

But both leaders are using the same revenue brain.

That is the key.

We will not have sales tools and marketing tools in the same way we had them before.

The thing that is sold needs to help both departments because both departments need to move in lockstep.

Both departments need to leverage the ability to unify learnings across the organization.

Sales needs the market intelligence marketing is creating.

Marketing needs the account intelligence sales is creating.

The agent fleet needs both.

This is why marketing becomes the operating system for the revenue learning loop.

Marketing owns the largest signal surface area.

Marketing sees the market before sales does.

Marketing sees anonymous demand.

Marketing sees intent before a form fill.

Marketing sees which messages resonate.

Marketing sees which segments engage.

Marketing sees which offers convert.

Marketing sees which campaigns create pipeline.

Marketing sees the top of the funnel where there is the most data, the most experimentation, and the fastest feedback loops.

In an agentic world, whoever owns the signal layer increasingly owns the learning loop.

And whoever owns the learning loop increasingly owns the revenue operating system.

This does not mean marketing replaces sales.

It means marketing expands from generating leads to governing the system that turns market signals into revenue actions.

Sales becomes more consultative, more strategic, more trust-based, and more focused on the moments where human judgment matters.

Marketing becomes the team that feeds, governs, and improves the revenue brain.

Sales remains the human trust layer.

Marketing becomes the signal and learning layer.

The shared system between them becomes the revenue operating system.

The revenue leader becomes the person who knows the domain deeply enough to direct the system.

They do not just manage campaign calendars and sales stages.

They manage the learning loops that decide which accounts to prioritize, which messages to test, which actions to automate, which moments require humans, and which outcomes matter.

This is the inevitable conclusion of the agentic GTM system.

Revenue teams stop being organized around who does which task.

They start being organized around how the system learns to produce revenue.

That is why Warmly is not simply building sales automation.

We are building the context graph and learning system for GTM.

A system that identifies demand, understands context, recommends action, executes where safe, routes to humans where trust matters, learns from outcomes, and compounds over time.

That is what ActiveCampaign should care about.

Because the GTM platform of the future will not be a sales tool or a marketing tool.

It will need to help both departments move in lockstep.

It will unify learnings across the organization.

It will understand the buyer.

It will know the account.

It will coordinate the journey.

It will trigger the right action.

It will govern the agent fleet.

It will learn from every outcome.

And it will make every seller, marketer, and customer-facing teammate more effective through the same shared revenue brain.

That is why marketing becomes the operating system for revenue.

We are moving away from functional jobs and toward scaled problem solving

The purpose of a GTM team does not change.

The purpose is still to grow revenue, increase retention, expand accounts, take market share, create viral loops, and do it efficiently.

What changes is the leverage available to solve those problems.

The number of emails that need to be sent will go down.

But there is an endless number of problems that need to be solved inside a company.

That number will continue to grow, because as a company grows it runs into more and more problems.

Because AI does not have a soul, humans still need to manage it.

But AI will allow the human to do more.

This idea of scaled problem solving leads to a new SOP for the GTM team.

You will use agents to scale the problem solving that humans used to do by hand.

Hiring, training, and maintaining humans to do that work took a lot longer.

The purpose of the GTM team does not change.

Solving problems.

Diagnosing problems.

Working as a team to achieve goals.

The goals for the GTM team are grow revenue, grow retention, increase upsells, take market share, create viral loops, and do it efficiently.

You will never run out of problems to solve here. Once you have maxed out your GTM loops, you realize the next lever of optimization might be the product or the segment, and then you are introduced to almost infinite permutations of things to try and problems to solve.

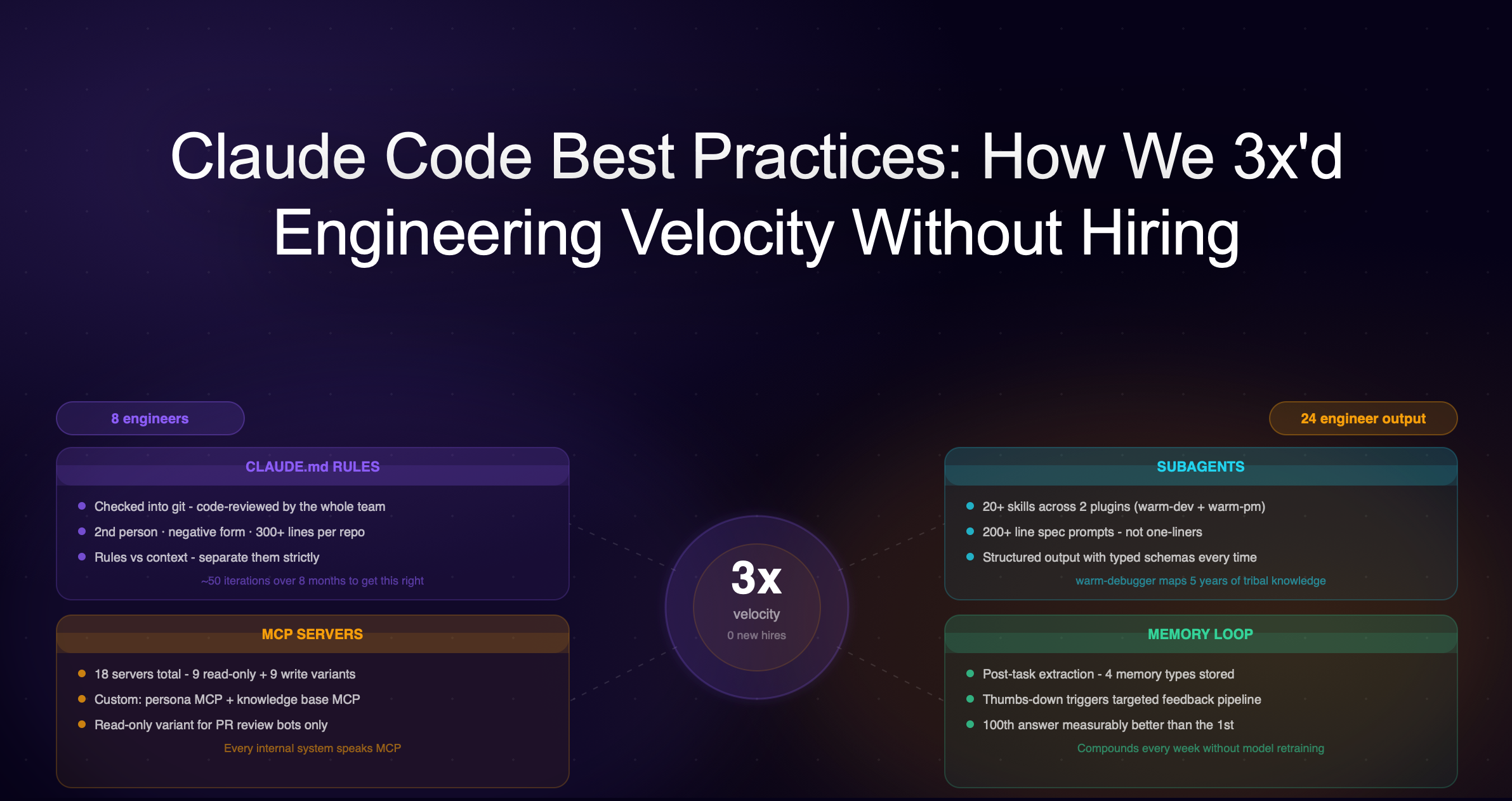

What is happening inside Warmly, and what I believe will happen in every company, is that the number of programmers is increasing even though other department headcount is decreasing.

Our GTM team is doing much the same thing as what our engineers are doing.

We create internal products using Claude Code off of our data, like AI SDRs, blog post writers, automated playbooks, and call coaching.

We are systematically building our own SaaS to solve our own specific pains.

Simultaneously, our engineers are building features so quickly that good planning has become the bottleneck.

It is no longer about writing code.

They are now tasked with achieving customer outcomes with the software they ship.

And each engineer is in charge of a different outcome.

In a way, the GTM team as a whole is doing the same thing engineers are doing.

We are leveraging AI to solve growth problems, while our dev team is building to solve customer problems.

But the process is the same.

Diagnose.

Plan.

Build.

Ship.

Evaluate results.

And so much of this is done interfacing with Claude Code, Codex, OpenClaw, ChatGPT, Cowork, AI image generators, and Claude Design.

The number of engineers in the company has increased.

Because the role of engineering is to solve problems to achieve a goal.

For us, engineering was never about writing and maintaining code.

What is the definition of coding?

Describing a specification for a computer to go build.

How many people are doing that now?

Probably went from 30 million to one billion.

I recently went to Macedonia to show our SDR, ops, and CS team how to use Claude Code.

Every SDR, ops, and CSM person there is now a coder.

Except an SDR with AI is not just a coder.

They are also an architect.

Their scoped problem has always been the same: increase outbound pipeline generation.

But now they just increased the value they can deliver to the team because they are scaling out their manual tasks with AI that has the ability to clone itself.

It was an amazing experience to meet the team in person and see firsthand how creative everyone was.

Their artistry has now been elevated beyond repetitive tasks of researching accounts and sending cold emails.

Ryan and Keanen, our CS rockstars who handled a lot of our renewals, built a tool using Claude Code that shows exactly where every account stands. It ingests call transcripts, product usage, and engagement sentiment scores, and it surfaces the follow-ups to do next.

The follow-ups are what they are now automating with AI as well.

Lauren, our head of sales, used Claude Code to essentially recreate the best aspects of Gong’s call coaching from Sybill’s call recorder.

Because her app has full context over CRM, SEP, and all of our sales systems, she has visibility into the health of every deal for her AEs and can create checklists for AEs to approve.

Once an AE approves an item by checking a box, a scheduled AI job completes the task, like updating the pipeline deal stage or creating the deal.

These are things sellers should not be spending their time on, but sales leaders absolutely need them done to understand whether we are tracking toward revenue goals.
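The approve-then-execute pattern is simple enough to sketch. This is not Lauren's actual app. The `ChecklistItem` class, the `run_scheduled_job` function, and the in-memory `crm` dictionary are stand-ins I am assuming for illustration. A real job would call the CRM's API on a schedule.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ChecklistItem:
    description: str
    task: Callable[[], str]   # the work to run once approved
    approved: bool = False    # the AE's checkbox
    done: bool = False


def run_scheduled_job(items: List[ChecklistItem]) -> List[str]:
    """Run every approved, not-yet-done task. Unapproved items are left untouched."""
    results = []
    for item in items:
        if item.approved and not item.done:
            results.append(item.task())
            item.done = True
    return results


# Stand-in for the CRM; a real job would call the CRM's API instead.
crm = {"Acme deal": "Discovery"}


def move_acme_to_proposal() -> str:
    crm["Acme deal"] = "Proposal"
    return "Acme deal -> Proposal"


checklist = [
    ChecklistItem("Move Acme deal to Proposal", move_acme_to_proposal),
    ChecklistItem("Create deal for Globex", lambda: "created Globex deal"),
]

checklist[0].approved = True          # the AE ticks one box
print(run_scheduled_job(checklist))   # only the approved task runs
```

The human stays in the loop for the decision, and the system does the clicking.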

Lina, our marketing manager, used Claude Design to make the launch video for our AI Autopilot agent, something that used to require us to bring on a specialized video content agency for thousands of dollars.

She built it in a couple of hours for less than $100 in token costs, using screenshots and prompts as input.

Every single role inside Warmly has just been elevated with AI.

If your job is the task, it will be disrupted by AI.

If your job includes those tasks, it is important to use AI to automate those tasks.

And you stay on as the architect, solving higher- and higher-level problems up the value chain: the ones that typically require more context and where no reinforcement learning loop has existed in a controlled environment.

The three human jobs that matter more

I see three main jobs of humans as we move forward.

First, if you are a deep expert of a domain, it becomes more about being an orchestrator for AI agents.

We need our head of sales, Lauren, because to orchestrate the team of SDR agents and RevOps agents doing the lower-context jobs that humans with a bit of guidance used to do, she has to understand what to tell them to do inside the company.

I will use these agents as well to do the long-tail of marketing needs, like finding negative keywords for search ads or negative title matching for LinkedIn ad campaigns.

It just was not worth my time before, but finally AI can go do those things well.

Both Lauren and I need to have a deep understanding of what questions to ask, what problems to solve, and what to try.

We also need to know the constraints of what AI is capable of, and just as important, what it is not capable of doing but might seem like it is doing well.

And we need to know what humans are capable of, so we know where to swap them in.

Second, you need someone like Lina, who is our AI-pilled marketing manager.

She does not have the same domain expertise or years of experience as Lauren to always know what to do, but she builds agents on the weekend, stays up late trying the latest tools, and reads the latest X threads to stay at the cutting edge of how to orchestrate agents for high leverage.

She is a force multiplier for the business and one of the reasons our pipeline was able to 3x in a month in March 2026, when the GTM team’s budget and headcount were cut to a fraction.

You need to combine these two types of expertise to represent the human layer of your AI system.

Deep domain expertise.

And cutting-edge AI orchestration.

The third human job is people who have extremely high IRL people skills, like Max and Keegan.

We still work with people.

We greet them in real life.

We build relationships with them that create mutual gain.

Those relationships multiply the effects of the AI system by reducing friction or creating more access to data, power, and customers.

We still need communities.

And this becomes a more important role for humans.

Over time, if you imagine even a 10% rate of compounding improvement in AI, you start to see more and more rungs of the context hierarchy of problems being solved by agent workforces.

Humans move further up into solving the highest-context problems and continuing to answer what should we do, with AI executing.

Humans still need other humans though.

We get sick without human connection; we need it to feel less lonely.

But after adding recursive context, the human role becomes sharper.

Humans are not needed because agents cannot remember.

Agents may eventually remember better than humans.

Humans are needed where there is no reliable reinforcement learning loop yet.

The more a workflow has a reinforcement loop, the more agentic it becomes.

The less a workflow has a reinforcement loop, the more human judgment, taste, creativity, and trust still matter.

In GTM, AI will move fastest into high-volume, structured, verifiable workflows first.

AI SDR.

AI inbound qualification.

AI follow-up.

AI pipeline hygiene.

AI demos for lower-ACV products.

AI AEs for transactional sales.

AI sending credit card links.

AI answering product questions.

AI routing accounts.

AI monitoring intent.

These are workflows where the system can observe the input, take an action, measure an outcome, and improve the loop.
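One simple way to picture that loop is an epsilon-greedy bandit choosing between message variants. This is a swapped-in textbook technique, not a description of how any particular AI SDR works. The `MessageBandit` class and the variant names are hypothetical.

```python
import random
from typing import Dict, List


class MessageBandit:
    """Epsilon-greedy choice among message variants, learning from observed replies."""

    def __init__(self, variants: List[str], epsilon: float = 0.1, seed: int = 0) -> None:
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.sends: Dict[str, int] = {v: 0 for v in variants}
        self.replies: Dict[str, int] = {v: 0 for v in variants}

    def reply_rate(self, variant: str) -> float:
        sends = self.sends[variant]
        return self.replies[variant] / sends if sends else 0.0

    def choose(self) -> str:
        # Explore occasionally; otherwise exploit the best reply rate seen so far.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.sends))
        return max(self.sends, key=self.reply_rate)

    def record(self, variant: str, replied: bool) -> None:
        # Measure the outcome and feed it back into the next decision.
        self.sends[variant] += 1
        self.replies[variant] += int(replied)


# epsilon=0.0 turns off exploration so this small example is deterministic.
bandit = MessageBandit(["pain-led", "feature-led"], epsilon=0.0)
bandit.record("pain-led", replied=True)
bandit.record("feature-led", replied=False)
print(bandit.choose())
```

The loop only works because each step is observable: the message sent, the reply or silence, and the updated rate. Take away any of those and the agent has nothing to learn from, which is the situation in the human-heavy areas that follow.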

But humans remain more important in areas where the environment is not fully observable or repeatable.

Enterprise sales.

Strategic account navigation.

Brand.

Category design.

Founder-led storytelling.

IRL relationship building.

Executive trust.

Creative taste.

Market timing.

Political navigation.

New category creation.

Finding points of scarcity.

Identifying alpha before it becomes obvious enough for AI to optimize.

These are the places where the data does not exist yet, the reinforcement loop is weak, the taste is subjective, the trust is human, and the right answer may have never existed before.

This is also why, as we are hiring more people, the hardest role to hire for is not a BDR, SMB AE, marketer, or VP Sales/CRO.

It is the enterprise strategic AE who is adapting to this new AE reality.

People will still want to buy from people because ultimately you are paying for trust in a world of AI slop.

Memory, trust, and the new vendor moat

Look at what happened to Klarna.

Klarna is not a perfect example, and it should not be treated as a clean story of “AI replaces everyone and everything gets better.”

But it is one of the clearest early examples of what happens when a company aggressively uses AI to compress headcount, increase revenue per employee, and rethink how much work needs to be done by humans.

In 2024, Reuters reported that Klarna had reduced active positions from about 5,000 to 3,800 over roughly 12 months, mostly through attrition rather than layoffs.

Klarna said its AI assistant was doing the work of 700 employees and reducing average customer service resolution time from 11 minutes to two minutes.

Over that same period, Klarna said revenue per employee increased 73%, from 4 million Swedish crowns to 7 million.

Then in 2025, Klarna said its headcount had dropped from 5,527 to 2,907 since 2022, mostly through natural attrition, with technology rather than new hires taking over the roles of departing staff.

The company said technology was carrying out the work of 853 full-time staff, up from 700 earlier that year.

Over the same period, Klarna said revenue had increased 108% while operating costs stayed flat.

By Q3 2025, Klarna reported record quarterly revenue of $903 million and said it expected to exceed $1 billion in revenue in Q4.

Again, this is not a perfect story.

Klarna also learned the limits of automation in customer-facing work and had to bring back more human options in support when quality mattered.

That is exactly the point.

AI does not eliminate humans everywhere.

It compresses the work where the loop is structured, measurable, and repeatable.

It exposes where humans still matter because the work requires trust, empathy, quality, judgment, or context the system cannot yet reliably handle.

So the lesson from Klarna is not “fire everyone.”

The lesson is that when AI systems are deployed aggressively, the revenue-per-employee frontier can move very quickly.

Companies can do more with smaller teams.

But only if they understand which work is structured, measurable, and repeatable enough to compress, and which work still needs humans.

Deciding what to build, for whom, when, and how to market to them is not a controlled environment.

Especially now, more than ever, the world is changing so fast, and the leverage to accomplish something is so vast, that you cannot afford to be making the wrong bets.

The power law is massive.

A small number of companies will grab everything because intelligence scales and generalizes so well, and it is only getting better.

Everyone in tech, including myself, is incentivized to remove friction from AI consuming as much data as possible.

So we build MCPs and APIs into our apps.

Even Salesforce has announced that it is going headless, which means they are building for agents to do work and are not optimizing for people clicking around in apps or UI.

The models keep getting smarter as pre-training, post-training, test-time inference, and agentic scaling each see big lifts.

And they are doing it for cheaper.

The cost of compute is decreasing rapidly as energy gets cheaper, helped by advances from AI and by better chip and data center design and production.

That means token costs are decreasing.

That means intelligence as a commodity is decreasing in cost.

When the cost of energy goes down, the cost of building anything goes down significantly, and we enter an age of abundance to solve even more problems.

Memory and reasoning are improving, so models solve problems better at inference time.

The pool of problems AI can solve well depends on how quickly you can build the reinforcement loop: an environment that accurately trains the AI to solve them.

The problems that are left are the ones where you cannot create an environment, because these are new problems we have never seen.

This happens daily.

Because of these learning loops and the scaling laws behind AI, you will see a world where an AI trained on your organization delivers outcomes better and faster than a human given the same task.

So the analogy for why you would stay with a vendor becomes similar to why you would stay with an employee.

Because they deliver the outcomes you need.

And you like the way they deliver it.

This mechanism can be simulated, replicated, and made more effective over time by AI as the AI lives inside your organization and builds the feedback loop of thinking, acting, observing outcomes, and learning from the outcome and decision traces.
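To make that loop concrete, here is a minimal sketch in Python of thinking, acting, observing, and learning from decision traces. It is an illustration under stated assumptions, not how any particular vendor implements it: the names `DecisionTrace`, `LearningLoop`, and `toy_environment`, along with the signal and play labels, are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class DecisionTrace:
    """One pass through the loop: what was seen, what was done, what happened."""
    context: str    # hypothetical signal label, e.g. "pricing_page_visit"
    action: str     # hypothetical play, e.g. "send_email"
    outcome: float  # 1.0 if the play worked, 0.0 if it did not


class LearningLoop:
    def __init__(self, actions):
        self.actions = list(actions)
        self.traces = []
        # (context, action) -> [summed outcome, number of attempts]
        self.stats = defaultdict(lambda: [0.0, 0])

    def think(self, context):
        """Pick the action with the best observed success rate for this context.

        Untried actions score highest, so each one is observed at least once.
        """
        def score(action):
            total, attempts = self.stats[(context, action)]
            return float("inf") if attempts == 0 else total / attempts
        return max(self.actions, key=score)

    def learn(self, trace):
        """Store the trace and update the running stats it contributes to."""
        self.traces.append(trace)
        entry = self.stats[(trace.context, trace.action)]
        entry[0] += trace.outcome
        entry[1] += 1

    def run_once(self, context, environment):
        action = self.think(context)            # think
        outcome = environment(context, action)  # act and observe
        self.learn(DecisionTrace(context, action, outcome))  # learn
        return action


def toy_environment(context, action):
    # Assumption for the demo: for this signal a call works and an email does not.
    return 1.0 if action == "call" else 0.0


loop = LearningLoop(["send_email", "call"])
for _ in range(5):
    loop.run_once("pricing_page_visit", toy_environment)

print(loop.think("pricing_page_visit"))
```

The accumulated `traces` list is the part that stays with the organization. The longer the loop runs, the more of its choices rest on outcomes observed inside that specific company.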

The data that the AI accrues from performing a job well over the course of time inside an organization becomes proprietary information to the company and a reason to retain the vendor.

Why would you fire an employee who is doing a great job? Replacing a vendor carries the same risk: you lose all that context and organizational know-how.

Also, the other vendor could just be AI slop.

It is risky.

This is the real moat.

Not just data.

Not just workflow.

Not just model quality.

Permissioned memory.

A trusted AI system that has been allowed to operate, observe, learn, and improve inside the enterprise.

That is much harder to replace than a dashboard.

And this is why the infrastructure layer matters so much.

That memory only compounds if the company has the architecture to capture it, govern it, route it, audit it, and turn it into better decisions.

No shared orchestration layer means no shared memory.

No shared memory means no compounding intelligence.

No compounding intelligence means no moat.
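As a rough illustration of what "capture it, govern it, audit it" could mean in practice, here is a small Python sketch of a shared memory that checks permissions on every read and write and logs each attempt. The `SharedMemory` class, the agent names, and the topic names are assumptions invented for this example. A real orchestration layer would be far more involved.

```python
from dataclasses import dataclass, field


@dataclass
class SharedMemory:
    # agent name -> set of topics that agent may read and write (hypothetical policy)
    permissions: dict
    records: dict = field(default_factory=dict)    # topic -> list of facts
    audit_log: list = field(default_factory=list)  # (agent, operation, topic, allowed)

    def _check(self, agent, operation, topic):
        """Govern and audit: decide whether access is allowed and log the attempt."""
        allowed = topic in self.permissions.get(agent, set())
        self.audit_log.append((agent, operation, topic, allowed))
        return allowed

    def write(self, agent, topic, fact):
        """Capture: store a fact under a topic if the agent is permitted."""
        if not self._check(agent, "write", topic):
            raise PermissionError(f"{agent} may not write {topic}")
        self.records.setdefault(topic, []).append(fact)

    def read(self, agent, topic):
        """Route: return a copy of what is known about a topic to a permitted agent."""
        if not self._check(agent, "read", topic):
            raise PermissionError(f"{agent} may not read {topic}")
        return list(self.records.get(topic, []))


memory = SharedMemory(permissions={
    "sales_agent": {"account_notes"},
    "marketing_agent": {"account_notes", "campaign_results"},
})

# The marketing agent captures a fact that the sales agent can later use.
memory.write("marketing_agent", "account_notes", "Attended the pricing webinar")
print(memory.read("sales_agent", "account_notes"))
```

Because both agents read and write the same store, what one learns is available to the other, and the audit log records who touched what. Without the shared store, each agent would start from zero.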

How companies win

This future is inevitable: every company is racing to build a hive-minded brain that grabs as many problems as it can and solves them agentically, better than its nearest competitor.

What drives the most revenue for a company is also what happens to diffuse AI into the economy the fastest.

We will all be on this treadmill for a while, because even if the US wants to slow down the pace of AI advancement because of public fears, China will keep going.

Someone is going to do it, which means we all have to.

So how does a company win in this environment where everyone is trying to create super AGI, whether directly or in their domain?

Companies need to find points of leverage faster and focus their AI scaling efforts there.

The power laws are only getting stronger, where 90% of the outcome is typically driven by 10% of the input.

To win, you need to focus 99% of the input on the 10% that delivers the 90%.

This means you can do more, and there are more paths to go down.

But it also means there are fewer paths that lead to the outcome you want, because everyone is doing it.

So 1% of paths will lead to the 99% outcome.

Finding that is not something AI can do yet.

If you are in the competitive rat race of growing a venture-backed company, this is where your humans should spend most of their time.

So when we are considering whether to hire someone in sales, CS, or marketing, we always give a big advantage to the person who is an expert in AI.

Because they will be able to elevate themselves and be the innovator who revolutionizes the industry, whatever the function.

What is intelligence?

It is not a word that equals humanity.

It is a commodity.

We are surrounded by intelligent people.

I am surrounded by people who are more intelligent than me in their domains.

They go deeper in their fields than I do, especially in engineering.

Some of them I think are superhuman.

And yet I have a role inside the company helping to shape a vision and mobilize everyone toward that shared vision.

Intelligence is a functional thing that we have created.

Humanity is not specified functionally.

Our life experience, tolerance for pain, determination, compassion, and generosity are superhuman powers.

They matter even more now that intelligence is becoming commoditized.

And being less intelligent than my peers in the traditional sense does not mean I will be any less successful than them, in the old world or the new.

Democratization of intelligence is not something we should be afraid of.

We should be inspired by it.

AI is an incredibly powerful tool to make humanity even more powerful.


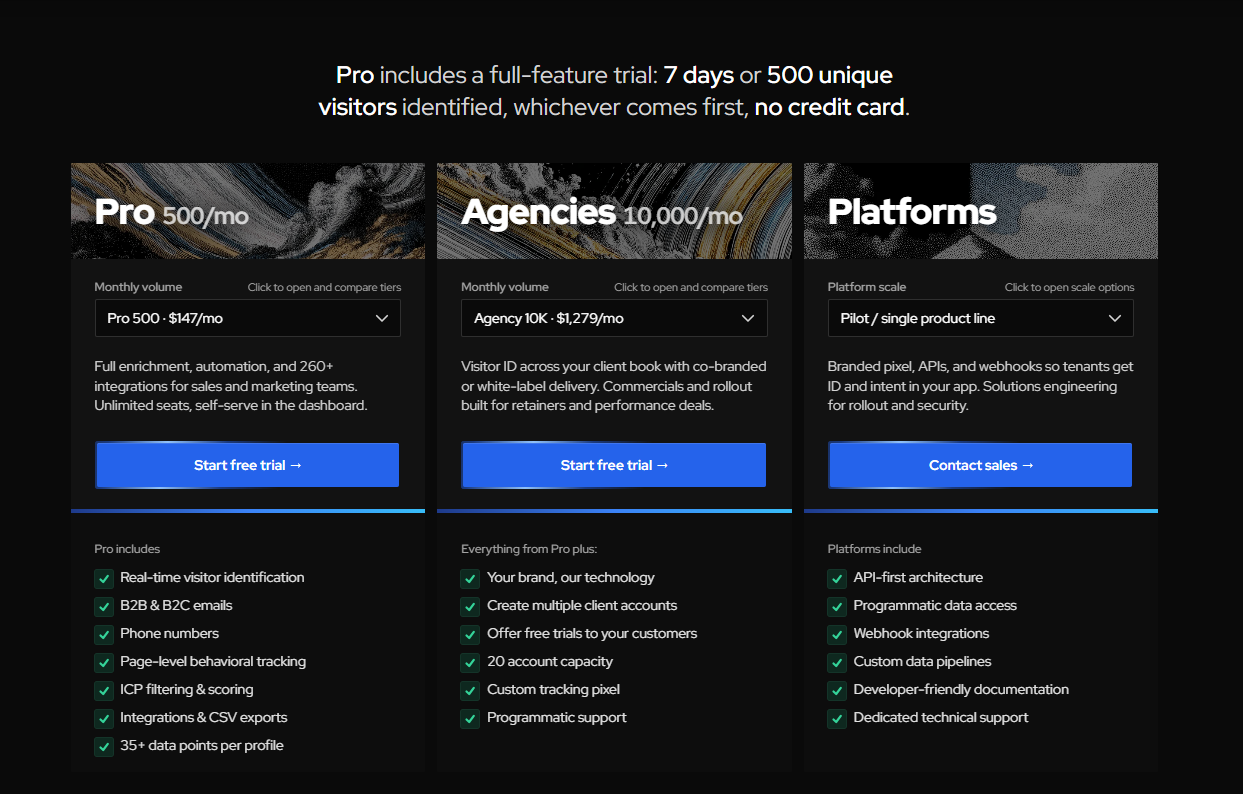
