Best AI SDR software in 2026

The AI SDR category barely existed as a defined market two years ago. Market research now pegs it at $4.12B in 2025, growing 29.5% annually. By 2026, procurement teams face 40+ vendors claiming "AI SDR" capabilities, but only a handful ship production-grade systems that handle multi-channel outbound, native contact databases, and inbound voice qualification under one roof.

This analysis evaluates 10 platforms across seven criteria: contact database size, channel coverage, personalization depth, deployment model, pricing transparency, customer support, and compliance certifications. The goal is to map which tool fits which buyer profile, not to declare a universal winner.

Major takeaways

Q: Which AI SDR platform has the largest native contact database?

A: 11x reports 400M+ verified contacts native to the platform. Apollo.io claims 275M contacts, though a portion is reportedly resold from third-party providers. Most competitors rely on integrations with ZoomInfo, Cognism, or Lusha rather than maintaining proprietary databases.

Q: Can AI SDR software handle inbound phone calls, or is it outbound-only?

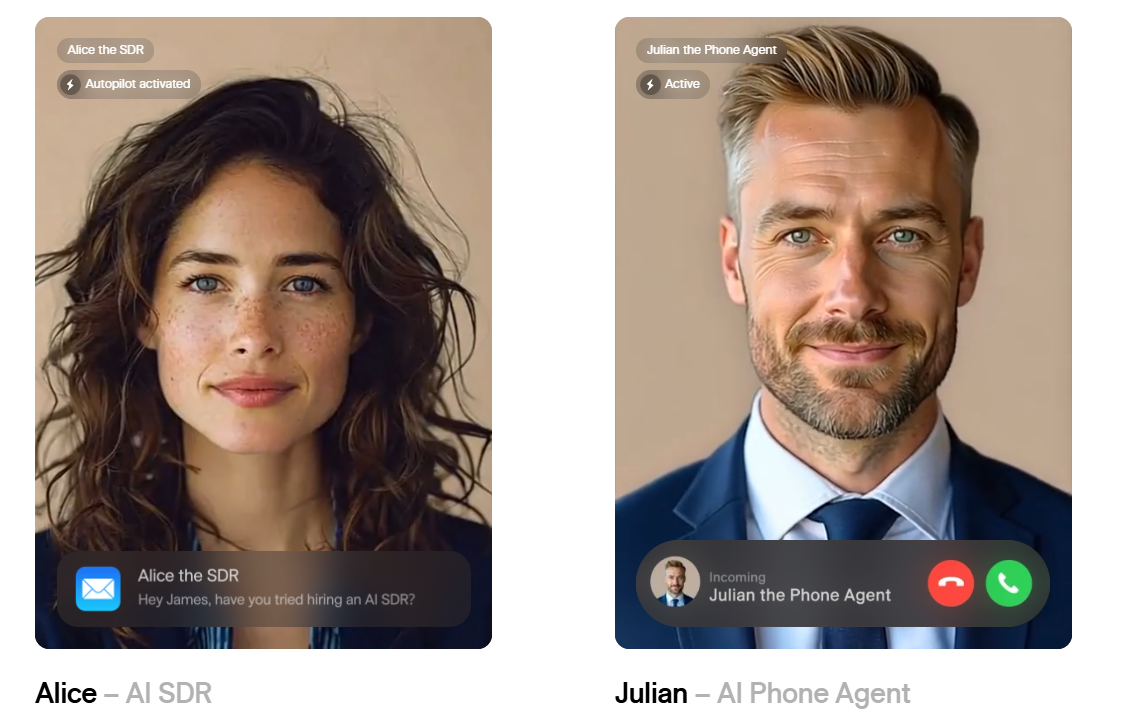

A: Most platforms are outbound-only (email, professional networks, SMS). 11x is the only major vendor that ships an inbound AI phone agent (Julian) alongside its outbound SDR (Alice) in a unified system. Outreach and SalesLoft offer voice dialing for human reps but do not automate inbound qualification with AI.

Q: What is the typical contract length and cancellation policy for AI SDR platforms?

A: Contract terms vary widely. Apollo.io and Instantly.ai offer month-to-month plans. Outreach and SalesLoft typically require 12-month commitments. Multiple G2 reviewers cite auto-renewal clauses and unclear cancellation windows as friction points, particularly for enterprise platforms. Always negotiate data portability and cancellation terms before signing.

How should teams evaluate AI SDR software?

Data sources and methodology

This analysis draws from G2, Trustpilot, TrustRadius, and Capterra reviews published between January 2024 and March 2026. Pricing data comes from vendor websites, Vendr benchmarking reports, and third-party SaaS spend analyses. Feature claims are cross-referenced against vendor documentation and user-reported capabilities in public forums.

We prioritized platforms with 50+ verified customer reviews and publicly documented enterprise deployments. Vendors that require NDAs to disclose basic feature sets or pricing were flagged for transparency risk.

Evaluation criteria

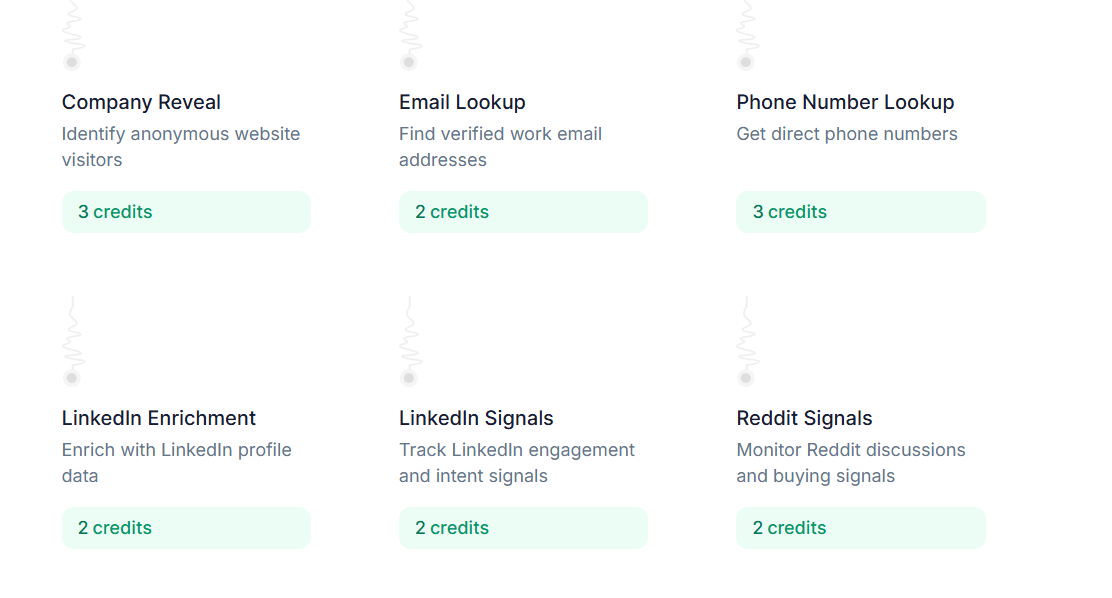

Contact database size. Native contact databases reduce dependency on third-party data providers and simplify procurement. We measured reported database size, verification methodology, and whether contacts are proprietary or resold.

Channel coverage. Email-only platforms create single-channel risk. We evaluated native support for professional networks, phone (outbound and inbound), SMS, and direct mail. Integrations with third-party dialers were noted but not counted as native capabilities.

Personalization depth. Template-based personalization (merge fields, conditional logic) is table stakes. Dynamic personalization (AI-generated messaging based on prospect signals, company news, and behavioral data) separates production-grade systems from pilot-stage tools.

Deployment model. Self-serve platforms ship faster but leave teams without strategic guidance. White-glove onboarding with dedicated customer success reduces time-to-value but increases cost. We flagged vendors that require multi-month implementations or custom integrations.

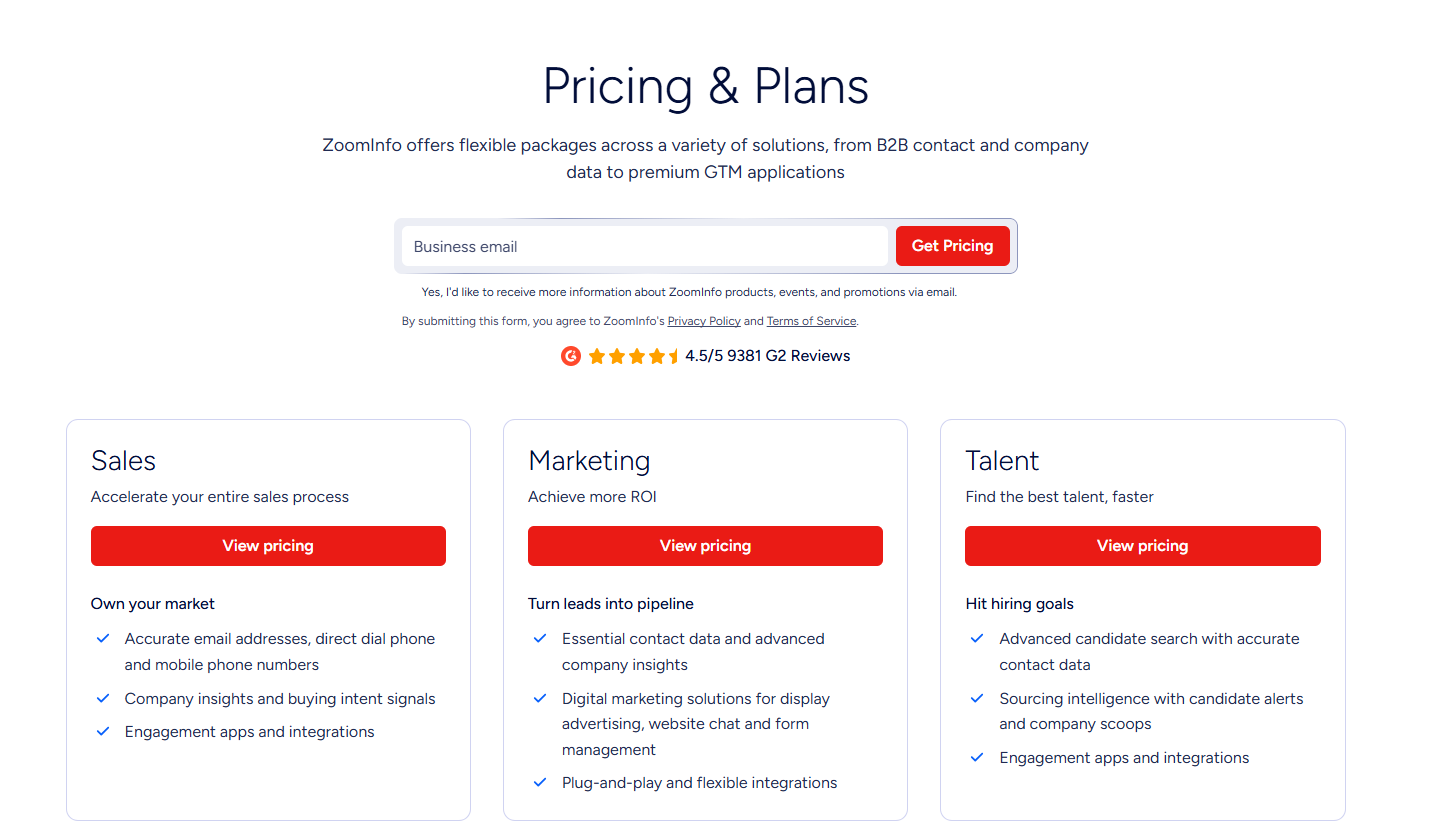

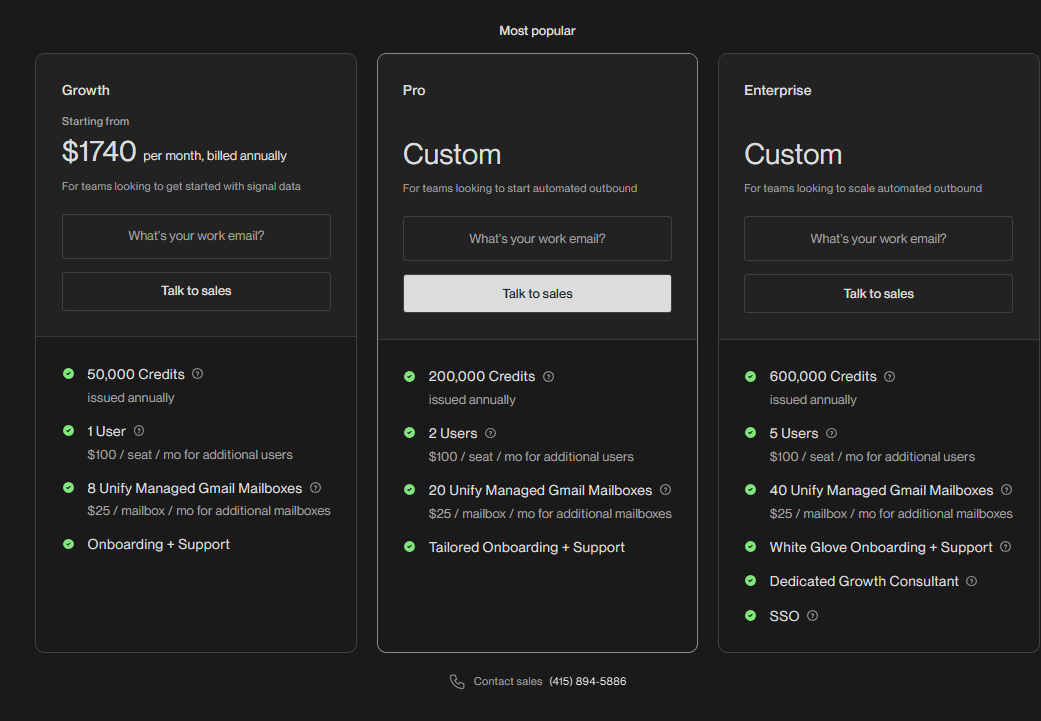

Pricing transparency. Platforms that publish pricing on their website reduce procurement friction. Custom-quote-only models signal either enterprise positioning or pricing inconsistency. We noted contract length, cancellation terms, and hidden costs (onboarding fees, data credits, overage charges).

Customer support. Email-only support works for technical users. Phone and Slack support reduce downtime for revenue-critical systems. We reviewed support SLAs, response times reported in G2 reviews, and whether dedicated CSMs are included or sold separately.

Compliance and security. SOC-2 Type II, GDPR compliance, and CAN-SPAM adherence are non-negotiable for enterprise buyers. We verified certifications and flagged platforms with public compliance incidents or unverified claims.
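One way to apply the seven criteria above is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1-to-5 scale, and the `vendor_a` scores are hypothetical placeholders, not values used in this analysis.

```python
# Hypothetical weights for the seven criteria; tune them to your own priorities.
WEIGHTS = {
    "contact_database": 0.20,
    "channel_coverage": 0.20,
    "personalization": 0.15,
    "deployment_model": 0.10,
    "pricing_transparency": 0.10,
    "customer_support": 0.10,
    "compliance": 0.15,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores (assumed 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    # Dividing by the total weight keeps the result on the 1-5 scale
    # even if the weights do not sum exactly to 1.
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Placeholder scores for an imaginary vendor, not findings from this analysis.
vendor_a = {
    "contact_database": 5,
    "channel_coverage": 5,
    "personalization": 5,
    "deployment_model": 5,
    "pricing_transparency": 1,
    "customer_support": 5,
    "compliance": 5,
}

print(round(weighted_score(vendor_a), 2))
```

Scoring every shortlisted vendor with the same weights makes trade-offs explicit, for example how much a custom-quote pricing model costs a vendor relative to broader channel coverage.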

Limitations of this analysis

Pricing data reflects publicly available information as of March 2026. Custom enterprise deals may differ. Feature claims are based on vendor documentation and third-party reviews; we did not conduct hands-on testing of every platform.

Several vendors (Amplemarket, Regie.ai) do not publish detailed pricing or contract terms. Estimates are based on third-party benchmarking reports and may not reflect current offers.

This analysis does not cover vertical-specific platforms (e.g., real estate, recruiting) or tools focused on account-based marketing (ABM) orchestration.

What is the best AI SDR software in 2026?

The best AI SDR software in 2026 depends on team size, outbound channels, and whether the company needs inbound AI voice qualification. 11x stands out for unified outbound and inbound automation, while Apollo.io is best for cost-conscious outbound teams and SalesLoft fits enterprise workflow orchestration.

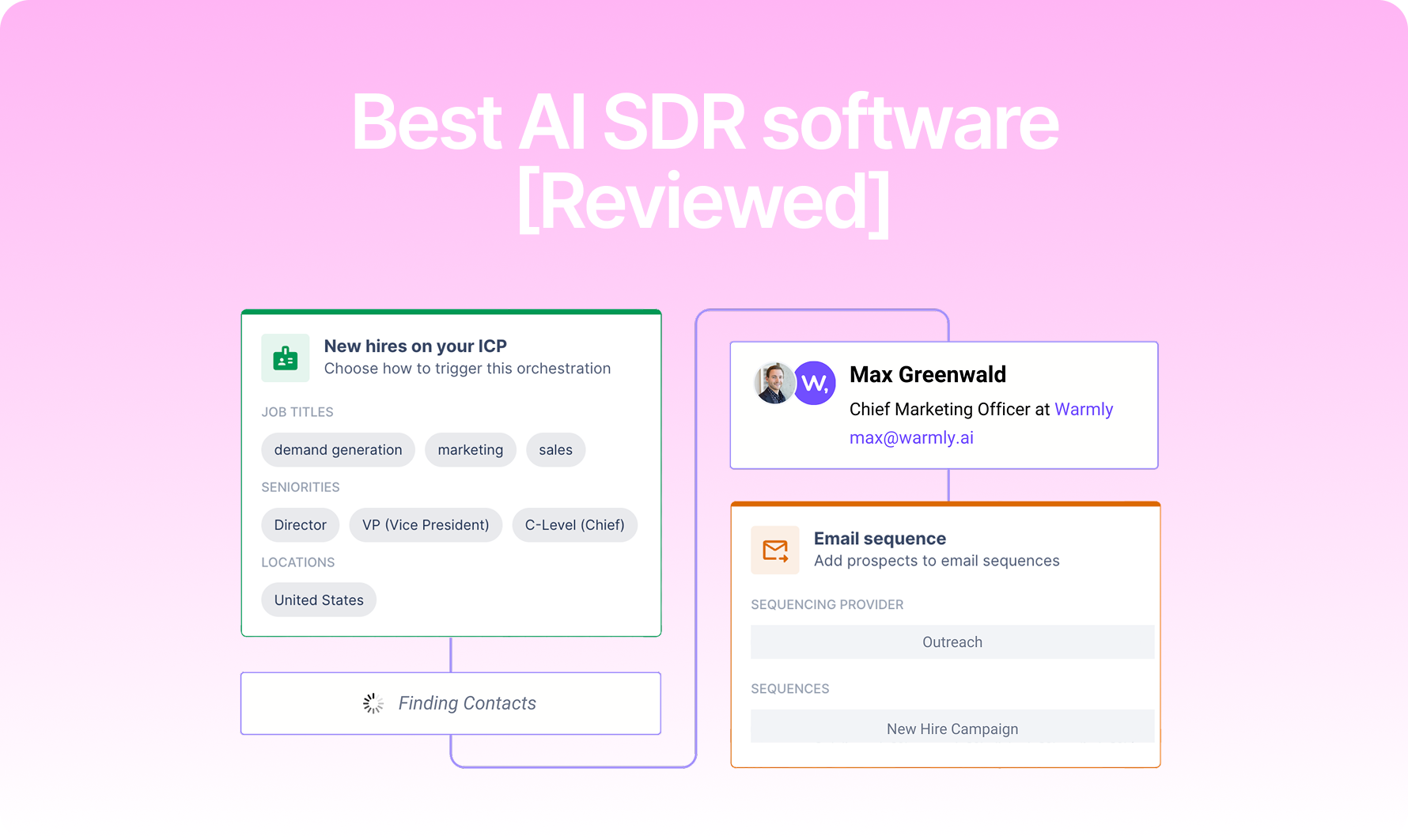

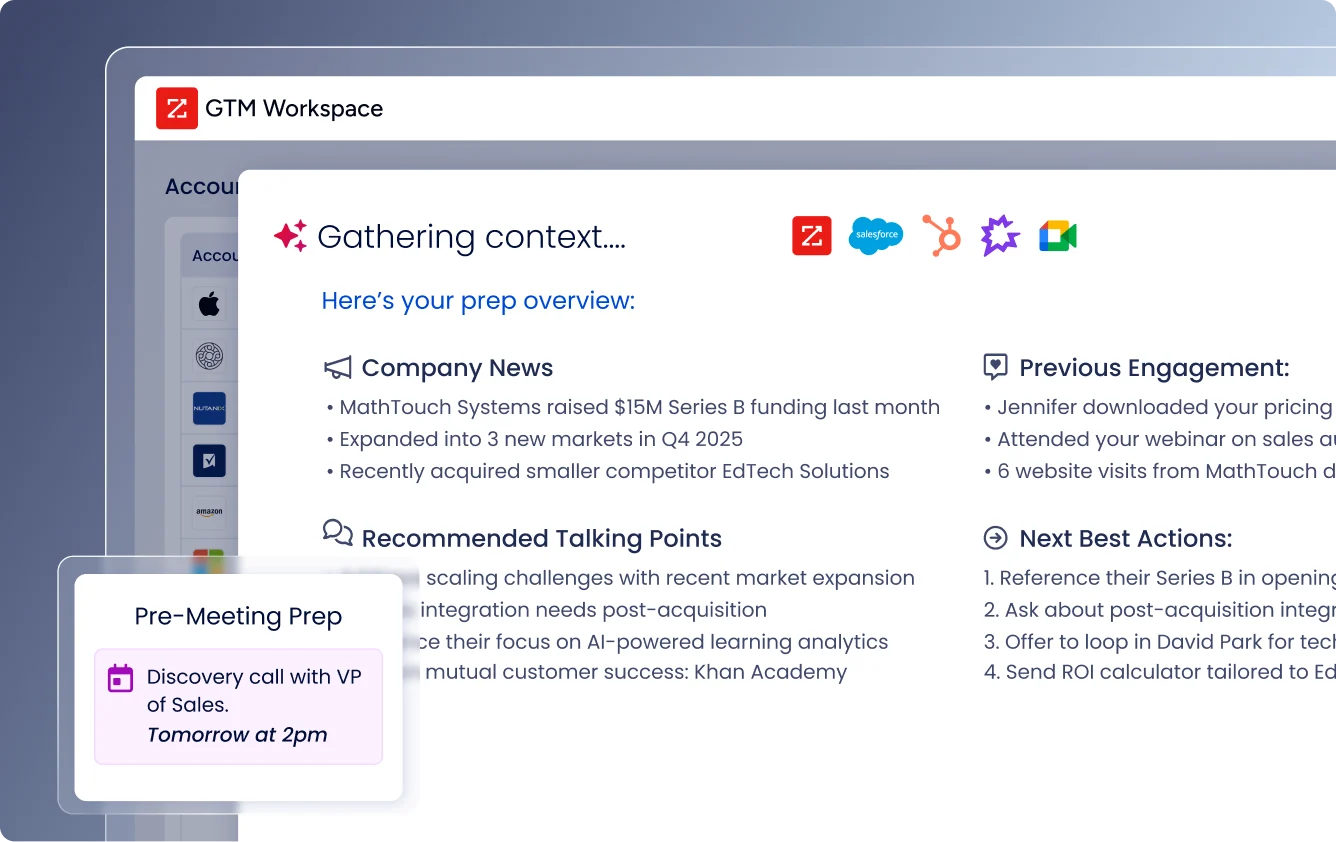

11x (Alice + Julian)

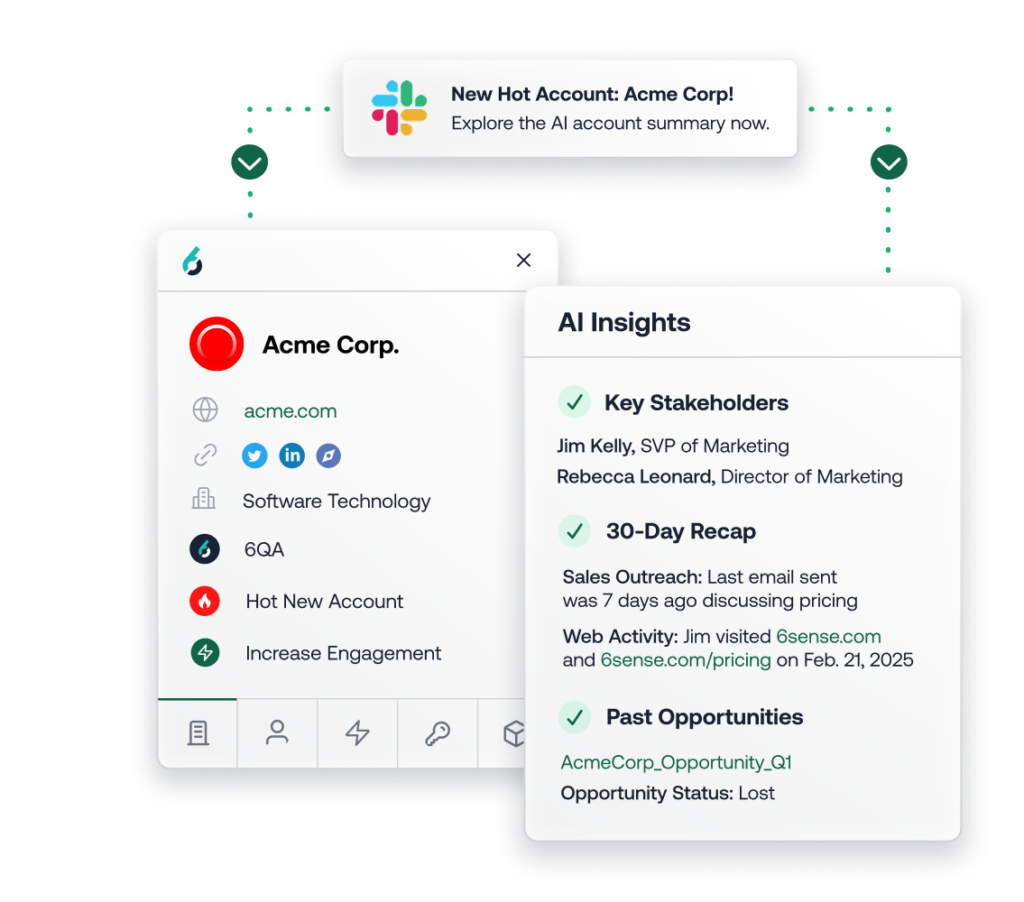

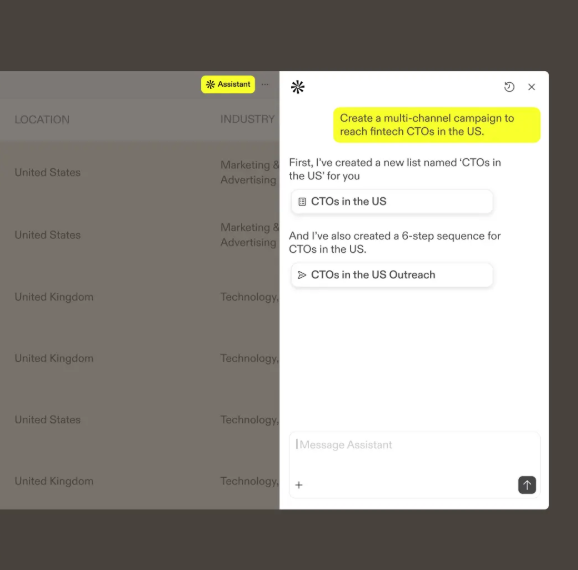

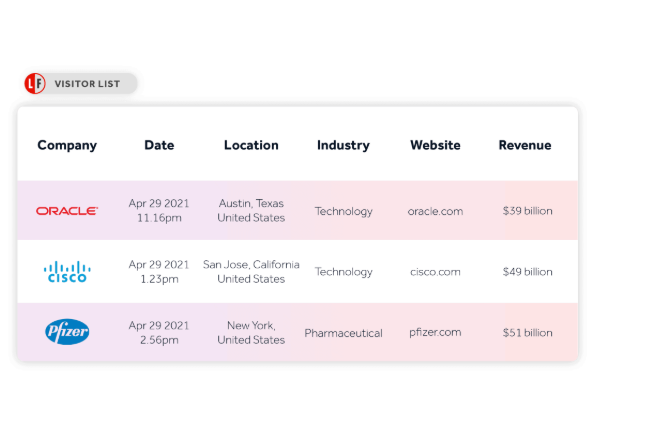

11x operates two AI agents: Alice (outbound SDR for email, professional networks, and multi-channel sequences) and Julian (inbound AI sales agent for qualification and routing). The platform ships with a native 400M+ verified contact database, website visitor tracking, and signals/triggers built in.

Alice handles outbound prospecting in 105+ languages, 24/7. Julian answers inbound calls, qualifies leads, and routes to human reps based on configurable criteria. Both agents integrate with Salesforce, HubSpot, and Outreach.

11x is SOC-2 Type II compliant with end-to-end encryption. The platform is backed by a16z, Benchmark, and HubSpot Ventures. Customers include Xerox, Checkr, Sage, and Rho.

Strengths. 11x is the only major platform that unifies outbound (Alice) and inbound voice (Julian) in a single system. The native 400M+ contact database eliminates dependency on third-party data providers. Multi-language support (105+ languages) and 24/7 operation enable global teams to scale without adding headcount.

G2 reviewers cite time savings and personalization depth as primary benefits. One reviewer noted, "I love how easy 11x is to set up, and it saves hours of manual work, which is a massive help for my team." Another highlighted deliverability: "Email delivery is great because emails don't land in spam thanks to the backend infrastructure, like the auto warm-up emails."

Gaps. 11x requires a demo and custom quote. The platform is designed for teams replacing or augmenting SDR capacity, not for individual users running low-volume campaigns. Self-serve onboarding is not available.

Pricing model. 11x uses custom, outcome-based pricing that varies by product, contact volume, channel mix, and deployment scope. Pricing and packaging for Alice, 11x's AI SDR, and Julian, 11x's AI Inbound Sales Agent, are detailed on their respective product pages. All contracts include dedicated customer success and onboarding. No free tier or month-to-month plans are offered.

Apollo.io

Apollo.io is a data platform with outreach capabilities. The platform claims 275M contacts, though a portion is reportedly resold from third-party providers. Apollo supports email, professional networks, and phone dialing (via integrations with Aircall and other dialers).

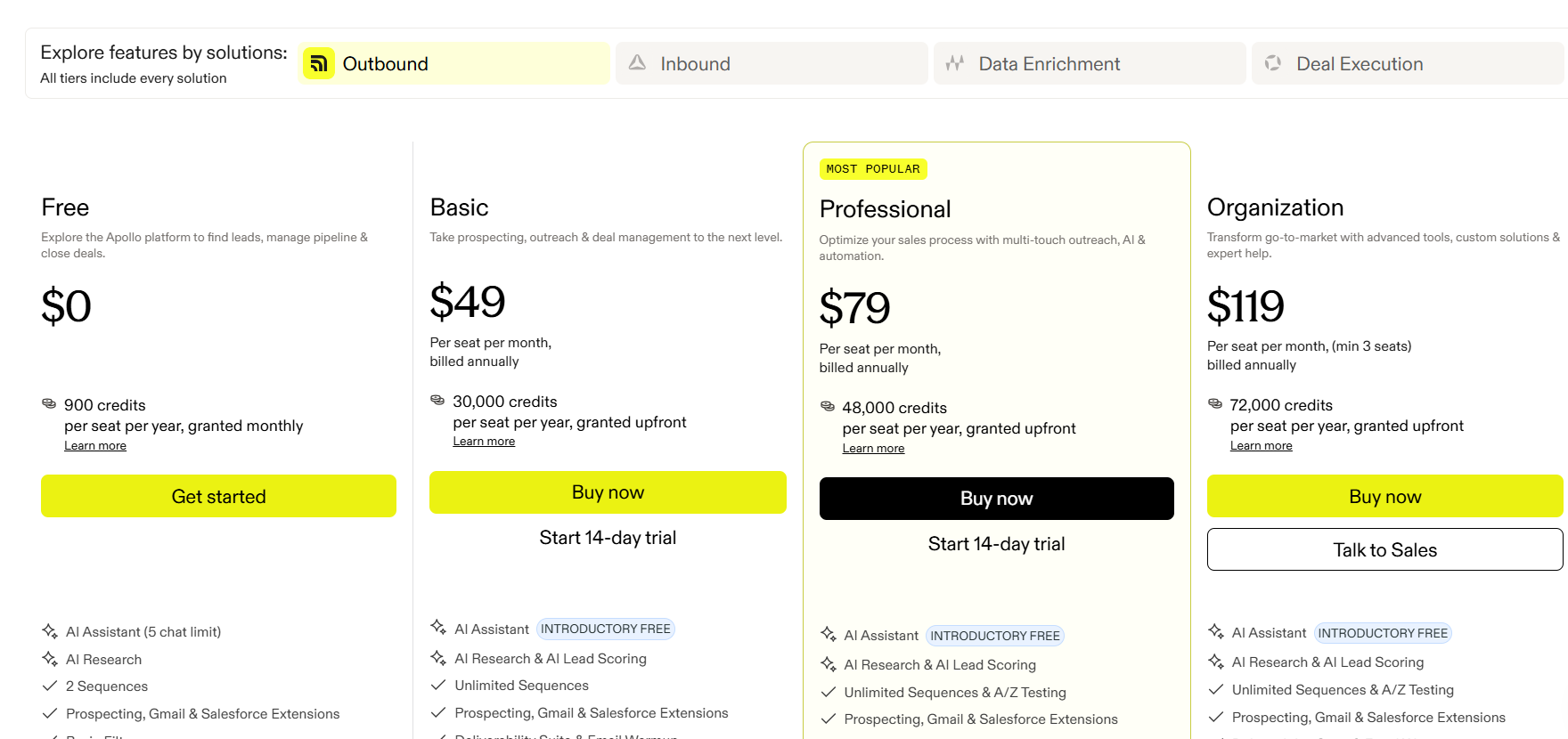

Strengths. Apollo publishes transparent pricing and offers a free tier with 50 contact credits per month. The platform includes email sequencing, reply detection, and basic AI writing assistance. Apollo integrates with Salesforce, HubSpot, Outreach, and SalesLoft.

Apollo's data enrichment features (company technographics, funding signals, job changes) are cited as strengths in G2 reviews. One reviewer noted, "Apollo's data is solid for mid-market companies, and the filters let us build precise lists."

Gaps. Apollo does not natively handle inbound phone calls or SMS. AI personalization is limited to template-based merge fields and basic content suggestions. Some users report data accuracy issues, particularly for smaller companies and international contacts.

Multiple G2 reviewers cite aggressive upsell tactics and unclear overage charges. One reviewer flagged, "We hit our contact limit mid-month and had to pay $500 extra to unlock more credits."

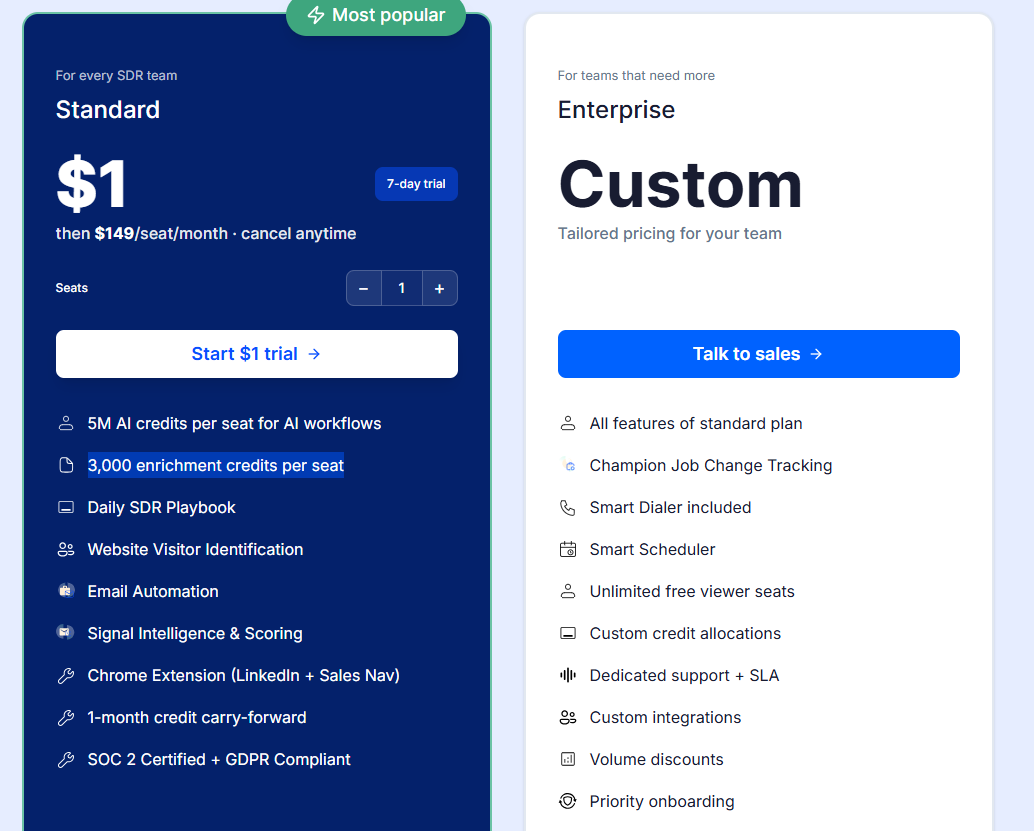

Pricing model. Free tier: 50 contact credits per month. Paid plans start at $49 per user per month (billed annually). Enterprise pricing is custom. Apollo charges per contact credit for data enrichment; overage fees apply if teams exceed their monthly allocation.

Clay

Clay is a data enrichment and workflow automation platform, not a traditional AI SDR tool. The platform aggregates data from 50+ sources (Apollo, ZoomInfo, Clearbit, professional networks, etc.) and lets users build custom workflows with conditional logic and AI-powered enrichment.

Strengths. Clay excels at data quality and workflow flexibility. Teams can chain together multiple data providers, validate contact information, and trigger personalized outreach based on enrichment results. Clay integrates with Instantly.ai, Smartlead, and other email platforms.

G2 reviewers cite Clay's learning curve as steep but worthwhile for technical users. One reviewer noted, "Clay is a data Swiss Army knife. If you know how to use it, you can build outreach workflows that no other platform can match."

Gaps. Clay does not include native outreach capabilities. Teams must integrate with external email or professional network tools. The platform requires technical expertise; non-technical users report frustration with the workflow builder.

Clay does not publish pricing on its website. Third-party sources estimate starting plans around $200–$300 per month, with enterprise contracts reaching $2,000+ per month depending on data credits and workflow complexity.

Pricing model. Custom quote. Estimated starting price is $200–$300 per month based on third-party benchmarking. Clay charges per data credit; overage fees apply if teams exceed their monthly allocation.

Instantly.ai

Instantly.ai is an email deliverability platform with AI writing assistance. The tool focuses on inbox rotation, warm-up automation, and reply detection. Instantly does not include a native contact database; users must import lists or integrate with Apollo, ZoomInfo, or other providers.

Strengths. Instantly publishes transparent pricing and offers month-to-month contracts. The platform includes unlimited email accounts and warm-up automation in all plans. Instantly integrates with Zapier, Salesforce, and HubSpot.

G2 reviewers cite deliverability as Instantly's primary strength. One reviewer noted, "Our open rates jumped 15% after switching to Instantly. The warm-up automation works."

Gaps. Instantly is email-only. The platform does not support professional networks, phone, SMS, or inbound call handling. AI personalization is limited to basic merge fields and template suggestions.

Some users report aggressive auto-renewal policies. One G2 reviewer flagged, "Instantly auto-renewed our annual plan without warning, and customer support took three weeks to process a refund."

Pricing model. Growth plan: $30 per month (month-to-month). Hypergrowth plan: $77.60 per month (billed annually). Enterprise pricing is custom. Instantly does not charge per contact or per email sent; all plans include unlimited sending.

Lemlist

Lemlist is a multi-channel outreach platform with email, professional networks, and phone capabilities. The tool includes AI writing assistance, image and video personalization, and basic CRM sync. Lemlist does not include a native contact database; users must import lists or integrate with third-party providers.

Strengths. Lemlist supports email, professional networks, and phone in a single platform. The tool includes advanced personalization features (dynamic images, custom landing pages, video messages). Lemlist integrates with Salesforce, HubSpot, and Pipedrive.

G2 reviewers cite ease of use and creative personalization options as strengths. One reviewer noted, "Lemlist's image personalization helped us stand out in crowded inboxes."

Gaps. Lemlist does not natively handle inbound phone calls or SMS. AI personalization is template-based; dynamic content generation is limited. Some users report deliverability issues when sending high volumes.

Multiple G2 reviewers cite customer support delays. One reviewer flagged, "Support took five days to respond to a critical deliverability issue."

Pricing model. Email Outreach plan: $59 per user per month (billed annually). Sales Engagement plan: $99 per user per month (billed annually). Custom pricing for enterprise. Lemlist does not charge per contact or per email sent.

Smartlead

Smartlead is an email deliverability platform with inbox rotation and warm-up automation. The tool focuses on high-volume cold email campaigns and includes basic AI writing assistance. Smartlead does not include a native contact database.

Strengths. Smartlead publishes transparent pricing and offers unlimited email accounts in all plans. The platform includes warm-up automation, reply detection, and basic CRM sync. Smartlead integrates with Zapier and webhooks.

G2 reviewers cite deliverability and inbox rotation as primary strengths. One reviewer noted, "Smartlead kept our emails out of spam even at 10,000 sends per day."

Gaps. Smartlead is email-only. The platform does not support professional networks, phone, SMS, or inbound call handling. AI personalization is limited to basic merge fields.

Some users report unclear billing practices. One G2 reviewer flagged, "Smartlead charged us for an extra month after we cancelled, and it took three weeks to get a refund."

Pricing model. Basic plan: $39 per month. Pro plan: $94 per month. Custom pricing for enterprise. Smartlead does not charge per contact or per email sent; all plans include unlimited sending.

Reply.io

Reply.io is a multi-channel sales engagement platform with email, professional networks, phone, and SMS capabilities. The tool includes AI writing assistance, reply detection, and CRM sync. Reply does not include a native contact database; users must import lists or integrate with third-party providers.

Strengths. Reply supports email, professional networks, phone, and SMS in a single platform. The tool includes advanced sequencing (conditional logic, A/B testing, multi-touch campaigns). Reply integrates with Salesforce, HubSpot, and Pipedrive.

G2 reviewers cite multi-channel capabilities and ease of use as strengths. One reviewer noted, "Reply's professional networks automation saved us 10 hours per week."

Gaps. Reply does not natively handle inbound phone calls. AI personalization is template-based; dynamic content generation is limited. Some users report deliverability issues when sending high volumes.

Multiple G2 reviewers cite pricing increases and unclear contract terms. One reviewer flagged, "Reply raised our price by 30% at renewal without warning."

Pricing model. Starter plan: $60 per user per month (billed annually). Professional plan: $90 per user per month (billed annually). Custom pricing for enterprise. Reply does not charge per contact or per email sent.

SalesLoft

SalesLoft is an enterprise revenue orchestration platform with email, professional networks, phone, and SMS capabilities. The tool includes AI writing assistance, conversation intelligence, and deep CRM integration. SalesLoft does not include a native contact database.

Strengths. SalesLoft competes directly with Outreach in the enterprise segment. The platform includes advanced analytics, forecasting, and native integrations with Salesforce and Microsoft Dynamics. SalesLoft supports voice dialing for human reps but does not automate inbound qualification with AI.

G2 reviewers cite conversation intelligence and analytics as strengths. One reviewer noted, "SalesLoft's call recording and AI summaries helped our team close 20% more deals."

Gaps. SalesLoft does not natively handle inbound phone calls with AI. The platform requires multi-month implementations and custom integrations for complex workflows. Pricing is not published; third-party sources estimate enterprise contracts start around $100 per user per month.

Multiple G2 reviewers cite high costs and aggressive upsell tactics. One reviewer flagged, "SalesLoft quoted us $150 per user per month, then added $30,000 in onboarding fees."

Pricing model. Custom quote. Estimated starting price is $100–$150 per user per month based on third-party benchmarking. SalesLoft typically requires 12-month commitments.

Amplemarket

Amplemarket is an AI-powered sales platform with email, professional networks, and phone capabilities. The tool emphasizes AI personalization and intent signals. Amplemarket includes a contact database, though the size and verification methodology are not publicly disclosed.

Strengths. Amplemarket includes AI-generated messaging based on prospect signals (job changes, funding rounds, company news). The platform integrates with Salesforce and HubSpot. G2 reviewers cite personalization depth as a primary strength.

Gaps. Amplemarket does not natively handle inbound phone calls or SMS. Pricing is not published; third-party sources estimate mid-market contracts start around $15,000 annually. Some users report data accuracy issues, particularly for international contacts.

Pricing model. Custom quote. Estimated starting price is $1,200–$1,500 per month based on third-party benchmarking. Contract length and cancellation terms are not publicly disclosed.

Regie.ai

Regie.ai is an AI content generation platform with email sequencing and CRM sync. The tool focuses on AI-generated messaging and does not include a native contact database. Regie supports email and professional networks; phone and SMS capabilities are limited.

Strengths. Regie.ai generates email copy, professional network messages, and call scripts using GPT-based models. The platform integrates with Salesforce and HubSpot. G2 reviewers cite content quality as a primary strength.

Gaps. Regie.ai does not natively handle inbound phone calls, SMS, or direct mail. The platform requires users to import contact lists from external sources. Pricing is not published; third-party sources estimate mid-market contracts start around $10,000 annually.

Pricing model. Custom quote. Estimated starting price is $800–$1,200 per month based on third-party benchmarking. Contract length and cancellation terms are not publicly disclosed.

Which AI SDR platform is best for different types of sales teams?

What are the biggest risks and limitations of AI SDR software?

Data quality and contact accuracy. Contact databases degrade over time. Apollo.io and 11x verify contacts using multiple sources, but no platform guarantees 100% accuracy. Buyers should test data quality during pilots and negotiate refunds or credits for invalid contacts.

Over-reliance on email (single-channel risk). Email-only platforms (Instantly.ai, Smartlead) create single-channel risk. If deliverability drops or inboxes tighten spam filters, campaigns fail. Multi-channel platforms (11x, Reply.io, Lemlist) reduce risk by spreading outreach across email, professional networks, phone, and SMS.

Personalization depth vs. template fatigue. Template-based personalization (merge fields, conditional logic) is table stakes. Dynamic personalization (AI-generated messaging based on prospect signals) separates production-grade systems from pilot-stage tools. Buyers should test personalization depth during pilots and verify that AI-generated messages pass the "sounds human" test.

Compliance risk (CAN-SPAM, GDPR, TCPA). Cold email and phone outreach carry legal risk. Platforms must include unsubscribe links, honor opt-outs, and maintain do-not-call lists. SOC-2 Type II certification and GDPR compliance are non-negotiable for enterprise buyers. Buyers should verify certifications and audit compliance features before deploying.

Integration complexity and CRM sync issues. Most platforms integrate with Salesforce and HubSpot, but sync quality varies. Some platforms sync only email activity; others sync professional networks, phone, and SMS. Buyers should map integration requirements and test sync reliability during pilots.

Pricing opacity and contract lock-in. Custom-quote-only models signal either enterprise positioning or pricing inconsistency. Buyers should negotiate contract length, cancellation terms, and data portability before signing. Multiple G2 reviewers cite auto-renewal clauses and unclear cancellation windows as friction points.

Customer support and onboarding gaps. Email-only support works for technical users. Phone and Slack support reduce downtime for revenue-critical systems. Buyers should verify support SLAs and whether dedicated CSMs are included or sold separately.

How to evaluate AI SDR software for your team

Define your ICP and channel mix requirements. Map which channels (email, professional networks, phone, SMS) your ICP responds to. Email-only platforms work for digital-first buyers. Multi-channel platforms fit ICPs that require phone or professional network outreach.

Audit your existing contact data and CRM hygiene. Platforms with native contact databases (11x, Apollo.io) reduce dependency on third-party data providers. Platforms without native databases (Clay, Instantly.ai, Smartlead) require clean contact lists and CRM hygiene.

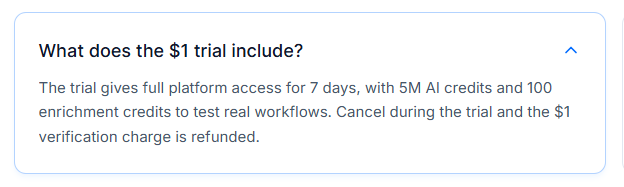

Test deliverability and personalization depth in pilots. Run 30-day pilots with 500–1,000 contacts. Measure open rates, reply rates, and deliverability. Test whether AI-generated messages pass the "sounds human" test.
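The pilot metrics above can be computed from four raw counts. This is a minimal sketch under stated assumptions: the `PilotResult` class is hypothetical, "deliverability" is approximated as non-bounced sends divided by total sends (it does not measure inbox versus spam placement), and open and reply rates are measured against delivered emails. Vendors may define these rates differently, so confirm definitions before comparing dashboards.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Raw counts exported from a pilot campaign (hypothetical structure)."""
    sent: int
    bounced: int
    opened: int
    replied: int

    @property
    def delivered(self) -> int:
        # Emails that did not bounce; a proxy, not inbox placement.
        return self.sent - self.bounced

    def rates(self) -> dict:
        if self.sent <= 0 or self.delivered <= 0:
            raise ValueError("pilot needs at least one delivered email")
        return {
            "deliverability": self.delivered / self.sent,
            "open_rate": self.opened / self.delivered,
            "reply_rate": self.replied / self.delivered,
        }


# Made-up counts for a 1,000-contact pilot.
pilot = PilotResult(sent=1000, bounced=50, opened=380, replied=38)
for name, value in pilot.rates().items():
    print(f"{name}: {value:.1%}")
```

Running the same calculation on each vendor's pilot export keeps the comparison consistent even when the platforms report rates on different bases.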

Validate compliance and security certifications. Verify SOC-2 Type II, GDPR compliance, and CAN-SPAM adherence. Audit unsubscribe workflows, do-not-call lists, and data retention policies.

Map integration requirements and workflow dependencies. Test CRM sync reliability. Verify that the platform syncs all activity (email, professional networks, phone, SMS) back to Salesforce or HubSpot. Map workflow dependencies (e.g., does the platform trigger Slack alerts or webhook events?).

Model total cost of ownership (licensing, onboarding, maintenance). Add up licensing fees, onboarding costs, data credits, and overage charges. Factor in the cost of dedicated CSMs or technical support. Compare total cost of ownership across platforms.
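A simple model makes the comparison concrete. The function below is a sketch, not a pricing calculator: its parameter names are assumptions, the seat count is hypothetical, and the dollar inputs echo figures quoted by reviewers earlier in this article rather than confirmed vendor quotes.

```python
def total_cost_of_ownership(
    seats: int,
    price_per_seat_month: float,
    months: int = 12,
    onboarding_fee: float = 0.0,
    data_credits_month: float = 0.0,
    expected_overage_month: float = 0.0,
    support_addon_month: float = 0.0,
) -> float:
    """Sum one-time and recurring costs over the contract term."""
    recurring_per_month = (
        seats * price_per_seat_month
        + data_credits_month
        + expected_overage_month
        + support_addon_month
    )
    return onboarding_fee + recurring_per_month * months


# Scenario A: 10 seats (hypothetical) at $150 per seat with a $30,000 onboarding fee.
enterprise = total_cost_of_ownership(10, 150.0, onboarding_fee=30_000.0)

# Scenario B: 10 seats at $49 per seat with an assumed $500 monthly overage.
self_serve = total_cost_of_ownership(10, 49.0, expected_overage_month=500.0)

print(f"Scenario A, 12 months: ${enterprise:,.0f}")
print(f"Scenario B, 12 months: ${self_serve:,.0f}")
```

Modeling the full term rather than the monthly seat price surfaces one-time fees and overage charges that per-seat comparisons hide.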

Negotiate contract terms (length, cancellation, data portability). Negotiate contract length, auto-renewal clauses, and cancellation windows. Verify data portability (can you export contact lists and activity logs if you cancel?). Negotiate refunds or credits for invalid contacts.

Frequently asked questions

What is the difference between an AI SDR and a sales engagement platform?

AI SDR platforms automate prospecting, outreach, and qualification tasks traditionally performed by human SDRs. Sales engagement platforms such as SalesLoft orchestrate multi-channel workflows for human reps but do not replace SDR headcount. By 2026, the line between the two categories has blurred: SalesLoft added AI writing assistants, and pure-play AI SDR vendors like 11x added enterprise workflow features.

Can AI SDR software replace human SDRs entirely?

AI SDR platforms can handle high-volume outbound prospecting, email sequencing, and basic qualification. They cannot handle complex objection handling, multi-stakeholder negotiations, or relationship-building that requires human judgment. Most teams use AI SDRs to augment human capacity, not replace it entirely. 11x is the only platform that automates inbound phone qualification with AI (Julian), which reduces the need for human SDRs on inbound speed-to-lead workflows.

How do AI SDR platforms handle GDPR and CAN-SPAM compliance?

Platforms must include unsubscribe links in every email, honor opt-outs within 10 business days, and maintain do-not-call lists for phone outreach. SOC-2 Type II certification and GDPR compliance are non-negotiable for enterprise buyers. Buyers should verify certifications and audit compliance features before deploying. 11x is SOC-2 Type II compliant with end-to-end encryption.
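When auditing an unsubscribe workflow, it helps to compute the date by which an opt-out must be honored. The sketch below counts 10 business days forward from the day a request is received. The function name is hypothetical, and the counting convention is an assumption: it skips weekends only, ignores public holidays, and starts counting on the day after receipt.

```python
from datetime import date, timedelta


def opt_out_deadline(received: date, business_days: int = 10) -> date:
    """Return the date `business_days` weekdays after `received`.

    Assumption: weekends are skipped, public holidays are not.
    """
    current = received
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


# An opt-out received on Monday, 2 March 2026.
print(opt_out_deadline(date(2026, 3, 2)))
```

Comparing this deadline against the timestamp at which a platform actually suppresses the contact gives a quick pass/fail check during a pilot.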

What is the typical ROI timeline for AI SDR software?

ROI timelines vary by deployment model. Self-serve platforms (Apollo.io, Instantly.ai) ship in days but require teams to manage campaigns manually. White-glove platforms (11x, SalesLoft) take 30 to 90 days to deploy but include dedicated onboarding and strategic guidance. Most teams see positive ROI within 90 days if the platform is deployed correctly.

Do AI SDR platforms integrate with Salesforce and HubSpot?

Most platforms integrate with Salesforce and HubSpot, but sync quality varies. Some platforms sync only email activity; others sync professional networks, phone, and SMS. Buyers should test sync reliability during pilots and verify that the platform syncs all activity back to the CRM without manual intervention.

How accurate are the contact databases in AI SDR platforms?

Contact databases degrade over time. Apollo.io and 11x verify contacts using multiple sources, but no platform guarantees 100% accuracy. Buyers should test data quality during pilots and negotiate refunds or credits for invalid contacts. Third-party benchmarking reports estimate contact accuracy ranges from 70% to 90% depending on the provider and contact type.

Can AI SDR software handle inbound lead qualification?

Most platforms are outbound-only. 11x is the only major platform that natively handles inbound phone calls with AI (Julian). Julian qualifies leads, routes to human reps, and syncs activity back to Salesforce or HubSpot. Competitors require integrations with third-party dialers or manual phone handling.

What is the difference between 11x Alice and competitors like Apollo?

11x unifies outbound (Alice) and inbound voice (Julian) in a single platform. Alice handles email, professional networks, and multi-channel sequences in 105+ languages. Julian automates inbound phone qualification and routing. 11x includes a native 400M+ verified contact database. Apollo focuses on outbound email and professional networks. It includes a 275M contact database but does not automate inbound phone calls with AI.

How much does AI SDR software cost in 2026?

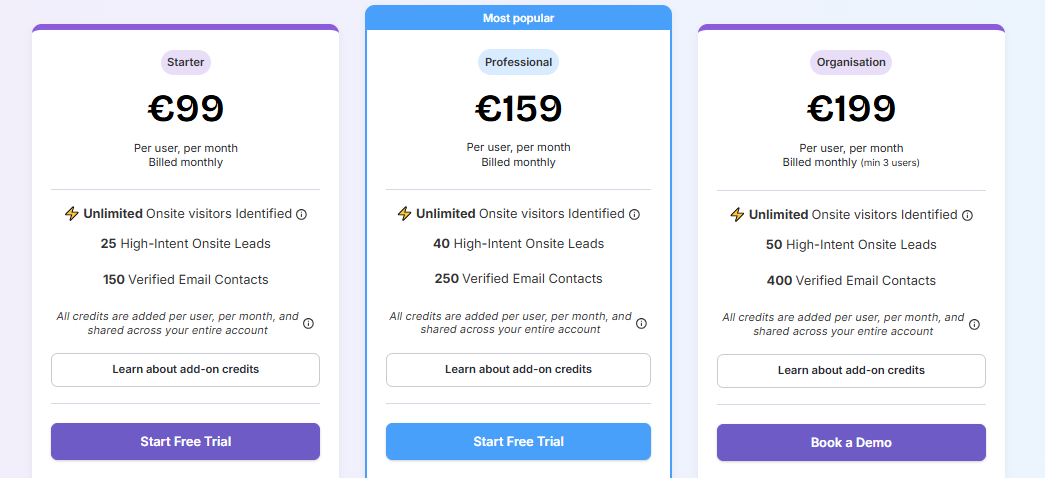

Pricing varies widely. Email-first platforms (Instantly.ai, Smartlead) start around $30–$40 per month. Multi-channel platforms (Apollo.io, Lemlist, Reply.io) range from roughly $50 to $100 per user per month. Enterprise platforms (SalesLoft, 11x) require custom quotes; third-party benchmarking estimates suggest $100–$150 per user per month for SalesLoft. 11x pricing is custom based on contact volume and deployment scope.

What are the biggest risks when deploying AI SDR software?

The biggest risks are data quality issues, compliance violations, and over-reliance on single-channel outreach. Buyers should test data quality during pilots, verify SOC-2 Type II and GDPR compliance, and deploy multi-channel platforms to reduce single-channel risk. Contract lock-in and unclear cancellation terms are also common friction points; buyers should negotiate contract length and data portability before signing.
